We have a Redis-cluster with Master-slave replication and Sentinel, see the Redis: replication, part 2 – Master-Slave replication, and Redis Sentinel, Redis: fork – Cannot allocate memory, Linux, virtual memory and vm.overcommit_memory, and Redis: main configuration parameters and performance tuning overview posts.

We have a Redis-cluster with Master-slave replication and Sentinel, see the Redis: replication, part 2 – Master-Slave replication, and Redis Sentinel, Redis: fork – Cannot allocate memory, Linux, virtual memory and vm.overcommit_memory, and Redis: main configuration parameters and performance tuning overview posts.

The system worked great until we started using it much more actively.

Contents

Redis info

A few useful troubleshooting commands.

To check all keys in all database use the INFO command:

[simterm]

admin@bttrm-production-console:~$ redis-cli -h 10.0.3.105 -p 6389 INFO keyspace # Keyspace db0:keys=5,expires=0,avg_ttl=0 db4:keys=16988,expires=2698,avg_ttl=30025662 db5:keys=138713,expires=90388,avg_ttl=7435947

[/simterm]

And keys size – with the bigkeys command:

[simterm]

admin@bttrm-production-console:~$ redis-cli -h 10.0.3.105 -p 6389 -n 5 --bigkeys ... [00.00%] Biggest string found so far 'projectname_aba3b5bf1af24246b465826658b3c375' with 45 bytes ... [38.56%] Biggest string found so far 'projectname_44bb0e2dd7f4fabf41ee09001791bdc4' with 4627517 bytes -------- summary ------- Sampled 150726 keys in the keyspace! Total key length in bytes is 6782670 (avg len 45.00) Biggest string found 'projectname_44bb0e2dd7f4fabf41ee09001791bdc4' has 4627517 bytes

[/simterm]

The usfull option --stat:

[simterm]

root@bttrm-production-console:/home/admin# redis-cli -h 10.0.3.105 -p 6389 --stat -i 2 ------- data ------ --------------------- load -------------------- - child - keys mem clients blocked requests connections 10290781 3.80G 12 0 37279511 (+0) 79301 ... 10292946 3.81G 10 0 37283233 (+701) 79502 ...

[/simterm]

And a monitor:

[simterm]

root@bttrm-production-console:/home/admin# redis-cli -h 10.0.3.105 -p 6389 monitor

...

1582037638.965389 [5 10.0.3.92:58594] "SET" "projectname_e7e0a3ed5251cb36e2925957981e9faa" "a:2:{***}

1582037638.967345 [5 10.0.3.71:40906] "SET" "projectname_80d9171937e4463d0238bcc42a54aead" "a:2:{***}

1582037638.967411 [5 10.0.3.71:40684] "SET" "projectname_e8b81cd503d87be74070aa012b22be4a" "a:2:{***}

...

[/simterm]

And slowlog:

[simterm]

10.0.3.105:6389> SLOWLOG get 2

1) 1) (integer) 9

2) (integer) 1582119750

3) (integer) 21507206

4) 1) "FLUSHDB"

2) 1) (integer) 8

2) (integer) 1582058687

3) (integer) 71653

4) 1) "PSYNC"

2) "?"

3) "-1"

[/simterm]

Also, check the latency.

Now, let’s go to the error.

The “psync scheduled to be closed ASAP for overcoming of output buffer limits” error

On one calm evening, our monitoring sends an alert that the cluster is down.

Redis master log during that issue was:

[simterm]

2244:C 17 Feb 19:48:12.464 * DB saved on disk 2244:C 17 Feb 19:48:12.527 * RDB: 370 MB of memory used by copy-on-write 1911:M 17 Feb 19:48:12.681 * Background saving terminated with success 1911:M 17 Feb 19:48:12.681 * Starting BGSAVE for SYNC with target: disk 1911:M 17 Feb 19:48:12.744 * Background saving started by pid 2397 1911:M 17 Feb 19:48:13.251 * Slave 10.0.3.71:6389 asks for synchronization 1911:M 17 Feb 19:48:13.251 * Full resync requested by slave 10.0.3.71:6389 1911:M 17 Feb 19:48:13.251 * Waiting for end of BGSAVE for SYNC 1911:M 17 Feb 19:48:15.743 # Client id=3144 addr=10.0.3.92:40313 fd=104 name= age=31 idle=31 flags=S db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=11284 oll=1203 omem=269714472 events=r cmd=psync scheduled to be closed ASAP for overcoming of output buffer limits. 1911:M 17 Feb 19:48:15.743 # Client id=4300 addr=10.0.3.71:40029 fd=110 name= age=2 idle=2 flags=S db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=11284 oll=1203 omem=269714472 events=r cmd=psync scheduled to be closed ASAP for overcoming of output buffer limits. 1911:M 17 Feb 19:48:15.754 # Connection with slave 10.0.3.92:6389 lost. 1911:M 17 Feb 19:48:15.756 # Connection with slave 10.0.3.71:6389 lost. 1911:M 17 Feb 19:48:16.251 * Slave 10.0.3.71:6389 asks for synchronization 1911:M 17 Feb 19:48:16.251 * Full resync requested by slave 10.0.3.71:6389 1911:M 17 Feb 19:48:16.251 * Can't attach the slave to the current BGSAVE. Waiting for next BGSAVE for SYNC

[/simterm]

From all the text about we are most interested in the “psync scheduled to be closed ASAP for overcoming of output buffer limits” message.

A “quick-fix” – client-output-buffer-limit

The solution is to increase the client-output-buffer-limit.

In short, it is a buffer used by the Redis Master to store a data that was changed during synchronization with its slave.

Check it current value:

[simterm]

root@bttrm-production-console:/home/admin# redis-cli -h 10.0.3.105 -p 6389 10.0.3.105:6389> config get client-output-buffer-limit 1) "client-output-buffer-limit" 2) "normal 0 0 0 slave 268435456 67108864 60 pubsub 33554432 8388608 60"

[/simterm]

The format here:

client-output-buffer-limit <class> <hard limit> <soft limit> <soft seconds>

And default values:

client-output-buffer-limitnormal 0 0 0client-output-buffer-limitslave 256mb 64mb 60client-output-buffer-limitpubsub 32mb 8mb 60

With such settings, a connection to a slave will be closed immediately if the <hard limit> – 256 MB – reached, or if the <soft limit> – 64 MB – exceeded over time from the <soft seconds> – 60 seconds.

For us – we need to increase values in the slave 268435456 67108864 60.

On the Master host set a new value, 512 MB:

[simterm]

10.0.3.105:6389> config set client-output-buffer-limit "slave 4194990176 4194990176 0" OK 10.0.3.105:6389> config get client-output-buffer-limit 1) "client-output-buffer-limit" 2) "normal 0 0 0 slave 4194990176 4194990176 0 pubsub 33554432 8388608 60"

[/simterm]

Actually, I had to increase the buffer up to the 512 MB, then 1 GB, and 2… and 4, 8 – and only with the 10 BG the service was able to finish the synchronization prcoess., although data in memory was only about 5 GB:

[simterm]

1911:M 17 Feb 20:30:21.308 * Slave 10.0.3.71:6389 asks for synchronization 1911:M 17 Feb 20:30:21.308 * Full resync requested by slave 10.0.3.71:6389 1911:M 17 Feb 20:30:21.308 * Starting BGSAVE for SYNC with target: disk 1911:M 17 Feb 20:30:21.389 * Background saving started by pid 8166 1911:M 17 Feb 20:30:21.851 * Slave 10.0.3.92:6389 asks for synchronization 1911:M 17 Feb 20:30:21.851 * Full resync requested by slave 10.0.3.92:6389 1911:M 17 Feb 20:30:21.851 * Waiting for end of BGSAVE for SYNC 8166:C 17 Feb 20:30:55.069 * DB saved on disk 8166:C 17 Feb 20:30:55.148 * RDB: 851 MB of memory used by copy-on-write 1911:M 17 Feb 20:30:55.365 * Background saving terminated with success 1911:M 17 Feb 20:31:05.160 * Synchronization with slave 10.0.3.92:6389 succeeded 1911:M 17 Feb 20:31:05.223 * Synchronization with slave 10.0.3.71:6389 succeeded

[/simterm]

And a slave’s log:

[simterm]

11347:S 17 Feb 20:29:06.523 * Connecting to MASTER 10.0.3.105:6389 11347:S 17 Feb 20:29:06.524 * MASTER <-> SLAVE sync started 11347:S 17 Feb 20:29:06.526 * Non blocking connect for SYNC fired the event. 11347:S 17 Feb 20:29:06.530 * Master replied to PING, replication can continue... 11347:S 17 Feb 20:29:06.532 * Partial resynchronization not possible (no cached master) 11347:S 17 Feb 20:29:06.612 * Full resync from master: e96dc8dcea06375e45f9e0796f796cb642b2a94a:243379789779 11347:S 17 Feb 20:29:36.120 * MASTER <-> SLAVE sync: receiving 1457381394 bytes from master 11347:S 17 Feb 20:29:43.069 # I/O error trying to sync with MASTER: connection lost 11347:S 17 Feb 20:29:43.191 * Connecting to MASTER 10.0.3.105:6389 11347:S 17 Feb 20:29:43.191 * MASTER <-> SLAVE sync started 11347:S 17 Feb 20:29:43.198 * Non blocking connect for SYNC fired the event. 11347:S 17 Feb 20:29:43.446 * Master replied to PING, replication can continue... 11347:S 17 Feb 20:29:43.450 * Partial resynchronization not possible (no cached master) 11347:S 17 Feb 20:29:43.532 * Full resync from master: e96dc8dcea06375e45f9e0796f796cb642b2a94a:247290941137 11347:S 17 Feb 20:30:17.763 * MASTER <-> SLAVE sync: receiving 1458314307 bytes from master 11347:S 17 Feb 20:30:20.516 # I/O error trying to sync with MASTER: connection lost 11347:S 17 Feb 20:30:21.303 * Connecting to MASTER 10.0.3.105:6389 11347:S 17 Feb 20:30:21.303 * MASTER <-> SLAVE sync started 11347:S 17 Feb 20:30:21.304 * Non blocking connect for SYNC fired the event. 11347:S 17 Feb 20:30:21.305 * Master replied to PING, replication can continue... 11347:S 17 Feb 20:30:21.307 * Partial resynchronization not possible (no cached master) 11347:S 17 Feb 20:30:21.389 * Full resync from master: e96dc8dcea06375e45f9e0796f796cb642b2a94a:251282993972 11347:S 17 Feb 20:30:55.365 * MASTER <-> SLAVE sync: receiving 1459112192 bytes from master 11347:S 17 Feb 20:31:05.299 * MASTER <-> SLAVE sync: Flushing old data 11347:S 17 Feb 20:31:20.802 * MASTER <-> SLAVE sync: Loading DB in memory 11347:S 17 Feb 20:31:41.073 * MASTER <-> SLAVE sync: Finished with success

[/simterm]

And now, when Production went back to live, let’s go deeper into the issue.

What’s going on?

Redis master-slave – the synchronization process explained

Let’s see steps on how the synchronization process is performed:

- a Slave on a start or after disconnect goes to a Master and ask to send him Master’s database

- a Master replies to this request and:

- creates a child process to create its database dump onto a filesystem as a

dump.rdbfile (see thefork()vsfork()vsclone()) - during this time Master will proceed its work with currently connected clients

- and all the data that was changed in its dataset during the dump creation will be saved to the replication buffer

- creates a child process to create its database dump onto a filesystem as a

- Master sends a notification that the dump is ready to the Slave and the Slave starts copying the dump over the network to save it on a disk of the Slave’s host

- the Slave finishes dump copy, loads it to its Redis instance memory and send a notification to the Master that the Slave is ready to serve clients

- Master in its turn checks its replication buffer and if there are any data – Master starts sending the changed data from the buffer to the Slave, so the Slave can replace the data in its memory with new, actualized, data from the Master

- the Slave applies those changes and starts its work

Thus, the replication process consists of two parts:

- at first, a full database copy is created, which may contain some outdated data (was changed during a dump creation and transferring)

- after that, a consistent update from a buffer is applied to the data on a Slave to make it the same as it is in a Master ‘s database

A database and buffer size

The first issue is that sometimes Redis needs in x3 memory comparing to its database size to create a dump, and the second – and the main issue – is that fact that while a dump will be created, and then transferred to a slave with all the network’s delays and will be saved to a Slave’s hard disk – the data on a Master can be changed.

So, during the dump transition – all such changes on a Master Redis instance will be stored in the Replication buffer. But once it will be exhausted – it will be purged and the synchronization process will start over. And over. And again.

In theory, the buffer’s size must be not less than a database’s size, although there is really little chance that all the data in the database will be updated during sync.

In any case, it’s all really rough estimations and you’ll have to test and monitor your real usage to get the real value for the buffer size.

But here is a couple of steps that can help you in such an investigation.

Check the current size of a database in a Master’s memory:

[simterm]

root@bttrm-production-console:/home/admin# redis-cli -h 10.0.3.105 -p 6389 info memory # Memory used_memory:871106648 used_memory_human:830.75M ...

[/simterm]

Create its dump:

[simterm]

root@bttrm-production-console:/home/admin# redis-cli -h 10.0.3.105 -p 6389 bgsave Background saving started

[/simterm]

Check the RDB (a dump’s) file size:

[simterm]

root@bttrm-production-console:/home/admin# ls -lh /var/lib/redis-cluster/dump.rdb -rw-rw---- 1 redis redis 345M Feb 20 15:04 /var/lib/redis-cluster/dump.rdb

[/simterm]

So, in memory we have 830 MB database, but in the configuration, we have memory limit up to the 50% of the hots’s memory:

[simterm]

root@bttrm-production-console:/home/admin# cat /etc/redis-cluster/redis-cluster.conf | grep maxmem maxmemory 50% maxmemory-policy allkeys-lru

[/simterm]

So, the database can grow up to the ~16 GB (the server has 32 GB mem in total), and after a dump will be created – its size will be about 7-8 GB.

Now, we need to get a rough estimation time of the network transfer process, and knowing the maximal file size to be transferred – let’s calculate the time needed to finish it.

To check the connection speed – the iperf tool can be used, install it on a Slave and Master:

[simterm]

root@bttrm-production-console:/home/admin# apt -y install iperf3

[/simterm]

On the Master host – run iperf in the server’s mode:

[simterm]

root@bttrm-production-console:/home/admin# iperf3 -s ----------------------------------------------------------- Server listening on 5201 ----------------------------------------------------------- ...

[/simterm]

Позже наткнулся на redis-cli --latency -h <Redis IP> -p <Redis port>

And run it from the Slave as a client:

[simterm]

root@bttrm-production-app-1:/home/admin# iperf3 -c 10.0.3.105 Connecting to host 10.0.3.105, port 5201 [ 4] local 10.0.3.71 port 47046 connected to 10.0.3.105 port 5201 [ ID] Interval Transfer Bandwidth Retr Cwnd [ 4] 0.00-1.00 sec 585 MBytes 4.90 Gbits/sec 0 1.37 MBytes ... [ 4] 9.00-10.00 sec 592 MBytes 4.97 Gbits/sec 0 2.06 MBytes - - - - - - - - - - - - - - - - - - - - - - - - - [ ID] Interval Transfer Bandwidth Retr [ 4] 0.00-10.00 sec 5.78 GBytes 4.96 Gbits/sec 0 sender [ 4] 0.00-10.00 sec 5.78 GBytes 4.96 Gbits/sec receiver iperf Done.

[/simterm]

4.90 Gbits/sec – 625 MBytes/sec.

Knowing the fact that the dump’s size will be about 7 GB we can use the calculator here>>> – 13 seconds to transfer it.

Now, using the --stat we can check how many keys are updated on the Master in one second:

[simterm]

root@bttrm-production-app-1:/home/admin# redis-cli -h 10.0.3.105 -p 6389 --stat ------- data ------ --------------------- load -------------------- - child - keys mem clients blocked requests connections 530178 765.42M 9 0 91699673 (+0) 2856563 530407 766.04M 16 0 91700194 (+521) 2856580 ...

[/simterm]

530178 – 530407 == 229 keys per second.

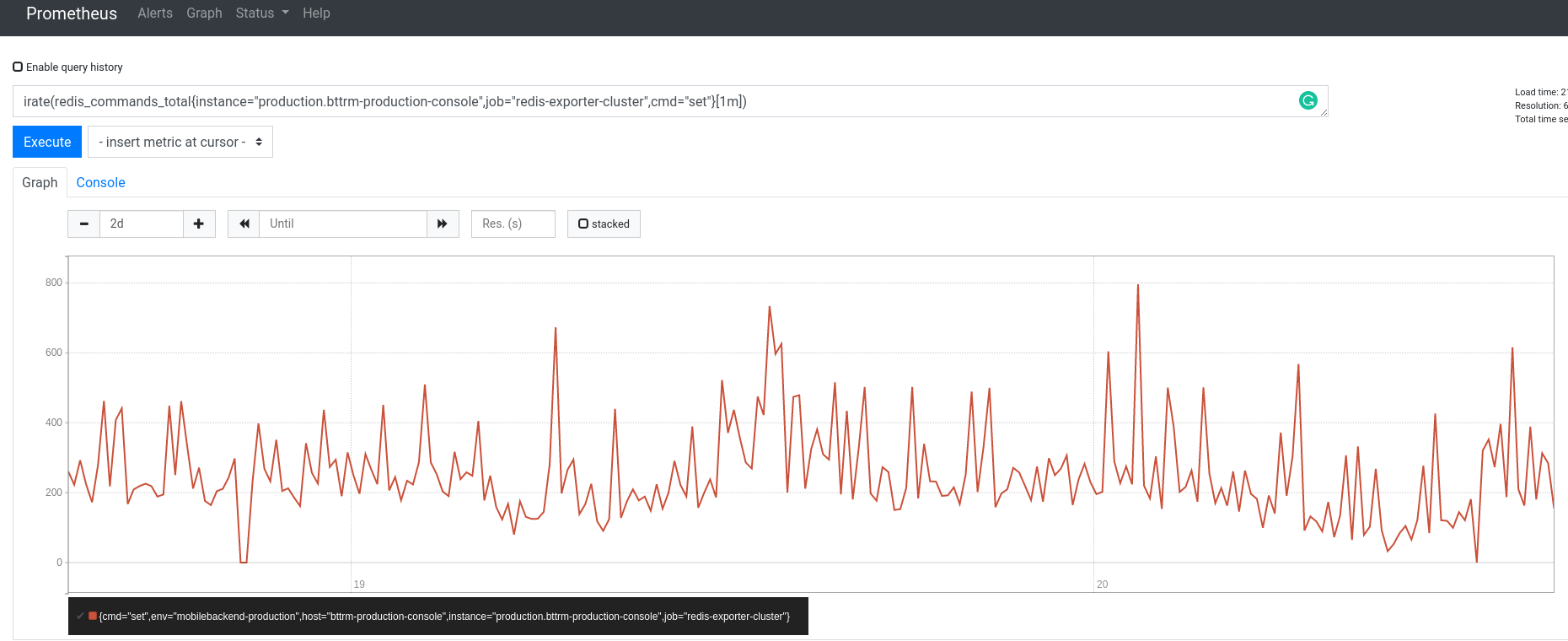

Or you can use monitoring tools like Prometheus and redis-exporter, and then select all the SET-requests as this are the main update’s requests:

irate(redis_commands_total{instance="production.bttrm-production-console",job="redis-exporter-cluster",cmd="set"}[1m])

And find keys size:

[simterm]

root@bttrm-production-app-1:/home/admin# redis-cli -h 10.0.3.105 -p 6389 --bigkeys ... [00.00%] Biggest string found so far 'projectname_8caf094272eac1c6a51213c1c878767b' with 45 bytes ... [00.00%] Biggest string found so far 'projectname_44bb0e2dd7f4fabf41ee09001791bdc4' with 4626843 bytes ... Biggest string found 'projectname_44bb0e2dd7f4fabf41ee09001791bdc4' has 4626843 bytes

[/simterm]

229 by the ~4.5 MB == around 1 GB changed data will be added to the buffer during those 13 seconds for the transfer.

But, again – this is very rough calculations as we are counting operations during a normal workload, while sometimes it can be in a few times bigger.

Useful links

- Redis Clients Handling

- Client Buffers-Before taking Redis in production check this!

- Top Redis Headaches for Devops – Replication Buffer

- Redis latency problems troubleshooting

- The Endless Redis Replication Loop: What, Why and How to Solve It (

slave-output-buffer-throttlingwasn’t added to Redis, but the post is really interesting) - 6 Crucial Redis Monitoring Metrics You Need To Watch

- How to collect Redis metrics

- How to Benchmark the Performance of a Redis Server

- Some interesting redis-cli commands and usages

![]()