The first part – Redis: replication, part 1 – overview. Replication vs Sharding. Sentinel vs Cluster. Redis topology.

The next part – Redis: replication, part 3 – redis-py and work with Redis Sentinel from Python.

The whole story started when we decided to get rid of memcached.

Currently, we have memcached and Redis running on our backend servers.

Both memcached and Redis instances work as standalone applications, i.e. they are not connected in any kind of replication, and this leads to a problem:

- we have three backend hosts which are hosted behind an AWS Application Load Balancer

- the ALB has Sticky Sessions enabled, but they rely on cookies, which are ignored by our mobile applications (iOS/Android)

- as a result, when a client makes a request to the backend, it can sometimes get cached data which was already removed or updated in Redis or memcached on another backend host

We have had this scheme since we migrated our backend application from an old infrastructure where only one host was used, and we still have had no time to update it, although it has been in our plans for a long time.

Currently, to work around these issues we have a bunch of “hacks” on the backend which make additional checks to ensure data is up to date, and now, to get rid of them, we decided to:

- get rid of memcached altogether, as Redis can be used for the functions where memcached is used now

- configure Redis replication over all hosts

Such a setup will be described in the post below.

The first example is a basic Master-Slave replication, and the second one is the Sentinel setup and configuration.

AWS EC2 instances with Debian 9 will be used here.

To work with Redis hosts three domain names will be used – redis-0.setevoy.org.ua for a master, redis-1.setevoy.org.ua and redis-2.setevoy.org.ua for its two slaves.

A minimal setup needs only one slave, but as the second example here will use Sentinel, let’s have three nodes from the beginning.

The basic Master-Slave replication

In this setup, slaves are read-only replicas of the master, keeping a copy of the data added to the master.

The master sends all data updates to its slaves: new keys, key expirations, etc.

If the link between the master and a slave is broken, the slave will try to reconnect to the master and perform a partial synchronization to fetch the data starting from the point where the previous sync was interrupted.

If such a partial sync is not possible, the slave will ask the master for a full synchronization: the master will create a full snapshot of its data and send it to this slave, after which the usual sync will be restored.
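The choice between a partial and a full sync can be sketched in a few lines of Python. This is a simplified model rather than Redis's actual code: the `Backlog` class, the `choose_sync` function and their parameters are hypothetical names, and the assumption is that the master keeps a bounded window of recent updates and can only continue from an offset that is still inside it.

```python
from dataclasses import dataclass


@dataclass
class Backlog:
    """Simplified view of the master's replication backlog (hypothetical model)."""
    first_byte_offset: int  # offset of the oldest byte still kept
    histlen: int            # number of bytes currently kept


def choose_sync(master_id: str, backlog: Backlog,
                replica_master_id: str, replica_offset: int) -> str:
    """Return "partial" or "full" for a reconnecting replica."""
    # A replica that last synced with a different master cannot continue.
    if replica_master_id != master_id:
        return "full"
    # The replica needs the stream starting right after its own offset,
    # and that byte must still be inside the backlog window.
    wanted = replica_offset + 1
    last_kept = backlog.first_byte_offset + backlog.histlen
    if backlog.histlen == 0:
        return "full"
    if wanted < backlog.first_byte_offset or wanted > last_kept:
        return "full"
    return "partial"


backlog = Backlog(first_byte_offset=100, histlen=50)
print(choose_sync("abc", backlog, "abc", 120))  # short disconnect
print(choose_sync("abc", backlog, "abc", 10))   # replica fell too far behind
```

The second call models a replica that was offline long enough for its offset to fall out of the backlog, which is the case when the full snapshot is needed.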

A couple of notes here to keep in mind:

- one master can have multiple slaves

- slaves can accept connections from other slaves, making a kind of “cascade” of replicated nodes – a master on the top, slave(s) in the middle and slave(s) at the bottom

- it’s strongly recommended to enable data persistence on the master to avoid data loss – see the Safety of replication when master has persistence turned off

- slave will work in the read-only mode by default, see the Read-only slave

Redis Master configuration

Install Redis:

[simterm]

root@redis-0:/home/admin# apt -y install redis-server

[/simterm]

Edit /etc/redis/redis.conf and in the bind parameter set the interfaces to listen on:

... bind 0.0.0.0 ...

You can specify multiple IPs here, separated by spaces:

... bind 127.0.0.1 18.194.229.23 ...

Other valuable options here:

- port 6379 – clear enough, but keep it in mind

- slave-read-only yes – slaves will work in read-only mode; doesn’t affect the master node

- requirepass foobared – password for master authorization

- appendonly yes and appendfilename "appendonly.aof" – decrease the chance of data loss, see Redis Persistence

Restart the service:

[simterm]

root@redis-0:/home/admin# systemctl restart redis

[/simterm]

Check it using -a for the password:

[simterm]

root@redis-0:/home/admin# redis-cli -a foobared ping
PONG

[/simterm]

Check data replication status:

[simterm]

root@redis-0:/home/admin# redis-cli -a foobared info replication
# Replication
role:master
connected_slaves:0
master_repl_offset:0
repl_backlog_active:0
repl_backlog_size:1048576
repl_backlog_first_byte_offset:0
repl_backlog_histlen:0

[/simterm]
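If this status needs to be checked from a script, the `info replication` output is easy to parse, since every field is a `key:value` line. Below is a minimal sketch: the `parse_info` helper is a hypothetical name, and the sample text is the output shown above.

```python
def parse_info(text: str) -> dict:
    """Parse `redis-cli info <section>` output into a dict of strings."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and section headers such as "# Replication".
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition(":")
        fields[key] = value
    return fields


sample = """# Replication
role:master
connected_slaves:0
master_repl_offset:0
repl_backlog_active:0
repl_backlog_size:1048576
repl_backlog_first_byte_offset:0
repl_backlog_histlen:0
"""

info = parse_info(sample)
print(info["role"], info["connected_slaves"])
```

All values stay strings here; convert them with `int()` where a numeric comparison is needed.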

Add some new data:

[simterm]

root@redis-0:/home/admin# redis-cli -a foobared set test 'test'
OK

[/simterm]

Get it back:

[simterm]

root@redis-0:/home/admin# redis-cli -a foobared get test
"test"

[/simterm]

Okay – everything works here.

Redis Slave configuration

On the two remaining hosts, configure the slaves.

The configuration is the same for both – just repeat it.

Install Redis:

[simterm]

root@redis-1:/home/admin# apt -y install redis-server

[/simterm]

Edit the /etc/redis/redis.conf:

...

slaveof redis-0.setevoy.org.ua 6379

...

masterauth foobared

...

requirepass foobared

...

Here:

- slaveof – the master’s host and port

- masterauth – the master’s password

- requirepass – the password for this replica

Restart the service:

[simterm]

root@redis-1:/home/admin# systemctl restart redis

[/simterm]

Check its status:

[simterm]

root@redis-1:/home/admin# redis-cli -a foobared info replication
# Replication
role:slave
master_host:redis-0.setevoy.org.ua
master_port:6379
master_link_status:up
master_last_io_seconds_ago:5
master_sync_in_progress:0
...

[/simterm]

Check log:

[simterm]

root@redis-1:/home/admin# tail -f /var/log/redis/redis-server.log
16961:S 29 Mar 10:54:36.263 * Connecting to MASTER redis-0.setevoy.org.ua:6379
16961:S 29 Mar 10:54:36.308 * MASTER <-> SLAVE sync started
16961:S 29 Mar 10:54:36.309 * Non blocking connect for SYNC fired the event.
16961:S 29 Mar 10:54:36.309 * Master replied to PING, replication can continue...
16961:S 29 Mar 10:54:36.310 * Partial resynchronization not possible (no cached master)
16961:S 29 Mar 10:54:36.311 * Full resync from master: 93585eeb7e32c0550c35f8d4935c9a18c4177ab9:1
16961:S 29 Mar 10:54:36.383 * MASTER <-> SLAVE sync: receiving 92 bytes from master
16961:S 29 Mar 10:54:36.383 * MASTER <-> SLAVE sync: Flushing old data
16961:S 29 Mar 10:54:36.383 * MASTER <-> SLAVE sync: Loading DB in memory
16961:S 29 Mar 10:54:36.383 * MASTER <-> SLAVE sync: Finished with success

[/simterm]

The connection to the master is established and the synchronization is done – okay, check the data:

[simterm]

root@redis-1:/home/admin# redis-cli -a foobared get test
"test"

[/simterm]

The data is present – everything works here as well.

Changing Slave => Master roles

If the master goes down, you have to switch one of the slaves to become the new master.

If you try to add any data on a current slave, Redis will raise an error, as the slaves are in read-only mode:

... slave-read-only yes ...

Try to add something:

[simterm]

root@redis-1:/home/admin# redis-cli -a foobared set test2 'test2'
(error) READONLY You can't write against a read only slave.

[/simterm]

Now connect to the slave:

[simterm]

root@redis-1:/home/admin# redis-cli

[/simterm]

Authorize:

[simterm]

127.0.0.1:6379> auth foobared
OK

[/simterm]

Disable the slave-role:

[simterm]

127.0.0.1:6379> slaveof no one
OK

[/simterm]

Check its status now:

[simterm]

127.0.0.1:6379> info replication
# Replication
role:master
connected_slaves:0
master_repl_offset:1989
repl_backlog_active:0
repl_backlog_size:1048576

[/simterm]

Add a new key one more time:

[simterm]

127.0.0.1:6379> set test2 'test2'
OK

[/simterm]

And get it back:

[simterm]

127.0.0.1:6379> get test2
"test2"

[/simterm]

Keep in mind that as we made those changes directly on the running Redis node, after a restart it will become a slave again, as the slaveof parameter is still set in its /etc/redis/redis.conf file.
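To make a manual promotion survive a restart, the slaveof directive has to be removed from the config file as well (running CONFIG REWRITE on the promoted node is another option). A minimal sketch of the first approach is below; `drop_slaveof` is a hypothetical helper that only transforms the config text, so reading and writing the real /etc/redis/redis.conf is left to the caller.

```python
def drop_slaveof(conf_text: str) -> str:
    """Comment out every active `slaveof` directive in a redis.conf text."""
    out = []
    for line in conf_text.splitlines():
        words = line.split()
        # Directive names are matched case-insensitively;
        # lines that are already commented out start with "#" and are kept.
        if words and words[0].lower() == "slaveof":
            out.append("# " + line)
        else:
            out.append(line)
    return "\n".join(out) + "\n"


conf = (
    "bind 0.0.0.0\n"
    "slaveof redis-0.setevoy.org.ua 6379\n"
    "masterauth foobared\n"
)
print(drop_slaveof(conf))
```

Commenting the line out instead of deleting it keeps the old master address at hand in case the node has to be turned back into a slave later.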

Redis Sentinel

Now let’s add Sentinel to our replication setup: it will monitor the Redis nodes and switch their roles automatically.

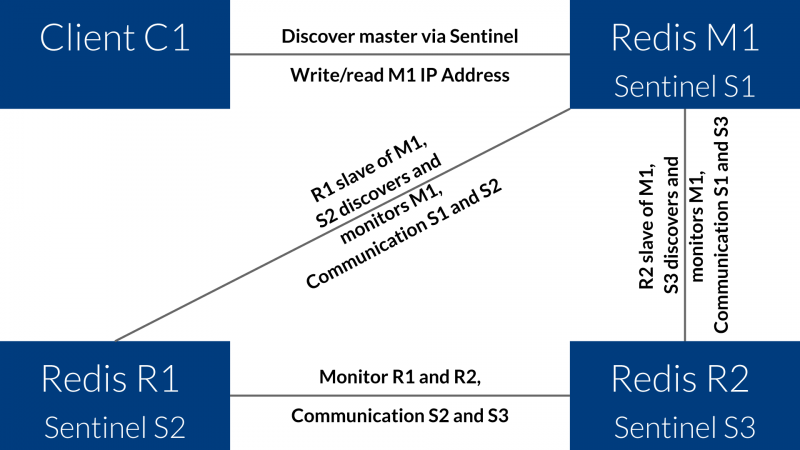

The overall scheme will be next:

Here:

- M1 = Master

- R1 = Replica 1 / Slave 1

- R2 = Replica 2 / Slave 2

- S1 = Sentinel 1

- S2 = Sentinel 2

- S3 = Sentinel 3

M1 and S1 – will be on the redis-0, R1 and S2 – on the redis-1, R2 and S3 – on the redis-2.

Running Sentinel

To run a Sentinel daemon, the same redis-server binary can be used, just with a separate config – /etc/redis/sentinel.conf.

First, let’s create such a config file on the Redis Master host:

sentinel monitor redis-test redis-0.setevoy.org.ua 6379 2

sentinel down-after-milliseconds redis-test 6001

sentinel failover-timeout redis-test 60000

sentinel parallel-syncs redis-test 1

bind 0.0.0.0

sentinel auth-pass redis-test foobared

Here:

- monitor – the address of the master node to be monitored; the 2 is the quorum, i.e. the number of Sentinel instances which must agree that the master is down

- down-after-milliseconds – time after which an unresponsive master will be considered down

- failover-timeout – time to wait after changing slave=>master roles

- parallel-syncs – the number of slaves which can be synchronized with the new master simultaneously after the master has changed
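The role of the quorum value can be illustrated with a small sketch. It assumes the behaviour described in the Redis Sentinel documentation: the quorum is used to mark the master as objectively down, while the failover itself additionally needs a leader elected by a majority of all Sentinels. The function names are hypothetical.

```python
def is_objectively_down(sdown_votes: int, quorum: int) -> bool:
    """The master is ODOWN once at least `quorum` Sentinels see it as down."""
    return sdown_votes >= quorum


def can_authorize_failover(votes_for_leader: int, total_sentinels: int,
                           quorum: int) -> bool:
    """The leader needs a majority of all Sentinels and at least `quorum` votes."""
    majority = total_sentinels // 2 + 1
    return votes_for_leader >= max(majority, quorum)


# Three Sentinels with quorum 2, as in the config above.
print(is_objectively_down(sdown_votes=2, quorum=2))
print(can_authorize_failover(votes_for_leader=2, total_sentinels=3, quorum=2))
# With only one Sentinel reachable nothing can be decided.
print(can_authorize_failover(votes_for_leader=1, total_sentinels=3, quorum=2))
```

This is why three Sentinels with a quorum of 2 tolerate the loss of one host: the two remaining instances still form both the quorum and the majority.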

Run it:

[simterm]

root@redis-0:/home/admin# redis-server /etc/redis/sentinel.conf --sentinel
10447:X 29 Mar 14:15:53.192 * Increased maximum number of open files to 10032 (it was originally set to 1024).
                _._
           _.-``__ ''-._
      _.-``    `.  `_.  ''-._           Redis 3.2.6 (00000000/0) 64 bit
  .-`` .-```.  ```\/    _.,_ ''-._
 (    '      ,       .-`  | `,    )     Running in sentinel mode
 |`-._`-...-` __...-.``-._|'` _.-'|     Port: 26379
 |    `-._   `._    /     _.-'    |     PID: 10447
  `-._    `-._  `-./  _.-'    _.-'
 |`-._`-._    `-.__.-'    _.-'_.-'|
 |    `-._`-._        _.-'_.-'    |           http://redis.io
  `-._    `-._`-.__.-'_.-'    _.-'
 |`-._`-._    `-.__.-'    _.-'_.-'|
 |    `-._`-._        _.-'_.-'    |
  `-._    `-._`-.__.-'_.-'    _.-'
      `-._    `-.__.-'    _.-'
          `-._        _.-'
              `-.__.-'

10447:X 29 Mar 14:15:53.193 # WARNING: The TCP backlog setting of 511 cannot be enforced because /proc/sys/net/core/somaxconn is set to the lower value of 128.
10447:X 29 Mar 14:15:53.195 # Sentinel ID is e9fb72c8edb8ec2028e6ce820b9e72e56e07cf1e
10447:X 29 Mar 14:15:53.195 # +monitor master redis-test 35.158.154.25 6379 quorum 2
10447:X 29 Mar 14:15:53.196 * +slave slave 3.121.223.95:6379 3.121.223.95 6379 @ redis-test 35.158.154.25 6379
10447:X 29 Mar 14:16:43.402 * +slave slave 18.194.45.17:6379 18.194.45.17 6379 @ redis-test 35.158.154.25 6379

[/simterm]

Check Sentinel’s status using the 26379 port:

[simterm]

root@redis-0:/home/admin# redis-cli -p 26379 info sentinel
# Sentinel
sentinel_masters:1
sentinel_tilt:0
sentinel_running_scripts:0
sentinel_scripts_queue_length:0
sentinel_simulate_failure_flags:0
master0:name=redis-test,status=ok,address=35.158.154.25:6379,slaves=2,sentinels=1

[/simterm]

Here:

- master0:name=redis-test,status=ok – the master is up

- slaves=2 – it has two slaves

- sentinels=1 – only one Sentinel instance is running for now
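The master0 line packs several fields into one comma-separated string, which is handy to split when monitoring the setup from a script. A minimal sketch, where `parse_master_line` is a hypothetical helper and the sample line is taken from the output above:

```python
def parse_master_line(line: str) -> dict:
    """Parse a `masterN:name=...,status=...` line from `info sentinel`."""
    # Split on the first colon only: the address field contains one too.
    _, _, body = line.partition(":")
    fields = {}
    for pair in body.split(","):
        key, _, value = pair.partition("=")
        fields[key] = value
    # Numeric counters are easier to compare as integers.
    for key in ("slaves", "sentinels"):
        fields[key] = int(fields[key])
    return fields


line = ("master0:name=redis-test,status=ok,"
        "address=35.158.154.25:6379,slaves=2,sentinels=1")
master = parse_master_line(line)
print(master["name"], master["status"], master["sentinels"])
```

A check such as `master["sentinels"] >= 3` could then be used to alert when a Sentinel instance disappears.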

You can get some basic information here, for example – the master’s IP:

[simterm]

root@redis-0:/home/admin# redis-cli -p 26379 sentinel get-master-addr-by-name redis-test
1) "35.158.154.25"
2) "6379"

[/simterm]

Now repeat the Sentinel start on both slave nodes using the same config as on the master, and in the Sentinel’s log on the master you should see the new instances connected:

[simterm]

...
10447:X 29 Mar 14:18:40.437 * +sentinel sentinel fdc750c7d6388a6142d9e27b68172f5846e75d8c 172.31.36.239 26379 @ redis-test 35.158.154.25 6379
10447:X 29 Mar 14:18:42.725 * +sentinel sentinel ecddb26cd27c9a17c4251078c977761faa7a3250 172.31.35.218 26379 @ redis-test 35.158.154.25 6379
...

[/simterm]

Check status again:

[simterm]

root@redis-0:/home/admin# redis-cli -p 26379 info sentinel
# Sentinel
sentinel_masters:1
sentinel_tilt:0
sentinel_running_scripts:0
sentinel_scripts_queue_length:0
sentinel_simulate_failure_flags:0
master0:name=redis-test,status=ok,address=18.194.229.23:6379,slaves=2,sentinels=3

[/simterm]

sentinels=3 – okay.

Also, Sentinel will perform its own settings updates when needed:

[simterm]

root@redis-1:/home/admin# cat /etc/redis/sentinel.conf
sentinel myid fdc750c7d6388a6142d9e27b68172f5846e75d8c
sentinel monitor redis-test 35.158.154.25 6379 2
sentinel down-after-milliseconds redis-test 6001
bind 0.0.0.0
sentinel failover-timeout redis-test 60000
# Generated by CONFIG REWRITE
port 26379
dir "/home/admin"
sentinel auth-pass redis-test foobared
sentinel config-epoch redis-test 0
sentinel leader-epoch redis-test 0
sentinel known-slave redis-test 18.194.45.17 6379
sentinel known-slave redis-test 3.121.223.95 6379
sentinel known-sentinel redis-test 172.31.35.218 26379 ecddb26cd27c9a17c4251078c977761faa7a3250
sentinel known-sentinel redis-test 172.31.47.184 26379 e9fb72c8edb8ec2028e6ce820b9e72e56e07cf1e
sentinel current-epoch 0

[/simterm]

Here the sentinel myid fdc750c7d6388a6142d9e27b68172f5846e75d8c line was added, along with the whole block after # Generated by CONFIG REWRITE.

Redis Sentinel Automatic Failover

Now let’s check what happens if the master goes down.

You can simulate this manually by calling kill -9, or by using redis-cli to send either the DEBUG sleep command with a time in seconds to make the master unresponsive, or the DEBUG SEGFAULT command to crash it.

[simterm]

root@redis-0:/home/admin# redis-cli -a foobared DEBUG sleep 30

[/simterm]

The Sentinel’s log on the master:

[simterm]

...
10447:X 29 Mar 14:24:56.549 # +sdown master redis-test 35.158.154.25 6379
10447:X 29 Mar 14:24:56.614 # +new-epoch 1
10447:X 29 Mar 14:24:56.615 # +vote-for-leader ecddb26cd27c9a17c4251078c977761faa7a3250 1
10447:X 29 Mar 14:24:56.649 # +odown master redis-test 35.158.154.25 6379 #quorum 3/2
10447:X 29 Mar 14:24:56.649 # Next failover delay: I will not start a failover before Fri Mar 29 14:26:57 2019
10447:X 29 Mar 14:24:57.686 # +config-update-from sentinel ecddb26cd27c9a17c4251078c977761faa7a3250 172.31.35.218 26379 @ redis-test 35.158.154.25 6379
10447:X 29 Mar 14:24:57.686 # +switch-master redis-test 35.158.154.25 6379 3.121.223.95 6379
10447:X 29 Mar 14:24:57.686 * +slave slave 18.194.45.17:6379 18.194.45.17 6379 @ redis-test 3.121.223.95 6379
10447:X 29 Mar 14:24:57.686 * +slave slave 35.158.154.25:6379 35.158.154.25 6379 @ redis-test 3.121.223.95 6379
10447:X 29 Mar 14:25:03.724 # +sdown slave 35.158.154.25:6379 35.158.154.25 6379 @ redis-test 3.121.223.95 6379
...

[/simterm]

Currently, we are interested in those two lines here:

[simterm]

...
10384:X 29 Mar 14:24:57.686 # +config-update-from sentinel ecddb26cd27c9a17c4251078c977761faa7a3250 172.31.35.218 26379 @ redis-test 35.158.154.25 6379
10384:X 29 Mar 14:24:57.686 # +switch-master redis-test 35.158.154.25 6379 3.121.223.95 6379
...

[/simterm]

Sentinel performed the slave-to-master reconfiguration.

35.158.154.25 is the old master, which is down now, and 3.121.223.95 is the new master, elected from the slaves – it’s running on the redis-1 host.
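The +switch-master event has a fixed layout (master name, old IP and port, new IP and port), so it can be picked out of the Sentinel log with a regular expression. A minimal sketch, assuming the log format shown above; `parse_switch_master` is a hypothetical helper name.

```python
import re

# +switch-master <master name> <old ip> <old port> <new ip> <new port>
SWITCH_MASTER = re.compile(
    r"\+switch-master (?P<name>\S+) "
    r"(?P<old_ip>\S+) (?P<old_port>\d+) "
    r"(?P<new_ip>\S+) (?P<new_port>\d+)"
)


def parse_switch_master(log_line: str):
    """Return the old and new master addresses, or None for other events."""
    match = SWITCH_MASTER.search(log_line)
    if match is None:
        return None
    return {
        "name": match["name"],
        "old_master": (match["old_ip"], int(match["old_port"])),
        "new_master": (match["new_ip"], int(match["new_port"])),
    }


line = ("10447:X 29 Mar 14:24:57.686 # +switch-master redis-test "
        "35.158.154.25 6379 3.121.223.95 6379")
event = parse_switch_master(line)
print(event["old_master"], "->", event["new_master"])
```

Lines with other events, such as +sdown or +odown, simply return None and can be skipped.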

Try adding data here:

[simterm]

root@redis-1:/home/admin# redis-cli -a foobared set test3 'test3' OK

[/simterm]

While a similar attempt on the old master which became a slave now will lead to an error:

[simterm]

root@redis-0:/home/admin# redis-cli -a foobared set test4 'test4'
(error) READONLY You can't write against a read only slave.

[/simterm]

Now let’s kill the node completely and see what Sentinel will do:

[simterm]

root@redis-0:/home/admin# redis-cli -a foobared DEBUG SEGFAULT
Error: Server closed the connection

[/simterm]

Log:

[simterm]

... 10447:X 29 Mar 14:26:21.897 * +reboot slave 35.158.154.25:6379 35.158.154.25 6379 @ redis-test 3.121.223.95 6379

[/simterm]

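Well – Sentinel noticed that the node was restarted: the +reboot event is only a notification, and the restart itself was done outside of Sentinel (most likely by the service manager).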

Sentinel commands

| Command | Description |
|---|---|
| sentinel masters | list all masters and their statuses |
| sentinel master | one master’s status |
| sentinel slaves | list all slaves and their statuses |
| sentinel sentinels | list all Sentinel instances and their statuses |
| sentinel failover | run failover manually |
| sentinel flushconfig | force Sentinel to rewrite its configuration on disk |
| sentinel monitor | add a new master |
| sentinel remove | remove master from being watched |

Related links

- Redis Replication

- Sentinel configuration file example

- Redis Sentinel — High Availability: Everything you need to know from DEV to PROD: Complete Guide

- Redis Sentinel: Make your dataset highly available

- How to run Redis Sentinel
