Let’s continue with AWS CDK and Python. I’m not writing this because I like CDK, but because there are rather few examples of AWS CDK with Python on the Internet, so let them at least be here.

So, we have a cluster (see AWS: CDK and Python – building an EKS cluster, and general impressions of CDK) and a couple of controllers (see AWS: CDK and Python – configure an IAM OIDC Provider, and install Kubernetes Controllers). Everything seemed to be ready, so I started installing a VictoriaMetrics chart, and everything worked except for the VMSingle Pod, which hung in the Pending status.

“VolumeBinding”: binding volumes: timed out waiting for the condition

Let’s check the Events of this Pod:

[simterm]

...
Events:
  Type     Reason            Age   From               Message
  ----     ------            ----  ----               -------
  Warning  FailedScheduling  10m   default-scheduler  running PreBind plugin "VolumeBinding": binding volumes: timed out waiting for the condition

[/simterm]

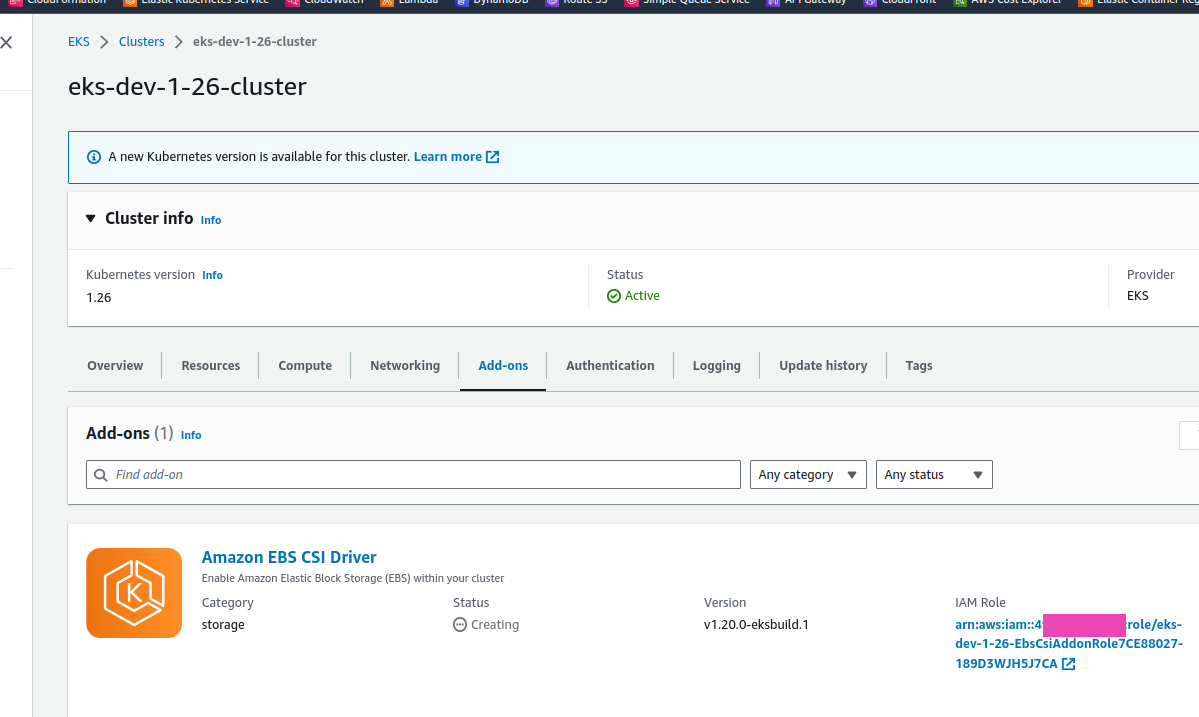

A quick search led me to a question on StackOverflow, which reminded me of EKS Add-ons, and in particular of the EBS CSI driver, which should create an EBS volume when a PersistentVolumeClaim appears.

So today we’ll look at how to add add-ons to a cluster with the AWS CDK.

Actually, it’s quite simple. The only thing I had to google was how to use CfnAddon, and this time the documentation was found quickly, and even with examples in Python, not TypeScript.

IAM Role for EBS CSI driver

We already have an OIDC Provider, see AWS: EKS, OpenID Connect, and ServiceAccounts.

For the driver, we will also use IRSA. So we need to describe an IAM Role that the driver’s ServiceAccount can assume, and attach an AWS Managed Policy to it with iam.ManagedPolicy.from_aws_managed_policy_name():

...
# Create an IAM Role to be assumed by the EBS CSI driver's ServiceAccount
ebs_csi_addon_role = iam.Role(
    self,
    'EbsCsiAddonRole',
    # for Role's Trust relationships
    assumed_by=iam.FederatedPrincipal(
        federated=oidc_provider_arn,
        conditions={
            'StringEquals': {
                f'{oidc_provider_url.replace("https://", "")}:sub': 'system:serviceaccount:kube-system:ebs-csi-controller-sa'
            }
        },
        assume_role_action='sts:AssumeRoleWithWebIdentity'
    )
)
# Attach the AWS Managed Policy for the EBS CSI driver
ebs_csi_addon_role.add_managed_policy(iam.ManagedPolicy.from_aws_managed_policy_name("service-role/AmazonEBSCSIDriverPolicy"))
...

In from_aws_managed_policy_name(), specify the name together with its path prefix, as “service-role/ManagedPolicyName”.
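To make the Trust relationships condition of the role easier to read, here is a minimal sketch of how its `StringEquals` key and value are put together. The helper name `irsa_trust_condition` and the issuer URL are hypothetical and used only for illustration; the namespace and ServiceAccount name are the ones used for the role in this post.

```python
import json


def irsa_trust_condition(oidc_provider_url: str, namespace: str, service_account: str) -> dict:
    """Build the StringEquals condition for an IRSA trust policy."""
    # The condition key uses the OIDC issuer without the "https://" scheme
    issuer = oidc_provider_url.replace("https://", "", 1)
    return {
        "StringEquals": {
            # Only this ServiceAccount in this namespace may assume the role
            f"{issuer}:sub": f"system:serviceaccount:{namespace}:{service_account}",
        }
    }


# Hypothetical issuer URL, for illustration only
condition = irsa_trust_condition(
    "https://oidc.eks.us-east-1.amazonaws.com/id/EXAMPLE",
    "kube-system",
    "ebs-csi-controller-sa",
)
print(json.dumps(condition, indent=2))
```

The resulting dict has the same shape as the `conditions` argument passed to iam.FederatedPrincipal, so a helper like this could be reused if more IRSA roles are added later.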

CfnAddon for EBS CSI driver

Find the current version of the driver for the cluster’s Kubernetes version. We have EKS version 1.26, because the CDK still does not support 1.27:

[simterm]

$ aws eks describe-addon-versions --addon-name aws-ebs-csi-driver --kubernetes-version 1.26 --query "addons[].addonVersions[].[addonVersion, compatibilities[].defaultVersion]" --output text
v1.20.0-eksbuild.1
True
...

[/simterm]
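The same lookup could be done from Python. Below is a small sketch that picks the default version out of a response shaped like the one describe-addon-versions returns. The helper name `default_addon_version` and the second version entry in the sample are made up for illustration. With boto3, the real response would presumably come from `boto3.client("eks").describe_addon_versions(addonName="aws-ebs-csi-driver", kubernetesVersion="1.26")`.

```python
from typing import Optional


def default_addon_version(response: dict) -> Optional[str]:
    """Return the first add-on version flagged as default, or None."""
    for addon in response.get("addons", []):
        for version in addon.get("addonVersions", []):
            compatibilities = version.get("compatibilities", [])
            if any(c.get("defaultVersion") for c in compatibilities):
                return version["addonVersion"]
    return None


# Sample data shaped like the API response; the second entry is hypothetical
sample = {
    "addons": [
        {
            "addonName": "aws-ebs-csi-driver",
            "addonVersions": [
                {
                    "addonVersion": "v1.20.0-eksbuild.1",
                    "compatibilities": [{"clusterVersion": "1.26", "defaultVersion": True}],
                },
                {
                    "addonVersion": "v1.19.0-eksbuild.2",
                    "compatibilities": [{"clusterVersion": "1.26", "defaultVersion": False}],
                },
            ],
        }
    ]
}

print(default_addon_version(sample))  # v1.20.0-eksbuild.1
```

The returned string is the kind of value that goes into the addon_version parameter of CfnAddon.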

Then describe the add-on itself with CfnAddon: specify the cluster name, the add-on version, and the ServiceAccount’s IAM Role ARN taken from the ebs_csi_addon_role created above:

...
# Add EBS CSI add-on
ebs_csi_addon = eks.CfnAddon(
    self,
    "EbsCsiAddonSa",
    addon_name="aws-ebs-csi-driver",
    cluster_name=cluster_name,
    resolve_conflicts="OVERWRITE",
    addon_version="v1.20.0-eksbuild.1",
    service_account_role_arn=ebs_csi_addon_role.role_arn
)
...

Deploy, and check the Pods:

[simterm]

$ kk -n kube-system get pod | grep csi
ebs-csi-controller-896d87c6b-7rv9z   6/6   Running   0   9m59s
ebs-csi-controller-896d87c6b-v7xg7   6/6   Running   0   9m59s
ebs-csi-node-2zwnr                   3/3   Running   0   9m59s
ebs-csi-node-pt5zs                   3/3   Running   0   9m59s

[/simterm]

And now we have the PVC for VictoriaMetrics in the Bound status:

[simterm]

$ kk -n dev-monitoring-ns get pvc
NAME                                  STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
vmsingle-victoria-metrics-k8s-stack   Bound    pvc-151a631b-f6de-4567-8baa-97adb4e04a87   20Gi       RWO            gp2            91m

[/simterm]

And the VMSingle Pod is now in the Running status:

[simterm]

$ kk -n dev-monitoring-ns get po | grep vmsingle
vmsingle-victoria-metrics-k8s-stack-f7794d779-n6sc7   1/1   Running   0   28m

[/simterm]

Done.
