We have an AWS Elastic Kubernetes Service cluster with a few WorkerNode Groups that were created as AWS AutoScaling Groups with eksctl; see AWS Elastic Kubernetes Service: a cluster creation automation, part 2 – Ansible, eksctl for more details.

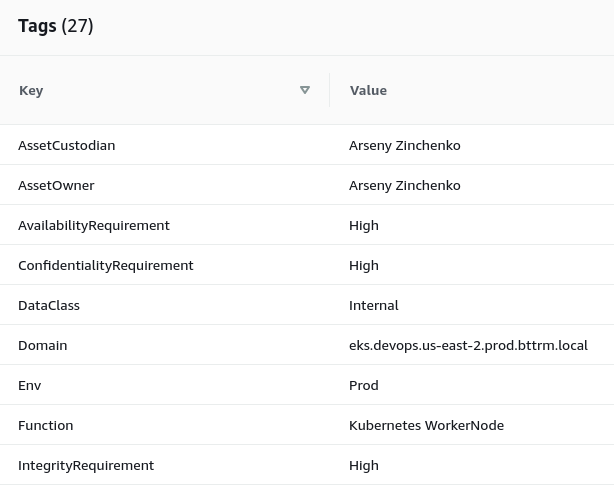

The eksctl configuration of a WorkerNode Group includes a set of Tags that our team uses for the AWS inventory:

---
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: "{{ eks_cluster_name }}"
  region: "{{ region }}"
  version: "{{ k8s_version }}"
nodeGroups:
  ### Common ###
  - name: "{{ k8s_common_nodegroup_name }}-{{ item }}-v2021-09"
    instanceType: "{{ k8s_common_nodegroup_instance_type }}"
    privateNetworking: true
    labels:
      role: common-workers
    ...
    tags:
      Tier: "Devops"
      Domain: "eks.devops.{{ region }}.{{ env | lower }}.bttrm.local"
      ServiceType: "EC2"
      Env: {{ env }}
      Function: "Kubernetes WorkerNode"
      NetworkType: "Private"
      DataClass: "Public"
      AssetOwner: "{{ asset_owner }}"
      AssetCustodian: "{{ asset_custodian }}"
      OperatingSystem: "Amazon Linux"
      JiraTicket: "{{ jira_ticket }}"
      ConfidentialityRequirement: "Med"
      IntegrityRequirement: "Med"
      AvailabilityRequirement: "Med"
...

Those tags are applied to the AutoScaling Groups, and then to the EC2 instances launched from each AutoScaling Group.

The problem is that these tags are not copied to the Elastic Block Store volumes attached to those EC2 instances.

Besides the Kubernetes WorkerNodes, our AWS account also has ordinary EC2 instances. Some of them have only one root device, while others have additional disks for data or backups.

In addition to copying tags from the EC2 instance, I also want to add a dedicated tag describing a disk’s function: Root Volume, Data Volume, or Kubernetes PVC Volume.

How can we do it? Like almost anything in AWS that is not covered by the AWS Console, with the AWS Lambda service: let’s create a function that is triggered when a new EC2 instance is launched and copies that instance’s Tags to all EBS volumes attached to it.

So, what logic do we need to put in this AWS Lambda function?

- when a new EC2 is created, trigger a Lambda function

- the function will take an EC2 ID and find all related EBS volumes

- it will copy AWS Tags from this EC2 to all its EBS volumes

- it will add a new Tag named Role:

  - if an EBS was created from a Kubernetes PVC and is attached to an EC2 launched from a Kubernetes WorkerNode AWS AutoScale Group, then we will set the tag Role: "PvcDisk"

  - if an EBS was created during a common EC2 creation, then we will check its device name and decide which value to use: Role: "RootDisk" or Role: "DataDisk"

Let’s go.

Python script: copy AWS Tags

First, let’s write a Python script and test it; we will move on to AWS Lambda in the second part of this post.

For now, the script processes every EC2 instance in a region. When we adapt it for a Lambda function, we will make a quick update so that it works with a single, specific EC2 ID instead.

boto3: getting a list of EC2 instances and their EBS volumes

The very first thing is to authenticate in an AWS account, get all EC2 instances in a specific region, and then get a list of EBS volumes attached to each EC2.

Then, having this information, we can play with their Tags.

The script:

#!/usr/bin/env python

import os
import boto3

# Credentials and region are read from the environment for local runs
ec2 = boto3.resource('ec2',
    region_name=os.getenv("AWS_DEFAULT_REGION"),
    aws_access_key_id=os.getenv("AWS_ACCESS_KEY_ID"),
    aws_secret_access_key=os.getenv("AWS_SECRET_ACCESS_KEY")
)

def lambda_handler(event, context):

    base = ec2.instances.all()

    # Print every instance in the region and the volumes attached to it
    for instance in base:
        print("\n[DEBUG] EC2\n\t\tID: " + str(instance))
        print("\tEBS")
        for vol in instance.volumes.all():
            vol_id = str(vol)
            print("\t\tID: " + vol_id)

if __name__ == "__main__":
    lambda_handler(0, 0)

Here, the ec2 variable holds a boto3.resource object of the ec2 type, authenticated in the AWS account with the $AWS_ACCESS_KEY_ID and $AWS_SECRET_ACCESS_KEY variables. Later, in our Lambda, authentication and authorization will be done with an AWS IAM Role.

At the end of the script, we call the lambda_handler() function if the script was executed as a standalone program; see Python: what is the if __name__ == “__main__” ? for details.

In lambda_handler() we call the ec2.instances.all() method to get all instances in a region, and then, in the for loop, call instance.volumes.all() for every EC2 to get the list of EBS volumes attached to it.

For now, the arguments to lambda_handler() are passed as “0, 0”; later, in Lambda, it will receive the event and context objects.
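To give an idea of what the event object could look like later, here is a minimal sketch. It assumes the function will be triggered by an EventBridge “EC2 Instance State-change Notification” event, which carries the instance ID under detail.instance-id; the actual trigger is set up in the second part and may differ. The instance_id_from_event() helper is hypothetical and is not part of the script above.

```python
# Hypothetical helper, not part of the original script.
# Assumption: the event follows the EventBridge
# "EC2 Instance State-change Notification" shape.
def instance_id_from_event(event):
    """Return the EC2 instance ID carried by the event, or None."""
    if not isinstance(event, dict):
        # Local runs call lambda_handler(0, 0), so there is no payload.
        return None
    return event.get("detail", {}).get("instance-id")


# Illustrative payload; only the fields used here are shown.
sample_event = {
    "detail-type": "EC2 Instance State-change Notification",
    "detail": {"instance-id": "i-0df2fe9ec4b5e1855", "state": "running"},
}

print(instance_id_from_event(sample_event))
print(instance_id_from_event(0))
```

Returning None for a non-dict event keeps the local “0, 0” call working, so the same handler could fall back to processing all instances when no ID is given.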

Set the AWS authentication variables:

[simterm]

$ export AWS_ACCESS_KEY_ID=AKI***D4Q
$ export AWS_SECRET_ACCESS_KEY=QUC***BTI
$ export AWS_DEFAULT_REGION=eu-west-3

[/simterm]

Run the script:

[simterm]

$ ./ec2_tags.py

[DEBUG] EC2
        ID: ec2.Instance(id='i-0df2fe9ec4b5e1855')
    EBS
        ID: ec2.Volume(id='vol-0d11fd27f3702a0fc')

[DEBUG] EC2
        ID: ec2.Instance(id='i-023529a843d02f680')
    EBS
        ID: ec2.Volume(id='vol-0f3548ae321cd040c')

[DEBUG] EC2
        ID: ec2.Instance(id='i-02ab1438a79a3e475')
    EBS
        ID: ec2.Volume(id='vol-09b6f60396e56c363')
        ID: ec2.Volume(id='vol-0d75c44a594e312a1')

...

[/simterm]

Good, it’s working! We’ve got all EC2 instances in the eu-west-3 AWS Region, and for every EC2, the list of its attached EBS volumes.

What’s next? We need to determine how a volume is attached to an EC2 instance: knowing its device name, we can tell whether the disk is a root volume or an additional data volume.

This can be done with the volume’s attachments attribute, a list in which each entry has a Device key.
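As an illustration, the sketch below works on plain data shaped like one entry of that list, so it runs without AWS access. The values are made up for the example, and the device_of() helper is hypothetical.

```python
# Example data shaped like vol.attachments; the values are illustrative.
sample_attachments = [
    {
        "Device": "/dev/xvda",
        "InstanceId": "i-0df2fe9ec4b5e1855",
        "State": "attached",
        "VolumeId": "vol-0d11fd27f3702a0fc",
        "DeleteOnTermination": True,
    }
]


# Hypothetical helper, not part of the original script.
def device_of(attachments):
    """Return the device name of the first attachment, or None if there is none."""
    if not attachments:
        return None
    return attachments[0].get("Device")


print(device_of(sample_attachments))
print(device_of([]))
```

Volumes returned by instance.volumes.all() are attached to that instance, so indexing the first entry directly, as the script does, should be fine there; the None branch only matters if the helper is reused for detached volumes.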

Set a new variable in the script called device_id:

...
        for vol in instance.volumes.all():
            vol_id = str(vol)
            device_id = "ec2.vol.Device('" + str(vol.attachments[0]['Device']) + "')"
            print("\t\tID: " + vol_id + "\n\t\tDev: " + device_id + "\n")
...

Run the script again:

[simterm]

$ ./ec2_tags.py

[DEBUG] EC2
        ID: ec2.Instance(id='i-0df2fe9ec4b5e1855')
    EBS
        ID: ec2.Volume(id='vol-0d11fd27f3702a0fc')
        Dev: ec2.vol.Device('/dev/xvda')

[DEBUG] EC2
        ID: ec2.Instance(id='i-023529a843d02f680')
    EBS
        ID: ec2.Volume(id='vol-0f3548ae321cd040c')
        Dev: ec2.vol.Device('/dev/xvda')

[DEBUG] EC2
        ID: ec2.Instance(id='i-02ab1438a79a3e475')
    EBS
        ID: ec2.Volume(id='vol-09b6f60396e56c363')
        Dev: ec2.vol.Device('/dev/xvda')

        ID: ec2.Volume(id='vol-0d75c44a594e312a1')
        Dev: ec2.vol.Device('/dev/xvdbm')

...

[/simterm]

Here we have an EC2 instance with the ID i-02ab1438a79a3e475 that has two EBS volumes attached: vol-09b6f60396e56c363 as /dev/xvda, and vol-0d75c44a594e312a1 as /dev/xvdbm.

/dev/xvda is obviously the root volume, and /dev/xvdbm is an additional data volume.
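The root device is /dev/xvda on our instances, but other AMIs may use a different name, for example /dev/sda1. As a hedged sketch, the comparison can take the root device name as a parameter instead of hard-coding it; with boto3, the value could come from the instance’s root_device_name attribute. The is_root_device() helper below is hypothetical.

```python
# Hypothetical helper, not part of the original script.
def is_root_device(device, root_device_name="/dev/xvda"):
    """Tell whether a volume's device name is the instance's root device."""
    return device == root_device_name


# Devices from the run above
print(is_root_device("/dev/xvda"))
print(is_root_device("/dev/xvdbm"))

# An instance whose root device is named differently (assumed example)
print(is_root_device("/dev/sda1", root_device_name="/dev/sda1"))
```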

boto3: adding AWS Tags to an EBS

Now, let’s create a Role Tag that will hold one of the following values:

- if an EBS has a Tag with the kubernetes.io/created-for/pvc/name key, then set Role: "PvcDisk"

- if it is not a PVC volume, then check its device name, and if device == "/dev/xvda", then set Role: "RootDisk"

- finally, if the device variable has any other value, then mark the EBS with Role: "DataDisk"

To do this, let’s add another function that will be used to set Tags, and another small function called is_pvc(), that will check if an EBS has the kubernetes.io/created-for/pvc/name Tag:

...
def is_pvc(vol):

    try:
        for tag in vol.tags:
            if tag['Key'] == 'kubernetes.io/created-for/pvc/name':
                return True
    except TypeError:
        # vol.tags is None when the volume has no tags at all
        return False

    # the volume has tags, but none of them is the PVC tag
    return False

def set_role_tag(vol):

    device = vol.attachments[0]['Device']
    tags_list = []
    values = {}

    if is_pvc(vol):
        values['Key'] = "Role"
        values['Value'] = "PvcDisk"
        tags_list.append(values)
    elif device == "/dev/xvda":
        values['Key'] = "Role"
        values['Value'] = "RootDisk"
        tags_list.append(values)
    else:
        values['Key'] = "Role"
        values['Value'] = "DataDisk"
        tags_list.append(values)

    return tags_list
...

Here, in the set_role_tag() function, we first pass vol as an argument to the is_pvc() function, which checks the volume’s Tags for the ‘kubernetes.io/created-for/pvc/name’ key. The try/except there handles the case when an EBS has no tags at all.

If the ‘kubernetes.io/created-for/pvc/name’ tag is found, then is_pvc() returns True; if not, False.

Then, in the if/elif/else conditions, we check whether the EBS is a PVC volume, and if so, it is tagged with Role: "PvcDisk". If not, we check its device name: if it is attached as “/dev/xvda”, we set Role: "RootDisk"; otherwise, we use Role: "DataDisk".
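The same decision can be sketched as a pure function that takes plain tag lists instead of boto3 objects, which makes it easy to check without touching AWS. This is only an illustration of the logic described above; role_for() and its root_device parameter are hypothetical names.

```python
PVC_TAG_KEY = "kubernetes.io/created-for/pvc/name"


# Hypothetical helper, not part of the original script.
def role_for(volume_tags, device, root_device="/dev/xvda"):
    """Pick the Role value for a volume from its tags and device name."""
    # volume_tags may be None when the volume has no tags at all
    keys = {tag["Key"] for tag in volume_tags or []}
    if PVC_TAG_KEY in keys:
        return "PvcDisk"
    if device == root_device:
        return "RootDisk"
    return "DataDisk"


# Illustrative inputs: a PVC volume, an untagged root volume, a data volume
pvc_tags = [{"Key": PVC_TAG_KEY, "Value": "example-pvc"}]
print(role_for(pvc_tags, "/dev/xvdbm"))
print(role_for(None, "/dev/xvda"))
print(role_for([{"Key": "Name", "Value": "data"}], "/dev/xvdb"))
```

Note that the PVC check comes first, so a volume carrying the PVC tag is marked as PvcDisk regardless of its device name, the same as in set_role_tag().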

Add the set_role_tag() call to the lambda_handler() as an argument to the vol.create_tags() function:

...
def lambda_handler(event, context):

    base = ec2.instances.all()

    for instance in base:
        print("\n[DEBUG] EC2\n\t\tID: " + str(instance))
        print("\tEBS")
        for vol in instance.volumes.all():
            vol_id = str(vol)
            device_id = "ec2.vol.Device('" + str(vol.attachments[0]['Device']) + "')"
            print("\t\tID: " + vol_id + "\n\t\tDev: " + device_id)
            # Tag the volume with its Role and print the created tags
            role_tag = vol.create_tags(Tags=set_role_tag(vol))
            print("\t\tTags set:\n\t\t\t" + str(role_tag))
...

Run the script again:

[simterm]

$ ./ec2_tags.py

[DEBUG] EC2
        ID: ec2.Instance(id='i-0df2fe9ec4b5e1855')
    EBS
        ID: ec2.Volume(id='vol-0d11fd27f3702a0fc')
        Dev: ec2.vol.Device('/dev/xvda')
        Tags set:
            [ec2.Tag(resource_id='vol-0d11fd27f3702a0fc', key='Role', value='RootDisk')]

...

[DEBUG] EC2
        ID: ec2.Instance(id='i-02ab1438a79a3e475')
    EBS
        ID: ec2.Volume(id='vol-09b6f60396e56c363')
        Dev: ec2.vol.Device('/dev/xvda')
        Tags set:
            [ec2.Tag(resource_id='vol-09b6f60396e56c363', key='Role', value='RootDisk')]
        ID: ec2.Volume(id='vol-0d75c44a594e312a1')
        Dev: ec2.vol.Device('/dev/xvdbm')
        Tags set:
            [ec2.Tag(resource_id='vol-0d75c44a594e312a1', key='Role', value='PvcDisk')]

[/simterm]

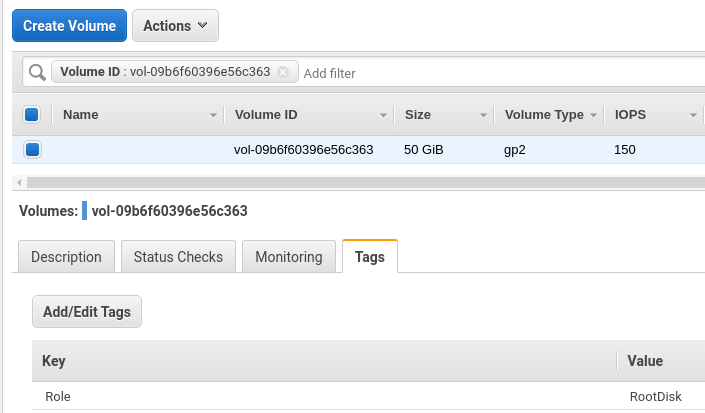

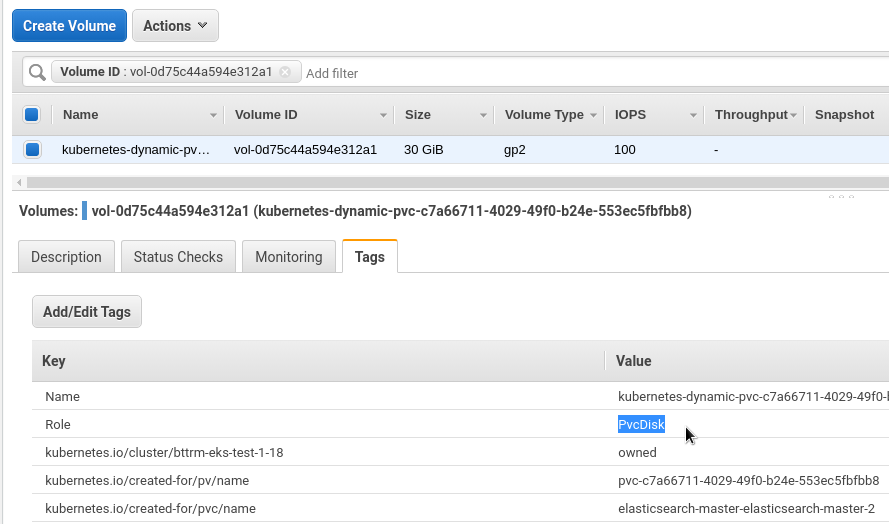

Let’s check the volumes of the i-02ab1438a79a3e475 EC2 instance.

Its root volume vol-09b6f60396e56c363:

And a Kubernetes PVC – vol-0d75c44a594e312a1:

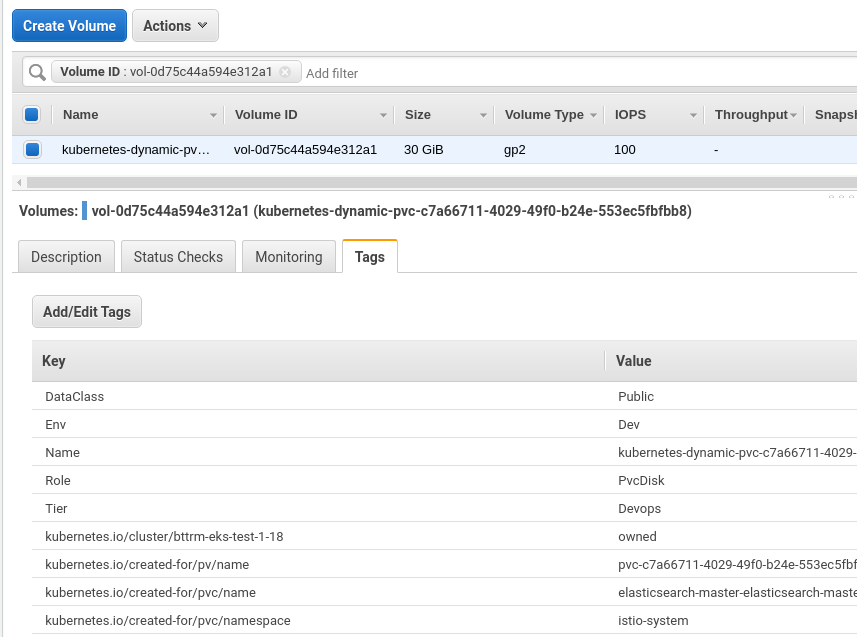

Good, we’ve added the Role tag creation, and now we need to add the ability to copy AWS Tags from the EC2 to its EBS volumes.

boto3: copy AWS Tags from an EC2 to its EBS

Copying tags can be moved to a dedicated function too. Let’s name it copy_ec2_tags(); it accepts one argument, the EC2 instance object whose tags we want to copy:

...
def copy_ec2_tags(instance):

    tags_list = []

    # Keep only the instance tags that we want to see on the volumes
    for instance_tag in instance.tags:
        if instance_tag['Key'] == 'Env':
            tags_list.append(instance_tag)
        elif instance_tag['Key'] == 'Tier':
            tags_list.append(instance_tag)
        elif instance_tag['Key'] == 'DataClass':
            tags_list.append(instance_tag)

    return tags_list
...

In the function, we loop over all tags of the instance, and if a tag’s key is one of the three specified in the function, the tag is appended to the tags_list list, which is later passed to vol.create_tags().
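The same selection can also be written with a set of allowed keys, which may be easier to extend than a chain of if/elif branches. The sketch below works on plain tag lists, so it runs without AWS access; select_tags() and KEYS_TO_COPY are hypothetical names, and the sample tags are made up.

```python
# Assumption: the allow-list mirrors the three keys used in copy_ec2_tags()
KEYS_TO_COPY = {"Env", "Tier", "DataClass"}


# Hypothetical helper, not part of the original script.
def select_tags(instance_tags, keys=KEYS_TO_COPY):
    """Return only the tags whose key is in the allow-list."""
    # instance_tags may be None when the instance has no tags at all
    return [tag for tag in instance_tags or [] if tag["Key"] in keys]


# Illustrative instance tags, including one with the reserved "aws:" prefix
sample_tags = [
    {"Key": "Env", "Value": "Dev"},
    {"Key": "aws:autoscaling:groupName", "Value": "example-asg"},
    {"Key": "Tier", "Value": "Devops"},
    {"Key": "Name", "Value": "example-instance"},
]

print(select_tags(sample_tags))
print(select_tags(None))
```

An allow-list also keeps tags with the aws: prefix out of the result; those keys are reserved by AWS and cannot be set by users, so copying every instance tag blindly would not work for instances launched from an AutoScale Group.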

Add copy_ec2_tags() execution to the lambda_handler():

...
def lambda_handler(event, context):

    base = ec2.instances.all()

    for instance in base:
        print("\n[DEBUG] EC2\n\t\tID: " + str(instance))
        print("\tEBS")
        for vol in instance.volumes.all():
            vol_id = str(vol)
            device_id = "ec2.vol.Device('" + str(vol.attachments[0]['Device']) + "')"
            print("\t\tID: " + vol_id + "\n\t\tDev: " + device_id)
            role_tag = vol.create_tags(Tags=set_role_tag(vol))
            # Copy the selected EC2 tags to the volume
            ec2_tags = vol.create_tags(Tags=copy_ec2_tags(instance))
            print("\t\tTags set:\n\t\t\t" + str(role_tag) + "\n\t\t\t" + str(ec2_tags))
...

Run:

[simterm]

$ ./ec2_tags.py

[DEBUG] EC2
        ID: ec2.Instance(id='i-0df2fe9ec4b5e1855')
    EBS
        ID: ec2.Volume(id='vol-0d11fd27f3702a0fc')
        Dev: ec2.vol.Device('/dev/xvda')
        Tags set:
            [ec2.Tag(resource_id='vol-0d11fd27f3702a0fc', key='Role', value='RootDisk')]
            [ec2.Tag(resource_id='vol-0d11fd27f3702a0fc', key='DataClass', value='Public'), ec2.Tag(resource_id='vol-0d11fd27f3702a0fc', key='Env', value='Dev'), ec2.Tag(resource_id='vol-0d11fd27f3702a0fc', key='Tier', value='Devops')]

...

[DEBUG] EC2
        ID: ec2.Instance(id='i-02ab1438a79a3e475')
    EBS
        ID: ec2.Volume(id='vol-09b6f60396e56c363')
        Dev: ec2.vol.Device('/dev/xvda')
        Tags set:
            [ec2.Tag(resource_id='vol-09b6f60396e56c363', key='Role', value='RootDisk')]
            [ec2.Tag(resource_id='vol-09b6f60396e56c363', key='Env', value='Dev'), ec2.Tag(resource_id='vol-09b6f60396e56c363', key='DataClass', value='Public'), ec2.Tag(resource_id='vol-09b6f60396e56c363', key='Tier', value='Devops')]
        ID: ec2.Volume(id='vol-0d75c44a594e312a1')
        Dev: ec2.vol.Device('/dev/xvdbm')
        Tags set:
            [ec2.Tag(resource_id='vol-0d75c44a594e312a1', key='Role', value='PvcDisk')]
            [ec2.Tag(resource_id='vol-0d75c44a594e312a1', key='Env', value='Dev'), ec2.Tag(resource_id='vol-0d75c44a594e312a1', key='DataClass', value='Public'), ec2.Tag(resource_id='vol-0d75c44a594e312a1', key='Tier', value='Devops')]

...

[/simterm]

And check:

The whole script now looks like this:

#!/usr/bin/env python

import os
import boto3

# Credentials and region are read from the environment for local runs
ec2 = boto3.resource('ec2',
    region_name=os.getenv("AWS_DEFAULT_REGION"),
    aws_access_key_id=os.getenv("AWS_ACCESS_KEY_ID"),
    aws_secret_access_key=os.getenv("AWS_SECRET_ACCESS_KEY")
)

def lambda_handler(event, context):

    base = ec2.instances.all()

    for instance in base:
        print("[DEBUG] EC2\n\t\tID: " + str(instance))
        print("\tEBS")
        for vol in instance.volumes.all():
            vol_id = str(vol)
            device_id = "ec2.vol.Device('" + str(vol.attachments[0]['Device']) + "')"
            print("\t\tID: " + vol_id + "\n\t\tDev: " + device_id)
            role_tag = vol.create_tags(Tags=set_role_tag(vol))
            ec2_tags = vol.create_tags(Tags=copy_ec2_tags(instance))
            print("\t\tTags set:\n\t\t\t" + str(role_tag) + "\n\t\t\t" + str(ec2_tags) + "\n")

def is_pvc(vol):

    try:
        for tag in vol.tags:
            if tag['Key'] == 'kubernetes.io/created-for/pvc/name':
                return True
    except TypeError:
        # vol.tags is None when the volume has no tags at all
        return False

    # the volume has tags, but none of them is the PVC tag
    return False

def set_role_tag(vol):

    device = vol.attachments[0]['Device']
    tags_list = []
    values = {}

    if is_pvc(vol):
        values['Key'] = "Role"
        values['Value'] = "PvcDisk"
        tags_list.append(values)
    elif device == "/dev/xvda":
        values['Key'] = "Role"
        values['Value'] = "RootDisk"
        tags_list.append(values)
    else:
        values['Key'] = "Role"
        values['Value'] = "DataDisk"
        tags_list.append(values)

    return tags_list

def copy_ec2_tags(instance):

    tags_list = []

    # Keep only the instance tags that we want to see on the volumes
    for instance_tag in instance.tags:
        if instance_tag['Key'] == 'Env':
            tags_list.append(instance_tag)
        elif instance_tag['Key'] == 'Tier':
            tags_list.append(instance_tag)
        elif instance_tag['Key'] == 'DataClass':
            tags_list.append(instance_tag)
        elif instance_tag['Key'] == 'JiraTicket':
            tags_list.append(instance_tag)

    return tags_list

if __name__ == "__main__":
    lambda_handler(0, 0)
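The handler above calls vol.create_tags() twice for every volume. As an optional variation, the Role tag and the copied EC2 tags could be built as one list and sent in a single call. The sketch below shows only the list-building part on plain data; build_volume_tags() and its parameters are hypothetical names, not part of the script.

```python
PVC_TAG_KEY = "kubernetes.io/created-for/pvc/name"
KEYS_TO_COPY = {"Env", "Tier", "DataClass", "JiraTicket"}


# Hypothetical helper, not part of the original script.
def build_volume_tags(instance_tags, volume_tags, device, root_device="/dev/xvda"):
    """Return the Role tag plus the copied EC2 tags as one list."""
    # Both tag lists may be None when the resource has no tags at all
    volume_keys = {tag["Key"] for tag in volume_tags or []}
    if PVC_TAG_KEY in volume_keys:
        role = "PvcDisk"
    elif device == root_device:
        role = "RootDisk"
    else:
        role = "DataDisk"

    tags = [{"Key": "Role", "Value": role}]
    tags.extend(tag for tag in instance_tags or [] if tag["Key"] in KEYS_TO_COPY)
    return tags


# Illustrative instance tags
instance_tags = [
    {"Key": "Env", "Value": "Dev"},
    {"Key": "Tier", "Value": "Devops"},
    {"Key": "Name", "Value": "example-instance"},
]

print(build_volume_tags(instance_tags, None, "/dev/xvda"))
```

In the handler, this would be used as vol.create_tags(Tags=build_volume_tags(instance.tags, vol.tags, vol.attachments[0]['Device'])). Because the Role tag is always present, the list is never empty, even for an instance that has none of the copied keys.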

We are done here and can now proceed with the AWS Lambda function. See the next part in the AWS: Lambda – copy EC2 tags to its EBS, part 2 – create a Lambda function post.
