An application, cluster, or repository can be created in ArgoCD from its WebUI, from the CLI, or by writing a Kubernetes manifest that can then be passed to kubectl to create the resources.

For example, Applications are Kubernetes custom resources, defined by the applications.argoproj.io CRD:

[simterm]

$ kubectl get crd applications.argoproj.io
NAME                       CREATED AT
applications.argoproj.io   2020-11-27T15:55:29Z

[/simterm]

They are accessible in the ArgoCD namespace like any other Kubernetes resource:

[simterm]

$ kubectl -n dev-1-18-devops-argocd-ns get applications
NAME                              SYNC STATUS   HEALTH STATUS
backend-app                       OutOfSync     Missing
dev-1-18-web-payment-service-ns   Synced        Healthy
web-fe-github-actions             Synced        Healthy

[/simterm]

This approach makes it possible to create all necessary applications as soon as a new ArgoCD instance is created. For example, when we upgrade the Kubernetes version on our AWS Elastic Kubernetes Service, we create a new cluster with Jenkins, Ansible, and Helm (see AWS Elastic Kubernetes Service: a cluster creation automation, part 1 – CloudFormation and AWS Elastic Kubernetes Service: a cluster creation automation, part 2 – Ansible, eksctl for more details), with all necessary controllers and operators installed, including ArgoCD.

With the declarative approach, all ArgoCD applications and other settings can also be created while the new EKS cluster is being provisioned.

So, our task, for now, is to create:

- Projects – with roles and namespace permissions for the Backend and Web teams

- Applications – for our Backend and Web projects

- Repositories – set up authentication for GitHub repositories

First, let’s see how it works in general by creating the manifests and resources manually. Then we will move this into the Ansible role and create a Jenkins job.

Projects

Documentation – Projects.

We need two projects for our two teams, Backend and Web. Each project must restrict which Namespaces can be used and must have a role configured for access to the applications in that project.

Create a new file with the Backend’s Project:

apiVersion: argoproj.io/v1alpha1
kind: AppProject
metadata:
  name: backend
  namespace: dev-1-18-devops-argocd-ns
  finalizers:
    - resources-finalizer.argocd.argoproj.io
spec:
  description: "Backend project"
  sourceRepos:
    - '*'
  destinations:
    - namespace: 'dev-1-18-backend-*'
      server: https://kubernetes.default.svc
  clusterResourceWhitelist:
    - group: ''
      kind: Namespace
  namespaceResourceWhitelist:
    - group: "*"
      kind: "*"
  roles:
    - name: "backend-app-admin"
      description: "Backend team's deployment role"
      policies:
        - p, proj:backend:backend-app-admin, applications, *, backend/*, allow
      groups:
        - "Backend"
  orphanedResources:
    warn: true

Here:

- sourceRepos: allow deploying to the project from any repository

- destinations: the cluster and the namespaces in it that the project is allowed to deploy to. In the example above we use the dev-1-18-backend-* mask, so the Backend team can deploy to any namespace whose name matches the mask; for the Web project we will use a similar mask, dev-1-18-web-*

- clusterResourceWhitelist: at the cluster level, allow creating Namespaces only

- namespaceResourceWhitelist: at the Namespace level, allow creating any resources

- roles: create a backend-app-admin role with full access to the applications in this project. See more at ArgoCD: users, access, and RBAC and ArgoCD: Okta integration, and user groups

- orphanedResources: enable warnings about stale resources, see Orphaned Resources Monitoring
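To make the destinations mask more concrete, here is a minimal shell sketch that checks namespace names against the dev-1-18-backend-* pattern with ordinary glob matching. It only approximates how ArgoCD evaluates project destinations; the ns_allowed helper and the sample namespace names are made up for illustration.

```shell
#!/bin/sh
# Sketch only: approximates the AppProject "destinations" namespace mask
# with shell glob matching. ns_allowed is a hypothetical helper,
# not an ArgoCD or kubectl command.
ns_allowed() {
  case "$1" in
    dev-1-18-backend-*) echo "allowed" ;;
    *)                  echo "denied" ;;
  esac
}

ns_allowed dev-1-18-backend-test-ns   # prints "allowed"
ns_allowed dev-1-18-web-test-ns       # prints "denied"
```

An Application in the backend project that targets a namespace outside the mask would be expected to be rejected by ArgoCD in the same way.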

Deploy it:

[simterm]

$ kubectl apply -f project.yaml
appproject.argoproj.io/backend created

[/simterm]

And check:

[simterm]

$ argocd proj get backend
Name:                             backend
Description:                      Backend project
Destinations:                     <none>
Repositories:                     *
Whitelisted Cluster Resources:    /Namespace
Blacklisted Namespaced Resources: <none>
Signature keys:                   <none>
Orphaned Resources:               enabled (warn=true)

[/simterm]

Applications

Documentation – Applications.

Next, let’s add a manifest for a test application in the Backend project:

apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: backend-app
  namespace: dev-1-18-devops-argocd-ns
  finalizers:
    - resources-finalizer.argocd.argoproj.io
spec:
  project: "backend"
  source:
    repoURL: https://github.com/projectname/devops-kubernetes.git
    targetRevision: DVPS-967-ArgoCD-declarative-bootstrap
    path: tests/test-svc
    helm:
      parameters:
        - name: serviceType
          value: LoadBalancer
  destination:
    server: https://kubernetes.default.svc
    namespace: dev-1-18-backend-argocdapp-test-ns
  syncPolicy:
    automated:
      prune: false
      selfHeal: false
      allowEmpty: false
    syncOptions:
      - Validate=true
      - CreateNamespace=true
      - PrunePropagationPolicy=foreground
      - PruneLast=true
    retry:
      limit: 5
      backoff:
        duration: 5s
        factor: 2
        maxDuration: 3m

Here:

- finalizers: set to delete all Kubernetes resources when the application is deleted from ArgoCD (cascade deletion, see App Deletion)

- project: the project to add the application to, which sets the limits for the application (namespaces, resources, etc)

- source: the repository to deploy from, and the branch

  - helm.parameters: values for the Helm chart (helm --set)

- destination: the cluster and namespace to deploy to. The Namespace here must be allowed in the Project that the application belongs to

- syncPolicy: synchronization settings, see Automated Sync Policy and Sync Options
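The retry block in the manifest above is easier to read with the numbers written out. The sketch below prints the wait before each retry for limit: 5, duration: 5s, factor: 2, and maxDuration: 3m. It assumes the delay before retry n is duration × factor^(n−1), capped at maxDuration; retry_delays is a hypothetical helper, not part of the argocd CLI.

```shell
#!/bin/sh
# Sketch only: prints the delay before each sync retry, assuming
# delay(n) = duration * factor^(n-1), capped at maxDuration.
# retry_delays is a hypothetical helper, not part of the argocd CLI.
retry_delays() {
  limit=$1; delay=$2; factor=$3; max=$4   # durations are in seconds
  n=1
  while [ "$n" -le "$limit" ]; do
    if [ "$delay" -gt "$max" ]; then
      delay=$max                          # never wait longer than maxDuration
    fi
    echo "retry $n: wait ${delay}s"
    delay=$((delay * factor))
    n=$((n + 1))
  done
}

# Values from the manifest: limit 5, duration 5s, factor 2, maxDuration 3m (180s)
retry_delays 5 5 2 180
```

Under that assumption the five retries wait 5, 10, 20, 40, and 80 seconds, so the 3-minute cap would only come into play with a higher limit.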

Also, ArgoCD supports the so-called App of Apps pattern, where you create a manifest for an application that in turn creates a set of other applications. We will not use it (yet), but it looks interesting, see Cluster Bootstrapping.

Deploy the application:

[simterm]

$ kubectl apply -f application.yaml
application.argoproj.io/backend-app created

[/simterm]

Check it:

[simterm]

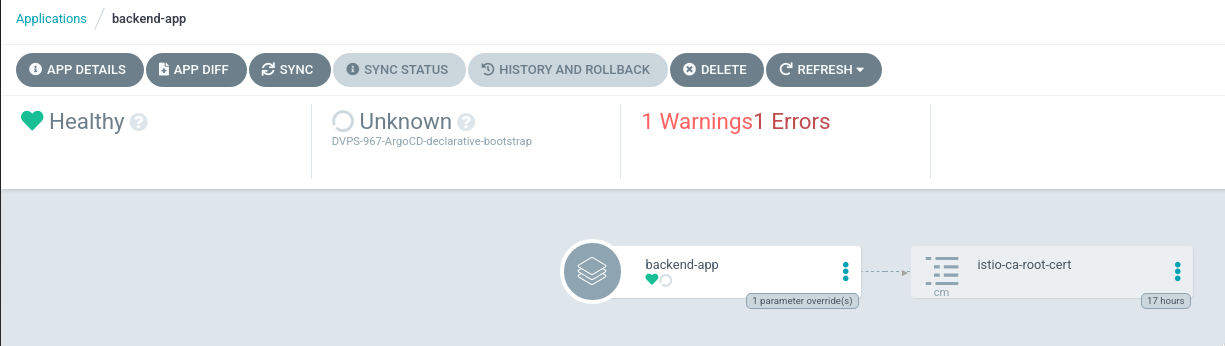

$ kubectl -n dev-1-18-devops-argocd-ns get application backend-app NAME SYNC STATUS HEALTH STATUS backend-app Unknown Healthy

[/simterm]

And its status:

[simterm]

$ argocd app get backend-app
Name:               backend-app
Project:            backend
Server:             https://kubernetes.default.svc
Namespace:          dev-1-18-backend-argocdapp-test-ns
URL:                https://dev-1-18.argocd.example.com/applications/backend-app
Repo:               https://github.com/projectname/devops-kubernetes.git
Target:             DVPS-967-ArgoCD-declarative-bootstrap
Path:               tests/test-svc
SyncWindow:         Sync Allowed
Sync Policy:        Automated
Sync Status:        Unknown
Health Status:      Healthy

CONDITION                MESSAGE                                                    LAST TRANSITION
ComparisonError          rpc error: code = Unknown desc = authentication required   2021-05-18 09:11:13 +0300 EEST
OrphanedResourceWarning  Application has 1 orphaned resources                       2021-05-18 09:11:13 +0300 EEST

[/simterm]

Here we get the “ComparisonError: authentication required” condition, because the repository is private and ArgoCD cannot connect to it.

Let’s move on to repository authentication.

Repositories

Documentation – Repositories.

Surprisingly, repositories are added to the argocd-cm ConfigMap instead of being a dedicated resource like Applications or Projects.

In my opinion, this is not a great solution: if a developer wants to add a repository, I have to give them access to the “system” ConfigMap.

Also, authentication on a Git server uses Kubernetes Secrets stored in the ArgoCD namespace, so the developer will need access there too.

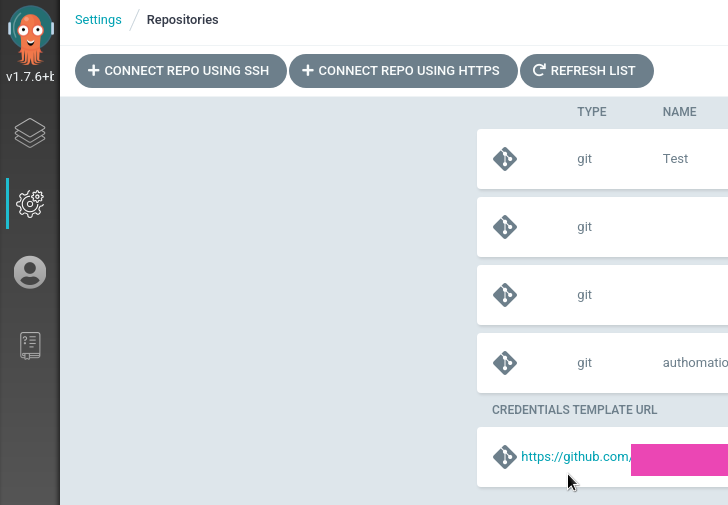

Still, ArgoCD has a way to authenticate on a Git server for different repositories with the same authentication settings, see Repository Credentials.

The idea is that we can set a “mask” for repositories, e.g. https://github.com/projectname, and attach a login and password or an SSH private key to it. Then a developer can specify a repository such as https://github.com/projectname/reponame, and ArgoCD will match it against the github.com/projectname mask and use those credentials to authenticate on the server for the github.com/projectname/reponame repository.

In this way, we create a “single point of authentication” that all our developers can use for their repositories, as all our repositories are located in the same GitHub Organization, projectname.
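The lookup can be pictured as a simple URL prefix match. The following shell sketch models it under that assumption; creds_for is a hypothetical helper and the second repository URL is invented, while the real matching happens inside ArgoCD.

```shell
#!/bin/sh
# Sketch only: models the credential "mask" lookup as a URL prefix match.
# creds_for is a hypothetical helper; the real matching is done by ArgoCD.
creds_for() {
  template="https://github.com/projectname"
  case "$1" in
    "$template"*) echo "use credentials from template $template" ;;
    *)            echo "no matching template" ;;
  esac
}

creds_for https://github.com/projectname/devops-kubernetes.git
creds_for https://github.com/another-org/some-repo.git
```

The first call falls under the mask, so it would reuse the shared login; the second does not and would need credentials of its own.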

Repository Secret

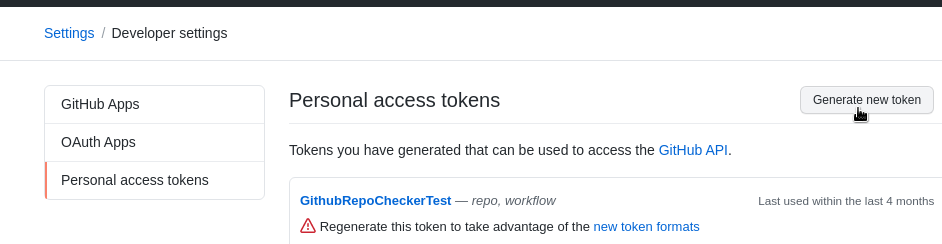

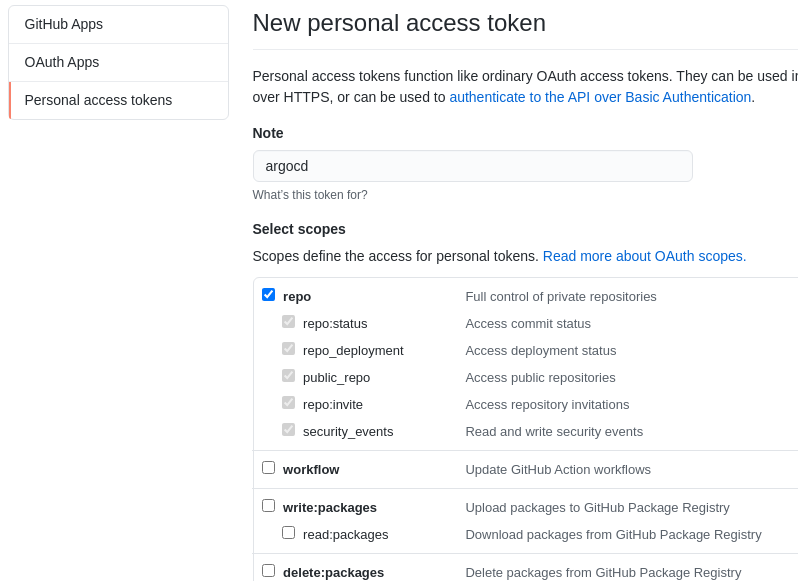

Here, we will use HTTPS with a GitHub access token.

Go to the GitHub profile and create a token (it is best to create a dedicated user for ArgoCD):

Grant it permissions for repositories:

Encode the token with base64 in a terminal or by using https://www.base64encode.org:

[simterm]

$ echo -n ghp_GE***Vx911 | base64
Z2h***xMQ==

[/simterm]

And the username:

[simterm]

$ echo -n username | base64
c2V***eTI=

[/simterm]
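The -n flag matters here: without it, echo appends a newline, the newline gets encoded too (the result then ends in something like Qo= instead of Q==), and it becomes part of the decoded credential. A minimal sketch of the difference, using the made-up value abc1 in place of a real token:

```shell
#!/bin/sh
# Sketch only: shows how a trailing newline changes the base64 result.
# 'abc1' is a made-up stand-in for a real token or username.
encode_exact()   { printf '%s' "$1" | base64; }     # no trailing newline
encode_with_nl() { printf '%s\n' "$1" | base64; }   # what a plain `echo` would send

encode_exact abc1      # prints YWJjMQ==
encode_with_nl abc1    # prints YWJjMQo=
```

printf '%s' is used instead of echo -n because its behaviour is the same across shells.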

Add a Kubernetes Secret in the ArgoCD Namespace:

apiVersion: v1
kind: Secret
metadata:
  name: github-access
  namespace: dev-1-18-devops-argocd-ns
data:
  username: c2V***eTI=
  password: Z2h***xMQ==

Create it:

[simterm]

$ kubectl apply -f secret.yaml
secret/github-access created

[/simterm]

Reference it in the argocd-cm ConfigMap:

...
  repository.credentials: |
    - url: https://github.com/projectname
      passwordSecret:
        name: github-access
        key: password
      usernameSecret:
        name: github-access
        key: username
...

Check:

[simterm]

$ argocd repocreds list
URL PATTERN                     USERNAME  SSH_CREDS  TLS_CREDS
https://github.com/projectname  username  false      false

[/simterm]

And try to synchronize the application:

[simterm]

$ argocd app sync backend-app
...
Message:            successfully synced (all tasks run)

GROUP  KIND     NAMESPACE                           NAME      STATUS  HEALTH       HOOK  MESSAGE
       Service  dev-1-18-backend-argocdapp-test-ns  test-svc  Synced  Progressing        service/test-svc created

[/simterm]

Jenkins, Ansible, and ArgoCD

Now it’s time to think about automation.

Our ArgoCD is installed from its Helm chart by an Ansible role that uses the community.kubernetes module, similar to what is described in Ansible: the community.kubernetes module and installing a Helm chart with ExternalDNS (in Russian).

What we need to do:

- add a Secret for GitHub to the Ansible role

- add repository.credentials to the ArgoCD settings

- create directories for the Project and Application manifests, and add community.kubernetes.k8s tasks to the Ansible role that will use those manifests. This way, applications will be created automatically when a new ArgoCD instance is provisioned, and developers will be able to add new applications by themselves

Ansible Kubernetes Secret

In a variables file, group_vars/all.yaml in our case, add two new variables and encrypt them with ansible-vault. Do not forget to encode the values with base64 before encrypting:

...
argocd_github_access_username: !vault |
  $ANSIBLE_VAULT;1.1;AES256
  63623436326661333236383064636431333532303436323735363063333264306535313934373464
  ...
  3132663634633764360a666162616233663034366536333765666364643363336130323137613333
  3636
argocd_github_access_password: !vault |
  $ANSIBLE_VAULT;1.1;AES256
  61393931663234653839326232383435653562333435353435333962363361643634626664643062
  ...
  6239623265306462343031653834353562613264336230613466
...

In the role tasks, in this case roles/argocd/tasks/main.yml, add the Secret creation:

...
- name: "Create github-access Secret"
  community.kubernetes.k8s:
    definition:
      kind: Secret
      apiVersion: v1
      metadata:
        name: "github-access"
        namespace: "{{ eks_env }}-devops-argocd-2-0-ns"
      data:
        username: "{{ argocd_github_access_username }}"
        password: "{{ argocd_github_access_password }}"
...

In the values of the Helm chart add repository.credentials, see values.yaml:

...
- name: "Deploy ArgoCD chart to the {{ eks_env }}-devops-argocd-2-0-ns namespace"
  community.kubernetes.helm:
    kubeconfig: "{{ kube_config_path }}"
    name: "argocd20"
    chart_ref: "argo/argo-cd"
    release_namespace: "{{ eks_env }}-devops-argocd-2-0-ns"
    create_namespace: true
    values:
      ...
      server:
        service:
          type: "LoadBalancer"
          loadBalancerSourceRanges:
            ...
        config:
          url: "https://{{ eks_env }}.argocd-2-0.example.com"
          repository.credentials: |
            - url: "https://github.com/projectname/"
              passwordSecret:
                name: github-access
                key: password
              usernameSecret:
                name: github-access
                key: username
...

Ansible ArgoCD Projects and Applications

Create directories to store manifest files:

[simterm]

$ mkdir -p roles/argocd/templates/{projects,applications/{backend,web}}

[/simterm]

In the roles/argocd/templates/projects/ directory create two files for two projects:

[simterm]

$ vim -p roles/argocd/templates/projects/backend-project.yaml.j2 roles/argocd/templates/projects/web-project.yaml.j2

[/simterm]

Describe the Backend Project:

apiVersion: argoproj.io/v1alpha1
kind: AppProject
metadata:
  name: "backend"
  namespace: "{{ eks_env }}-devops-argocd-2-0-ns"
  finalizers:
    - resources-finalizer.argocd.argoproj.io
spec:
  description: "Backend project"
  sourceRepos:
    - '*'
  destinations:
    - namespace: "{{ eks_env }}-backend-*"
      server: "https://kubernetes.default.svc"
  clusterResourceWhitelist:
    - group: ''
      kind: Namespace
  namespaceResourceWhitelist:
    - group: "*"
      kind: "*"
  roles:
    - name: "backend-app-admin"
      description: "Backend team's deployment role"
      policies:
        - p, proj:backend:backend-app-admin, applications, *, backend/*, allow
      groups:
        - "Backend"
  orphanedResources:
    warn: true

Repeat the same for the Web project.

Then create the applications.

Add a roles/argocd/templates/applications/backend/backend-test-app.yaml.j2 file:

apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: "backend-app-1"
  namespace: "{{ eks_env }}-devops-argocd-2-0-ns"
  finalizers:
    - resources-finalizer.argocd.argoproj.io
spec:
  project: "backend"
  source:
    repoURL: "https://github.com/projectname/devops-kubernetes.git"
    targetRevision: "DVPS-967-ArgoCD-declarative-bootstrap"
    path: "tests/test-svc"
    helm:
      parameters:
        - name: serviceType
          value: LoadBalancer
  destination:
    server: "https://kubernetes.default.svc"
    namespace: "{{ eks_env }}-backend-argocdapp-test-ns"
  syncPolicy:
    automated:
      prune: true
      selfHeal: false
    syncOptions:
      - Validate=true
      - CreateNamespace=true
      - PrunePropagationPolicy=foreground
      - PruneLast=true
    retry:
      limit: 2

At the end of the tasks file, add tasks that apply those manifests: first the Projects, then the Applications:

...
- name: "Create a Backend project"
  community.kubernetes.k8s:
    kubeconfig: "{{ kube_config_path }}"
    state: present
    template: roles/argocd/templates/projects/backend-project.yaml.j2

- name: "Create a Web project"
  community.kubernetes.k8s:
    kubeconfig: "{{ kube_config_path }}"
    state: present
    template: roles/argocd/templates/projects/web-project.yaml.j2

- name: "Create Backend applications"
  community.kubernetes.k8s:
    kubeconfig: "{{ kube_config_path }}"
    state: present
    template: "{{ item }}"
  with_fileglob:
    - roles/argocd/templates/applications/backend/*.yaml.j2

- name: "Create Web applications"
  community.kubernetes.k8s:
    kubeconfig: "{{ kube_config_path }}"
    state: present
    template: "{{ item }}"
  with_fileglob:
    - roles/argocd/templates/applications/web/*.yaml.j2

For Applications, we use with_fileglob to pick up all files from the subdirectories of roles/argocd/templates/applications/, because the plan is to keep a dedicated file for each Application, which makes it easier for developers to manage them.
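Before running the playbook, it can be handy to preview which templates such a glob would pick up. The shell sketch below lists the *.yaml.j2 files of a single directory; list_templates is a hypothetical helper, and shell globbing only approximates Ansible's fileglob lookup (for instance, the shell sorts the names, which Ansible does not promise).

```shell
#!/bin/sh
# Sketch only: lists the files a "*.yaml.j2" glob picks up in one directory.
# list_templates is a hypothetical helper used to preview the match.
list_templates() {
  for f in "$1"/*.yaml.j2; do
    [ -e "$f" ] || continue          # the glob matched nothing
    printf '%s\n' "${f##*/}"         # print the file name without its path
  done
}

list_templates roles/argocd/templates/applications/backend
```

Like with_fileglob, this does not descend into subdirectories, so a template placed one level deeper would not be applied.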

Jenkins

See examples in Jenkins: RTFM 2.6 migration – Jenkins Pipeline for Ansible and Helm: step-by-step chart creation and deployment from Jenkins (both in Russian, unfortunately).

I will not describe this in detail, but in short, we are using a Scripted Pipeline with a provision.ansibleApply() function call:

...
stage("Apply") {
    // ansibleApply(playbookFile='1', tags='2', passfile_id='3', limit='4')
    provision.ansibleApply("${PLAYBOOK}", "${env.TAGS}", "${PASSFILE_ID}", "${EKS_ENV}")
}
...

The function looks like this:

...
def ansibleApply(playbookFile='1', tags='2', passfile_id='3', limit='4') {
    withCredentials([file(credentialsId: "${passfile_id}", variable: 'passfile')]) {
        docker.image('projectname/kubectl-aws:4.5').inside('-v /var/run/docker.sock:/var/run/docker.sock --net=host') {
            sh """
                aws sts get-caller-identity
                ansible-playbook ${playbookFile} --tags ${tags} --vault-password-file ${passfile} --limit ${limit}
            """
        }
    }
}
...

Here, Docker spins up a container from our image with the AWS CLI and Ansible. Inside it, the Ansible playbook is run with the given tag, which executes only the necessary Ansible role.

In the playbook, we have tags set for each role, so it’s easy to execute only one role:

...
- role: argocd
  tags: argocd, create-cluster
...

As a result, we have a Jenkins job with the following parameters:

Run it:

Log in to the new ArgoCD instance:

[simterm]

$ argocd login dev-1-18.argocd-2-0.example.com --name [email protected]

[/simterm]

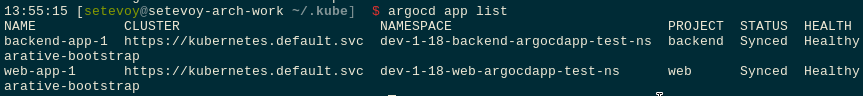

Check applications:

Done.
