In the previous post, ArgoCD: an overview, SSL configuration, and an application deploy, we took a quick look at how to work with ArgoCD in general. Now let's try to deploy a Helm chart.

The most interesting part here is enabling Helm Secrets. It took some pain, but it finally works as expected.

ArgoCD: a Helm chart deployment

Create a testing chart:

[simterm]

$ helm create test-helm-chart
Creating test-helm-chart

[/simterm]

Check it locally:

[simterm]

$ helm upgrade --install --namespace dev-1-test-helm-chart-ns --create-namespace test-helm-chart-release test-helm-chart/ --debug --dry-run
...
{}
NOTES:
1. Get the application URL by running these commands:
  export POD_NAME=$(kubectl get pods --namespace dev-1-test-helm-chart-ns -l "app.kubernetes.io/name=test-helm-chart,app.kubernetes.io/instance=test-helm-chart-release" -o jsonpath="{.items[0].metadata.name}")
  export CONTAINER_PORT=$(kubectl get pod --namespace dev-1-test-helm-chart-ns $POD_NAME -o jsonpath="{.spec.containers[0].ports[0].containerPort}")
  echo "Visit http://127.0.0.1:8080 to use your application"
  kubectl --namespace dev-1-test-helm-chart-ns port-forward $POD_NAME 8080:$CONTAINER_PORT

[/simterm]

Okay, it's working. Push it to a GitHub repository.

ArgoCD: adding a private GitHub repository

GitHub SSH key

We have a GitHub organization. Later we will create a dedicated GitHub user for ArgoCD, but for now we can add a new RSA key to our own account.

We could also configure access with a login and a token, but an SSH key seems to be a better choice.

Generate a key:

[simterm]

$ ssh-keygen -f ~/.ssh/argocd-github-key
Generating public/private rsa key pair.
...

[/simterm]
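If you want to script this step, here is a minimal sketch. It assumes that ArgoCD cannot use a passphrase-protected key (it has no way to ask for the passphrase when cloning), so `-N ""` sets an empty one. The function name `generate_argocd_key` is hypothetical, and the 4096-bit key size is my choice rather than something from the steps above.

```sh
#!/bin/sh
# Hypothetical helper: create an RSA key pair for ArgoCD's access to GitHub.
# Assumption: ArgoCD needs an unencrypted key, so -N "" sets an empty passphrase.
generate_argocd_key() {
  key_path=${1:-$HOME/.ssh/argocd-github-key}
  # Refuse to overwrite an existing key (ssh-keygen would prompt interactively).
  if [ -e "$key_path" ]; then
    echo "key already exists: $key_path" >&2
    return 1
  fi
  # -q: quiet, -C: comment stored in the public key
  ssh-keygen -t rsa -b 4096 -N "" -C "argocd" -f "$key_path" -q
}
```

Usage: `generate_argocd_key ~/.ssh/argocd-github-key`, then add `~/.ssh/argocd-github-key.pub` to GitHub and paste the private key into ArgoCD.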

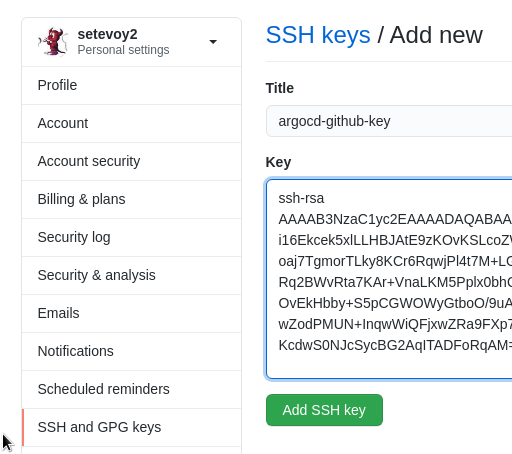

Add the public key, ~/.ssh/argocd-github-key.pub, to GitHub in Settings > SSH keys:

ArgoCD repositories

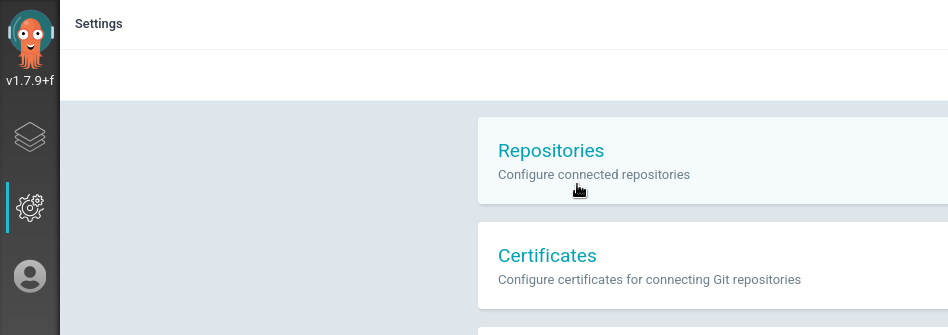

Go to the Settings – Repositories:

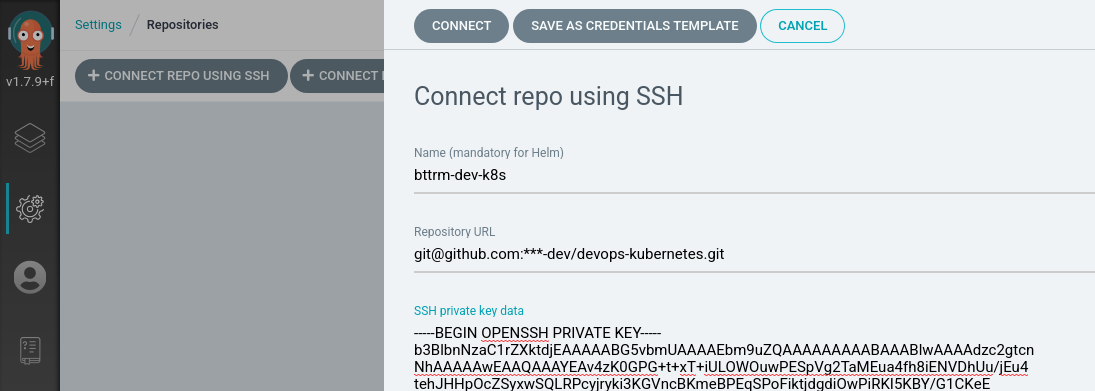

Choose Connect repo using SSH:

Set a name and the repository URL, and add the private key:

The key will be stored in a Kubernetes Secret:

[simterm]

$ kubectl -n dev-1-devops-argocd-ns get secrets
NAME                                        TYPE                                  DATA   AGE
argocd-application-controller-token-mc457   kubernetes.io/service-account-token   3      45h
argocd-dex-server-token-74r75               kubernetes.io/service-account-token   3      45h
argocd-secret                               Opaque                                5      45h
argocd-server-token-54mfx                   kubernetes.io/service-account-token   3      45h
default-token-6mmr5                         kubernetes.io/service-account-token   3      45h
repo-332507798                              Opaque                                1      13m

[/simterm]

repo-332507798 – here it is.

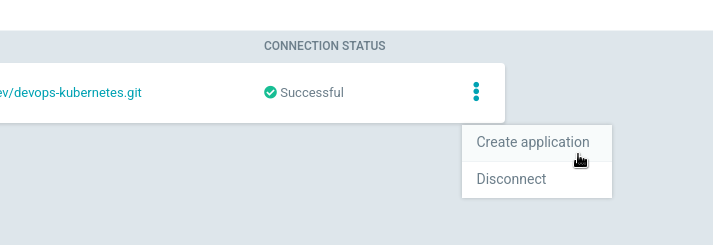

Click Connect.

Adding an ArgoCD application

Create a new application:

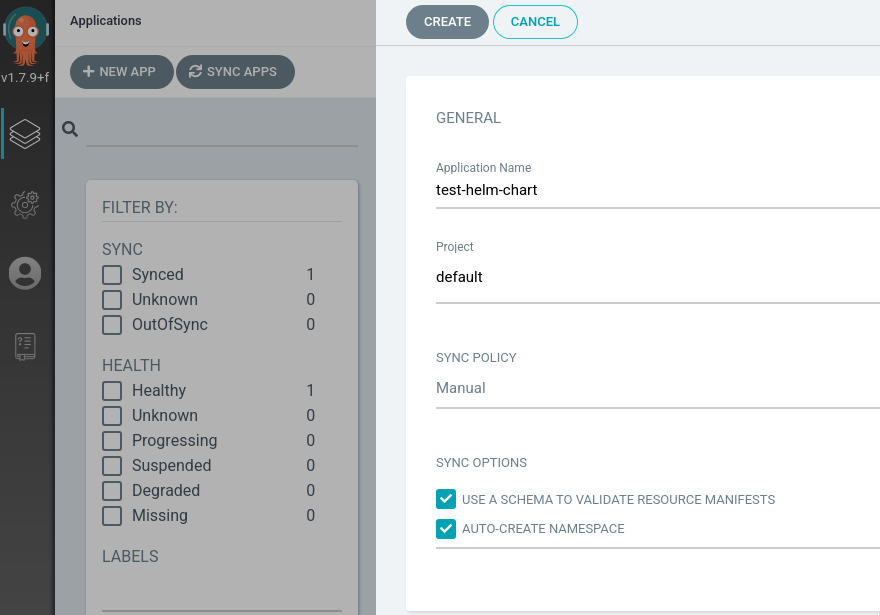

Set its name, leave the Project as default, and in the Sync Policy you can enable Auto-create namespace:

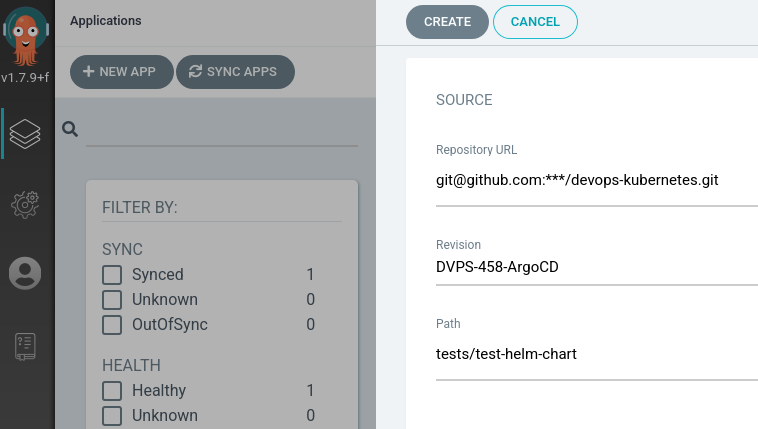

In the Source, leave Git, set the repository URL, specify a branch in the Revision, and in the Path set the path to the directory with our chart.

In this case, the repository is devops-kubernetes and the chart's directory is tests/test-helm-chart/. ArgoCD scans the repository and suggests the available directories to choose from:

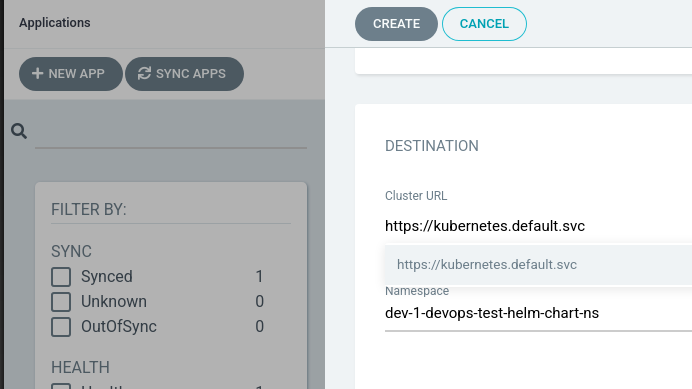

In the Destination, choose the local Kubernetes cluster and set the namespace the chart will be deployed to:

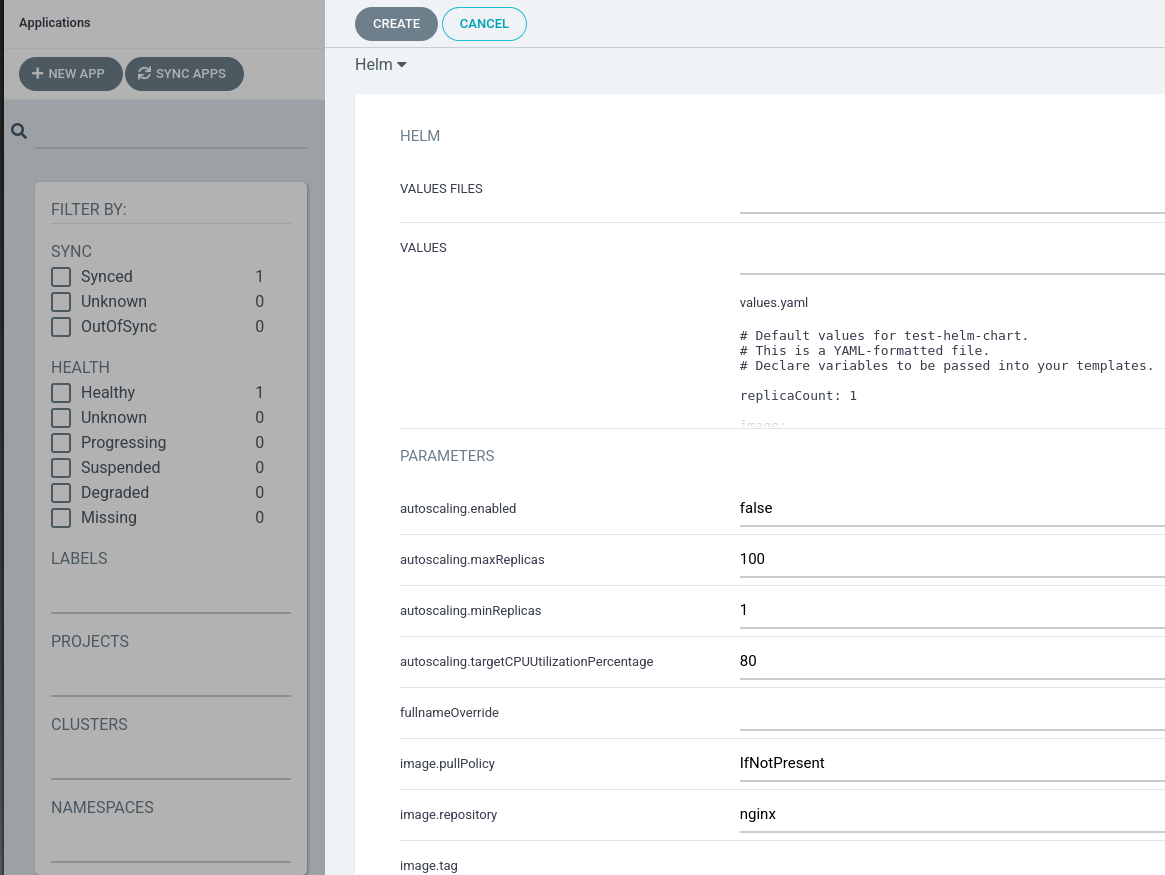

Below the Destination, set Helm instead of Directory. In fact, Argo has already detected that this directory contains a Helm chart, selected Helm by itself, and read the values from values.yaml.

Everything can be left at the defaults; later we will add our secrets.yaml here:

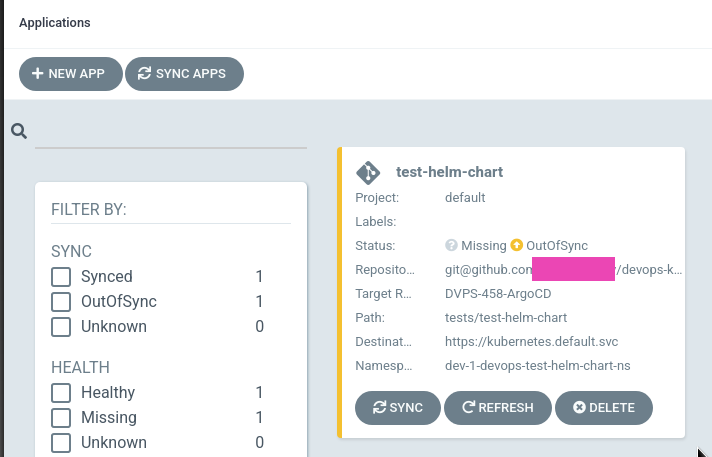

Done:

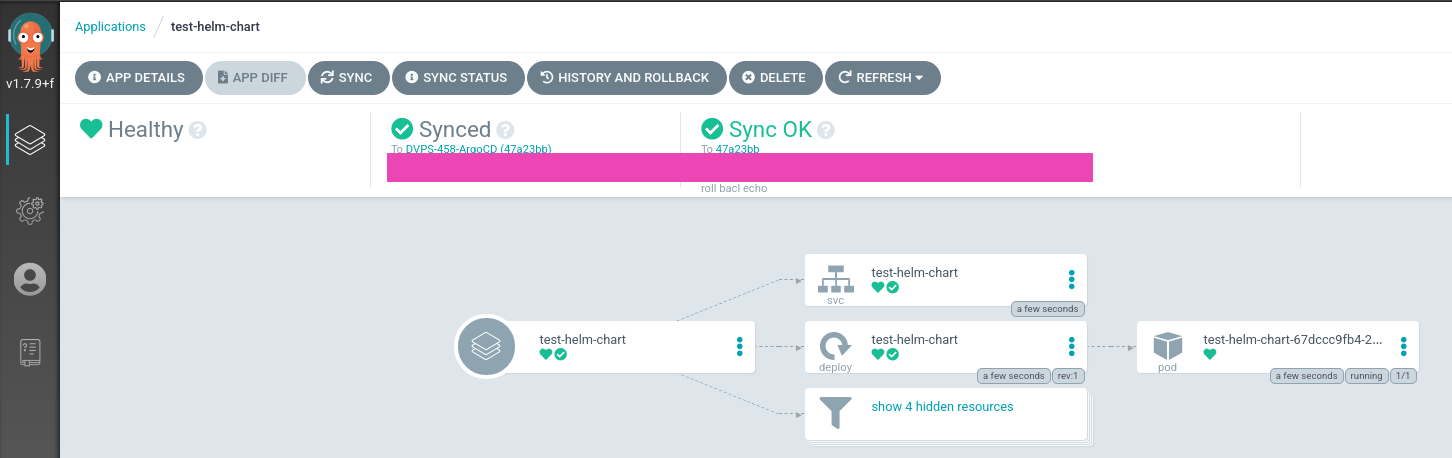
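The same application can also be described declaratively as an ArgoCD Application resource, which is handy once the setup is automated. A minimal sketch: `render_application` is a hypothetical helper, the repository URL is something you supply, and the `CreateNamespace=true` sync option (the equivalent of the Auto-create namespace checkbox) assumes an ArgoCD version that supports it.

```sh
#!/bin/sh
# Hypothetical helper: print an ArgoCD Application manifest equivalent to the
# settings chosen in the UI. Arguments after the fifth are Helm values files.
render_application() {
  app_name=$1 repo_url=$2 revision=$3 chart_path=$4 dest_ns=$5
  shift 5
  cat <<EOF
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: ${app_name}
  # Application resources live in the namespace ArgoCD is installed to
  namespace: dev-1-devops-argocd-ns
spec:
  project: default
  source:
    repoURL: ${repo_url}
    targetRevision: ${revision}
    path: ${chart_path}
    helm:
      valueFiles:
EOF
  # One list item per values file, relative to the chart path
  for values_file in "$@"; do
    printf '        - %s\n' "$values_file"
  done
  cat <<EOF
  destination:
    server: https://kubernetes.default.svc
    namespace: ${dest_ns}
  syncPolicy:
    # Same as the "Auto-create namespace" checkbox (assumes ArgoCD supports it)
    syncOptions:
      - CreateNamespace=true
EOF
}
```

For example: `render_application test-helm-chart "$REPO_URL" DVPS-458-ArgoCD tests/test-helm-chart dev-1-devops-test-helm-chart-ns values.yaml | kubectl apply -f -`, with `REPO_URL` set to your repository's SSH URL. Sync stays manual, just like in the UI steps.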

If you click on the application now, you'll see that ArgoCD has already scanned the templates and generated the manifests to show which resources this Helm chart will deploy:

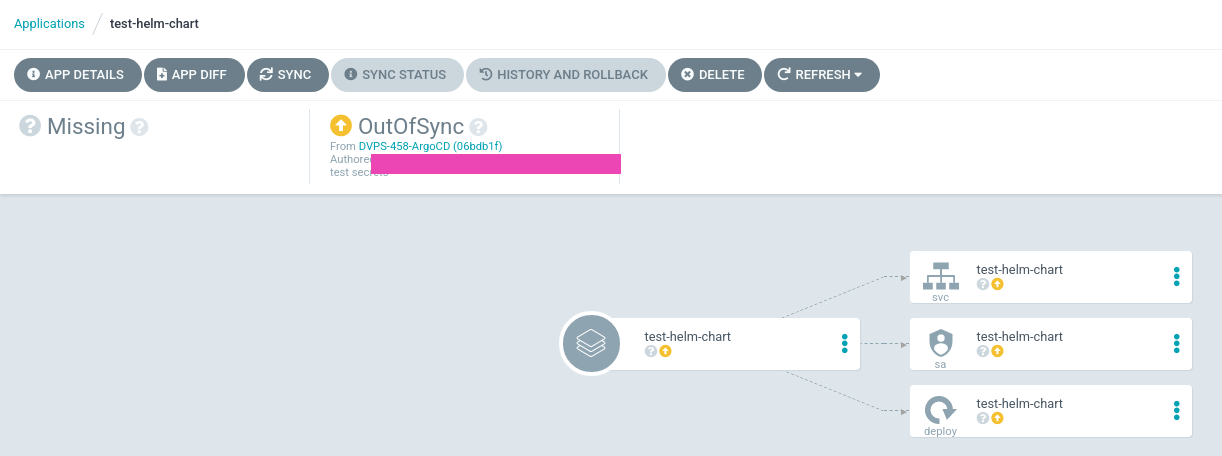

Click Sync to see the available options, such as Prune and Dry Run:

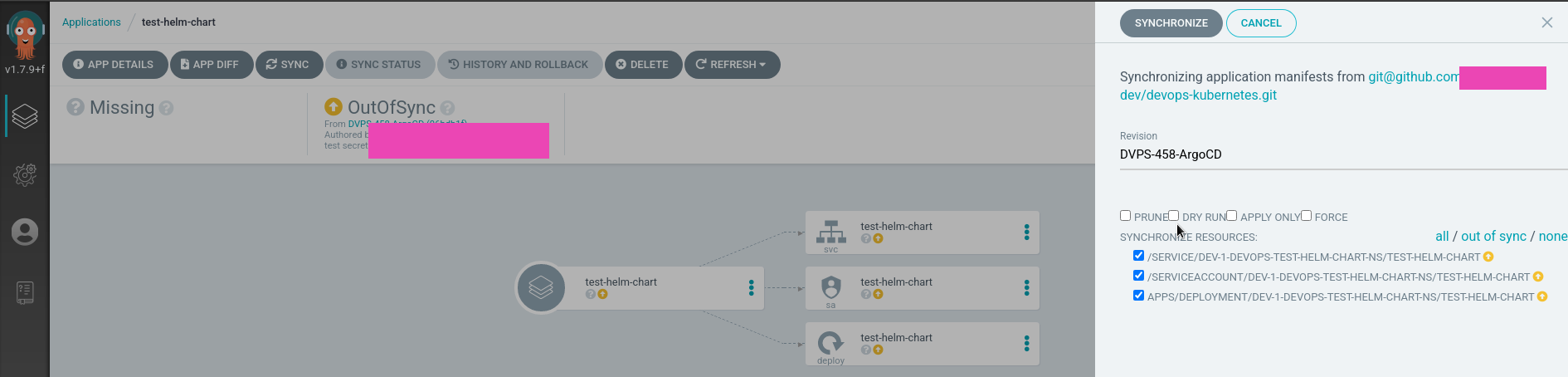

Click Synchronize, and the deployment starts:

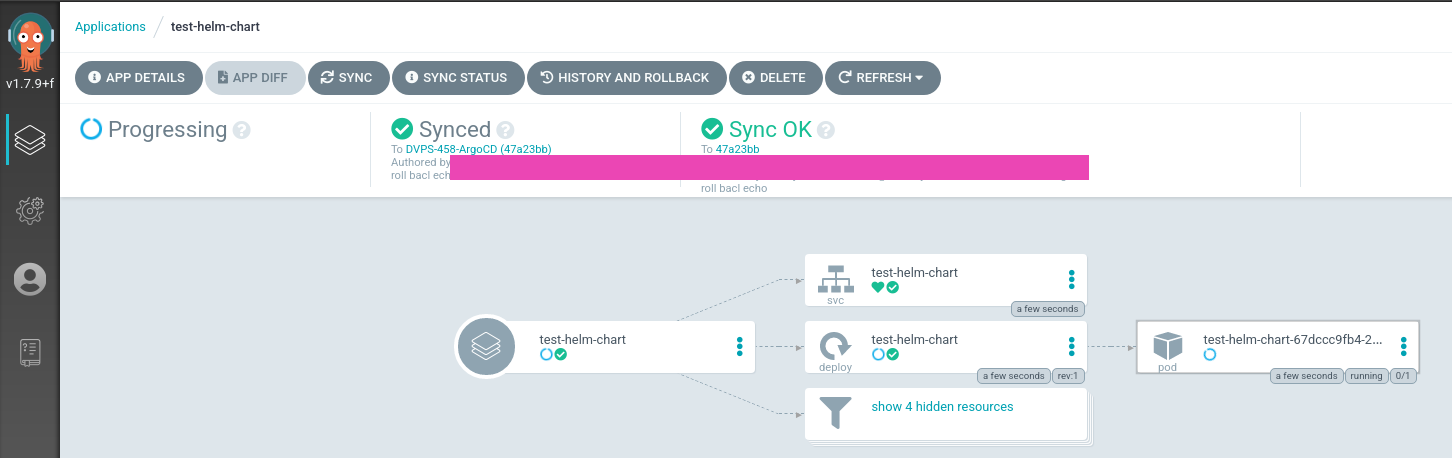

Finished – everything is up and running:

Check the applications list now:

[simterm]

$ argocd app list
NAME             CLUSTER                         NAMESPACE                        PROJECT  STATUS  HEALTH   SYNCPOLICY  CONDITIONS  REPO                                                 PATH                   TARGET
guestbook        https://kubernetes.default.svc  default                          default  Synced  Healthy  <none>      <none>      https://github.com/argoproj/argocd-example-apps.git  guestbook              HEAD
test-helm-chart  https://kubernetes.default.svc  dev-1-devops-test-helm-chart-ns  default  Synced  Healthy  <none>      <none>      git@github.com:***/devops-kubernetes.git             tests/test-helm-chart  DVPS-458-ArgoCD

[/simterm]

A pod in the namespace:

[simterm]

$ kubectl -n dev-1-devops-test-helm-chart-ns get pod
NAME                               READY   STATUS    RESTARTS   AGE
test-helm-chart-67dccc9fb4-2m5rf   1/1     Running   0          2m27s

[/simterm]

And now we can go to the Helm secrets configuration.

ArgoCD and Helm Secrets

Everything was easy so far, but things get harder once we want to use our secrets: the Helm shipped with ArgoCD doesn't have the helm-secrets plugin installed.

The available options are to build a custom Docker image with ArgoCD, as described in the documentation, or to install plugins with a Kubernetes InitContainer through a shared volume.

InitContainer with a shared-volume

The first solution I tried was an InitContainer with a shared volume, and in general it works fine: the plugin gets installed.

The Deployment for the argocd-repo-server looked like this:

```yaml
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app.kubernetes.io/component: repo-server
    app.kubernetes.io/name: argocd-repo-server
    app.kubernetes.io/part-of: argocd
  name: argocd-repo-server
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: argocd-repo-server
  template:
    metadata:
      labels:
        app.kubernetes.io/name: argocd-repo-server
    spec:
      automountServiceAccountToken: false
      initContainers:
      - name: argo-tools
        image: alpine/helm
        command: [sh, -c]
        args:
          - apk add git &&
            apk add curl &&
            apk add bash &&
            helm plugin install https://github.com/futuresimple/helm-secrets
        volumeMounts:
        - mountPath: /root/.local/share/helm/plugins/
          name: argo-tools
      containers:
      - command:
        - uid_entrypoint.sh
        - argocd-repo-server
        - --redis
        - argocd-redis:6379
        image: argoproj/argocd:v1.7.9
        imagePullPolicy: Always
        name: argocd-repo-server
        ports:
        - containerPort: 8081
        - containerPort: 8084
        readinessProbe:
          initialDelaySeconds: 5
          periodSeconds: 10
          tcpSocket:
            port: 8081
        volumeMounts:
        - mountPath: /app/config/ssh
          name: ssh-known-hosts
        - mountPath: /app/config/tls
          name: tls-certs
        - mountPath: /app/config/gpg/source
          name: gpg-keys
        - mountPath: /app/config/gpg/keys
          name: gpg-keyring
        - mountPath: /home/argocd/.local/share/helm/plugins/
          name: argo-tools
      volumes:
      - configMap:
          name: argocd-ssh-known-hosts-cm
        name: ssh-known-hosts
      - configMap:
          name: argocd-tls-certs-cm
        name: tls-certs
      - configMap:
          name: argocd-gpg-keys-cm
        name: gpg-keys
      - emptyDir: {}
        name: gpg-keyring
      - emptyDir: {}
        name: argo-tools
```

Here, an emptyDir volume named argo-tools is created. An initContainer, also called argo-tools, mounts this volume at /root/.local/share/helm/plugins/, installs git, curl, and bash, and finally runs helm plugin install https://github.com/futuresimple/helm-secrets.

The same argo-tools volume is mounted into the argocd-repo-server container at /home/argocd/.local/share/helm/plugins/, so helm in that container can see the plugin and use it.

But here is the problem: how do we make ArgoCD run helm secrets (for example, helm secrets install) instead of plain helm? ArgoCD calls the /usr/local/bin/helm binary, and there is no way to pass additional arguments to it.

So I had to use the second option: build a custom image with helm-secrets and sops installed, and write a wrapper script that is called in place of the helm binary.

Building ArgoCD Docker image with the helm-secrets plugin installed

The solution was found in the post How to Handle Kubernetes Secrets with ArgoCD and Sops.

First, we need to write the wrapper script.

The script is called instead of the /usr/local/bin/helm binary. When it receives one of the template, install, upgrade, lint, or diff commands, which the helm-secrets plugin understands, it passes the command with all its arguments to helm secrets; any other command goes straight to the original helm binary.

After helm secrets has run, its output is printed with the "removed 'secrets.yaml.dec'" lines deleted. Save the script as helm-wrapper.sh, the name the Dockerfile expects:

```sh
#!/bin/sh

# helm secrets only supports a few helm commands
if [ "$1" = "template" ] || [ "$1" = "install" ] || [ "$1" = "upgrade" ] || [ "$1" = "lint" ] || [ "$1" = "diff" ]
then
  # Helm secrets adds some useless output to every command, including template, namely
  # "removed '<secret-path>.dec'" for every decoded secret.
  # As ArgoCD uses the helm template output to compute the resources to apply, these lines
  # would cause a parsing error in ArgoCD, so we need to remove them.
  # We cannot use exec here as we need to pipe the output, so we call helm in a subprocess and
  # handle the return code ourselves.
  out=$(helm.bin secrets "$@")
  code=$?
  if [ $code -eq 0 ]; then
    # printf instead of echo here because we really don't want any backslash character processing
    printf '%s\n' "$out" | sed -E "/^removed '.+\.dec'$/d"
    exit 0
  else
    exit $code
  fi
else
  # helm.bin is the original helm binary
  exec helm.bin "$@"
fi
```
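The two pieces of logic in the wrapper, the command check and the output filter, can be pulled out into small functions and tested without helm, sops, or a cluster. A sketch with hypothetical function names: the `case` statement is an equivalent rewrite of the `if` chain, and the sed expression is the same one the wrapper uses.

```sh
#!/bin/sh
# Hypothetical helpers mirroring helm-wrapper.sh.

# Succeeds when helm-secrets understands the given helm subcommand.
is_secrets_command() {
  case "$1" in
    template|install|upgrade|lint|diff) return 0 ;;
    *) return 1 ;;
  esac
}

# Drops the "removed '<file>.dec'" lines helm-secrets prints after decrypting,
# so that only the rendered manifests reach ArgoCD.
strip_dec_notices() {
  sed -E "/^removed '.+\.dec'$/d"
}
```

Note that the wrapper deliberately captures the output first instead of piping helm.bin straight into sed: without pipefail, a plain pipe returns sed's exit code and would hide helm's failures from ArgoCD.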

The next step is to build our own Docker image with helm-secrets and sops, and replace /usr/local/bin/helm with our wrapper.

Check the latest SOPS release at https://github.com/mozilla/sops/releases/ and the latest helm-secrets release at https://github.com/zendesk/helm-secrets/releases.

Write a Dockerfile:

```dockerfile
FROM argoproj/argocd:v1.7.9

ARG SOPS_VERSION="v3.6.1"
ARG HELM_SECRETS_VERSION="2.0.2"

USER root

COPY helm-wrapper.sh /usr/local/bin/

RUN apt-get update --allow-insecure-repositories --allow-unauthenticated && \
    apt-get install -y \
      curl \
      gpg && \
    apt-get clean && \
    rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/* && \
    curl -o /usr/local/bin/sops -L https://github.com/mozilla/sops/releases/download/${SOPS_VERSION}/sops-${SOPS_VERSION}.linux && \
    chmod +x /usr/local/bin/sops && \
    cd /usr/local/bin && \
    mv helm helm.bin && \
    mv helm2 helm2.bin && \
    mv helm-wrapper.sh helm && \
    ln helm helm2 && \
    chmod +x helm helm2

# helm secrets plugin should be installed as user argocd or it won't be found
USER argocd

RUN /usr/local/bin/helm.bin plugin install https://github.com/zendesk/helm-secrets --version ${HELM_SECRETS_VERSION}

ENV HELM_PLUGINS="/home/argocd/.local/share/helm/plugins/"
```

Build the image. The repository below is public, so you can also use the image from it.

Tag the image with the ArgoCD version it was built from plus your own build number, here 1:

[simterm]

$ docker build -t setevoy/argocd-helm-secrets:v1.7.9-1 .
$ docker push setevoy/argocd-helm-secrets:v1.7.9-1

[/simterm]
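To keep the tag in sync with the base image, the ArgoCD version can be read from the Dockerfile's FROM line. A minimal sketch: `argocd_base_version`, `image_tag`, and the build-number argument are hypothetical (Jenkins, for example, exposes a `BUILD_NUMBER` variable, but any counter works), and the regex assumes the `FROM argoproj/argocd:vX.Y.Z` form used above.

```sh
#!/bin/sh
# Hypothetical helper: print the ArgoCD version (e.g. v1.7.9) from a Dockerfile
# whose base image is argoproj/argocd:<version>.
argocd_base_version() {
  sed -nE 's#^FROM argoproj/argocd:(v[0-9]+\.[0-9]+\.[0-9]+).*$#\1#p' "$1" | head -n 1
}

# Compose the image tag: <argocd version>-<build number>.
image_tag() {
  dockerfile=$1 build_number=$2
  version=$(argocd_base_version "$dockerfile")
  # Fail instead of producing a tag like ":-1" when FROM doesn't match
  [ -n "$version" ] || return 1
  printf 'setevoy/argocd-helm-secrets:%s-%s\n' "$version" "$build_number"
}
```

Usage: `docker build -t "$(image_tag Dockerfile "$BUILD_NUMBER")" .`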

Now we need to update the install.yaml that was used to deploy ArgoCD in the previous post.

SOPS and AWS KMS – authentication

In our case, we are using a key from the AWS Key Management Service, so SOPS in the container built from the setevoy/argocd-helm-secrets:v1.7.9-1 image must have access to the AWS account and to this key.

SOPS needs the ~/.aws/credentials and ~/.aws/config files, which we will mount into the pod from a Kubernetes Secret.

This could also be done with a ServiceAccount and an IAM role, but for now let's use the files.

AWS IAM User

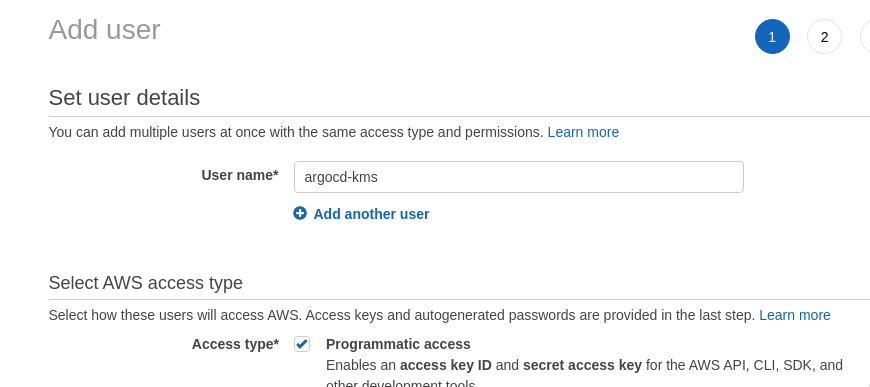

Create a dedicated AWS user to access the key: go to AWS IAM and give it Programmatic access:

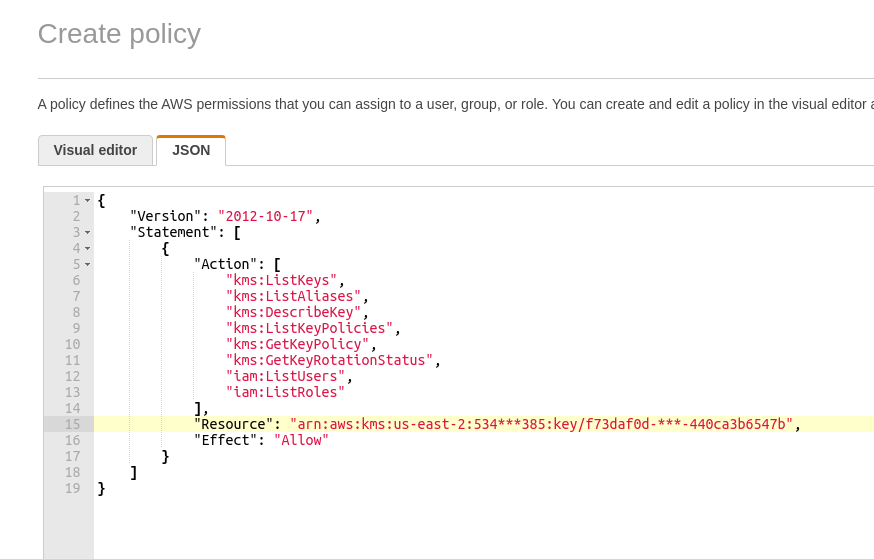

Next, create a ReadOnly IAM policy with access to only this one key to be used by SOPS:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Action": [
        "kms:ListKeys",
        "kms:ListAliases",
        "kms:DescribeKey",
        "kms:ListKeyPolicies",
        "kms:GetKeyPolicy",
        "kms:GetKeyRotationStatus",
        "iam:ListUsers",
        "iam:ListRoles"
      ],
      "Resource": "arn:aws:kms:us-east-2:534***385:key/f73daf0d-***-440ca3b6547b",
      "Effect": "Allow"
    }
  ]
}
```
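The same console steps can be done with the AWS CLI. A sketch, assuming the policy above is saved to a local JSON file and you run it with credentials that may manage IAM; `create_sops_user` is a hypothetical helper, and the user and policy names are up to you. Adding the user as a Key User in KMS remains a separate step.

```sh
#!/bin/sh
# Hypothetical helper: create the IAM user, create the KMS policy from a JSON
# file, attach the policy to the user, and create an access key for it.
create_sops_user() {
  user=$1 policy_name=$2 policy_file=$3
  aws iam create-user --user-name "$user" >/dev/null || return 1
  # --query/--output make the CLI print only the new policy's ARN
  policy_arn=$(aws iam create-policy \
    --policy-name "$policy_name" \
    --policy-document "file://${policy_file}" \
    --query 'Policy.Arn' --output text) || return 1
  aws iam attach-user-policy --user-name "$user" --policy-arn "$policy_arn" || return 1
  # "Programmatic access": prints the access key pair as JSON, store it safely
  aws iam create-access-key --user-name "$user"
}
```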

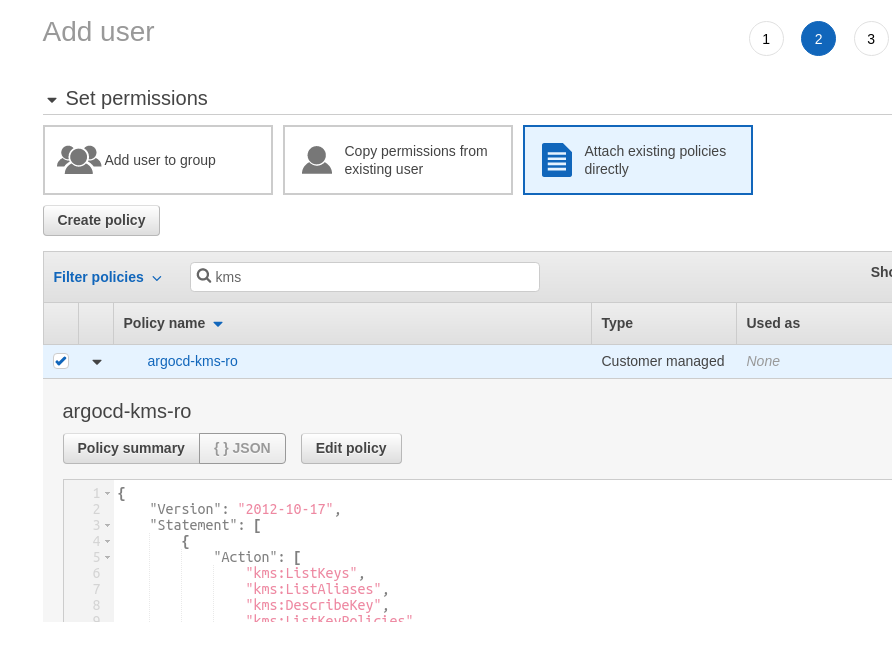

Save it and attach it to the user:

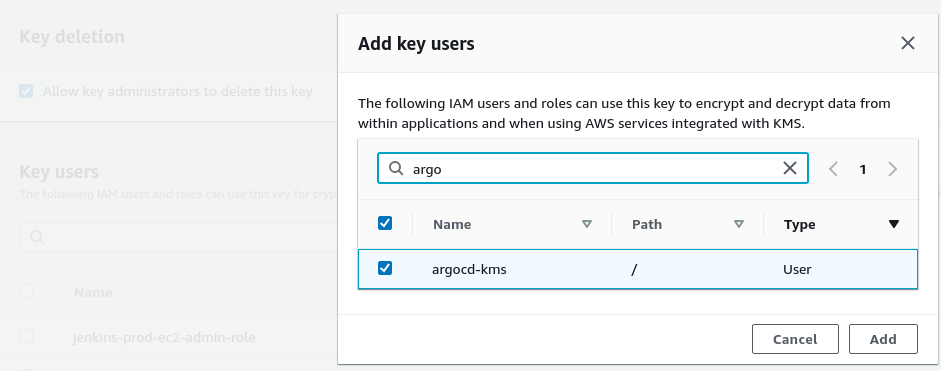

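Save the user, then go to AWS KMS and add it as a Key User of this key. The policy above covers only read-only operations such as kms:DescribeKey; the kms:Encrypt and kms:Decrypt permissions SOPS needs come from the key policy once the user is added as a Key User: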

Configure a local AWS profile:

[simterm]

$ aws configure --profile argocd-kms
AWS Access Key ID [None]: AKI***Q4F
AWS Secret Access Key [None]: S7c***6ya
Default region name [None]: us-east-2
Default output format [None]:

[/simterm]

Check the access:

[simterm]

$ aws --profile argocd-kms kms describe-key --key-id f73daf0d-***-440ca3b6547b
{
    "KeyMetadata": {
        "AWSAccountId": "534***385",
        "KeyId": "f73daf0d-***-440ca3b6547b",
        "Arn": "arn:aws:kms:us-east-2:534***385:key/f73daf0d-***-440ca3b6547b",
...

[/simterm]

We will use this profile to encrypt our secrets, and the same profile has to be available in the argocd-repo-server pod.

AWS credentials and config

Create a new Kubernetes Secret with the content of ~/.aws/credentials and ~/.aws/config; it will then be mounted into the argocd-repo-server pod:

```yaml
---
apiVersion: v1
kind: Secret
metadata:
  name: argocd-aws-credentials
  namespace: dev-1-devops-argocd-ns
type: Opaque
stringData:
  credentials: |
    [argocd-kms]
    aws_access_key_id = AKI***Q4F
    aws_secret_access_key = S7c***6ya
  config: |
    [profile argocd-kms]
    region = us-east-2
```

Add the file to the .gitignore:

[simterm]

$ cat .gitignore
argocd-aws-credentials.yaml

[/simterm]

Later, when we automate the ArgoCD roll-out, this file can be generated from Jenkins secrets.
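For that automation, the manifest can be rendered from environment variables instead of being kept on disk. A minimal sketch, assuming the CI job exposes the key pair as `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY` (for example through a Jenkins credentials binding); `render_aws_secret` is a hypothetical name.

```sh
#!/bin/sh
# Hypothetical helper: print the argocd-aws-credentials Secret manifest,
# taking the key pair from the environment instead of a file on disk.
render_aws_secret() {
  if [ -z "${AWS_ACCESS_KEY_ID:-}" ] || [ -z "${AWS_SECRET_ACCESS_KEY:-}" ]; then
    echo "AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY must be set" >&2
    return 1
  fi
  cat <<EOF
---
apiVersion: v1
kind: Secret
metadata:
  name: argocd-aws-credentials
  namespace: dev-1-devops-argocd-ns
type: Opaque
stringData:
  credentials: |
    [argocd-kms]
    aws_access_key_id = ${AWS_ACCESS_KEY_ID}
    aws_secret_access_key = ${AWS_SECRET_ACCESS_KEY}
  config: |
    [profile argocd-kms]
    region = us-east-2
EOF
}
```

Usage: `render_aws_secret | kubectl apply -f -`, so the credentials never land in a file in the workspace.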

Create the Secret:

[simterm]

$ kubectl apply -f argocd-aws-credentials.yaml
secret/argocd-aws-credentials created

[/simterm]

Update the argocd-repo-server Deployment: change the image, add a new volume from our Secret, and mount it at /home/argocd/.aws in the repo-server container:

```yaml
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app.kubernetes.io/component: repo-server
    app.kubernetes.io/name: argocd-repo-server
    app.kubernetes.io/part-of: argocd
  name: argocd-repo-server
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: argocd-repo-server
  template:
    metadata:
      labels:
        app.kubernetes.io/name: argocd-repo-server
    spec:
      automountServiceAccountToken: false
      containers:
      - command:
        - uid_entrypoint.sh
        - argocd-repo-server
        - --redis
        - argocd-redis:6379
        # image: argoproj/argocd:v1.7.9
        image: setevoy/argocd-helm-secrets:v1.7.9-1
        imagePullPolicy: Always
        name: argocd-repo-server
        ports:
        - containerPort: 8081
        - containerPort: 8084
        readinessProbe:
          initialDelaySeconds: 5
          periodSeconds: 10
          tcpSocket:
            port: 8081
        volumeMounts:
        - mountPath: /app/config/ssh
          name: ssh-known-hosts
        - mountPath: /app/config/tls
          name: tls-certs
        - mountPath: /app/config/gpg/source
          name: gpg-keys
        - mountPath: /app/config/gpg/keys
          name: gpg-keyring
        - mountPath: /home/argocd/.aws
          name: argocd-aws-credentials
      volumes:
      - configMap:
          name: argocd-ssh-known-hosts-cm
        name: ssh-known-hosts
      - configMap:
          name: argocd-tls-certs-cm
        name: tls-certs
      - configMap:
          name: argocd-gpg-keys-cm
        name: gpg-keys
      - emptyDir: {}
        name: gpg-keyring
      - name: argocd-aws-credentials
        secret:
          secretName: argocd-aws-credentials
```

Update the ArgoCD instance:

[simterm]

$ kubectl -n dev-1-devops-argocd-ns apply -f install.yaml

[/simterm]

Check pods:

[simterm]

$ kubectl -n dev-1-devops-argocd-ns get pod
NAME                                  READY   STATUS        RESTARTS   AGE
...
argocd-repo-server-64f4bbf4b7-jcs6x   1/1     Terminating   0          19h
argocd-repo-server-7c64775679-9jjq2   1/1     Running       0          12s

[/simterm]

Check files:

[simterm]

$ kubectl -n dev-1-devops-argocd-ns exec -ti argocd-repo-server-7c64775679-9jjq2 -- cat /home/argocd/.aws/credentials
[argocd-kms]
aws_access_key_id = AKI***Q4F
aws_secret_access_key = S7c***6ya

[/simterm]

Now let's try to use helm-secrets.

Adding secrets.yaml

In the chart's repository, create a new secrets.yaml file:

somePassword: secretValue

Create a .sops.yaml file with the KMS key and the AWS profile:

```yaml
---
creation_rules:
  - kms: 'arn:aws:kms:us-east-2:534****385:key/f73daf0d-***-440ca3b6547b'
    aws_profile: argocd-kms
```

Encrypt the file:

[simterm]

$ helm secrets enc secrets.yaml
Encrypting secrets.yaml
Encrypted secrets.yaml

[/simterm]
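Since secrets.yaml now lives in Git next to plain files, it's worth making sure it is never pushed unencrypted. A small sketch of such a check: it relies on SOPS adding a top-level `sops:` key with its metadata to every YAML file it encrypts. The function name is hypothetical; calling it from a git pre-commit hook is one option.

```sh
#!/bin/sh
# Hypothetical check: succeed only if every given YAML file was encrypted by SOPS.
# SOPS appends a top-level "sops:" map (kms, mac, version, ...) to encrypted YAML.
assert_sops_encrypted() {
  status=0
  for f in "$@"; do
    if ! grep -q '^sops:' "$f"; then
      echo "not encrypted with sops: $f" >&2
      status=1
    fi
  done
  return $status
}
```

Usage: `assert_sops_encrypted secrets.yaml && git add secrets.yaml`.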

Now use the secret in the test chart. For example, let's create an environment variable called TEST_SECRET_PASSWORD by updating templates/deployment.yaml:

```yaml
...
      containers:
        - name: {{ .Chart.Name }}
          securityContext:
            {{- toYaml .Values.securityContext | nindent 12 }}
          image: "{{ .Values.image.repository }}:{{ .Values.image.tag | default .Chart.AppVersion }}"
          imagePullPolicy: {{ .Values.image.pullPolicy }}
          env:
            - name: TEST_SECRET_PASSWORD
              # quote: env values must be strings in the rendered manifest
              value: {{ .Values.somePassword | quote }}
...
```
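Before pushing, you can check locally that the value is decrypted and rendered through the same helm secrets template path the wrapper takes inside ArgoCD. A sketch, assuming helm-secrets and sops are installed locally and your argocd-kms AWS profile can use the KMS key; the release and function names are arbitrary. Keep in mind it prints the decrypted value to the terminal.

```sh
#!/bin/sh
# Hypothetical helper: render the chart with values.yaml and secrets.yaml
# through helm-secrets and show the TEST_SECRET_PASSWORD env entry.
show_rendered_secret() {
  chart_dir=${1:-.}
  helm secrets template test-helm-chart-release "$chart_dir" \
    -f "$chart_dir/values.yaml" \
    -f "$chart_dir/secrets.yaml" \
    | grep -A1 'name: TEST_SECRET_PASSWORD'
}
```

Usage: run `show_rendered_secret .` from the chart directory.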

Push changes to the repository:

[simterm]

$ git add secrets.yaml templates/deployment.yaml
$ git commit -m "test secret added" && git push

[/simterm]

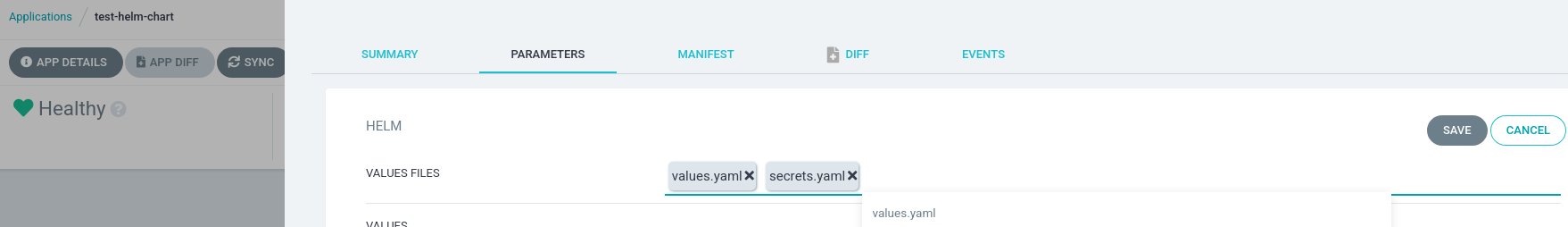

Go to the application's App Details > Parameters, click Edit, and set values.yaml and secrets.yaml as the Values Files:

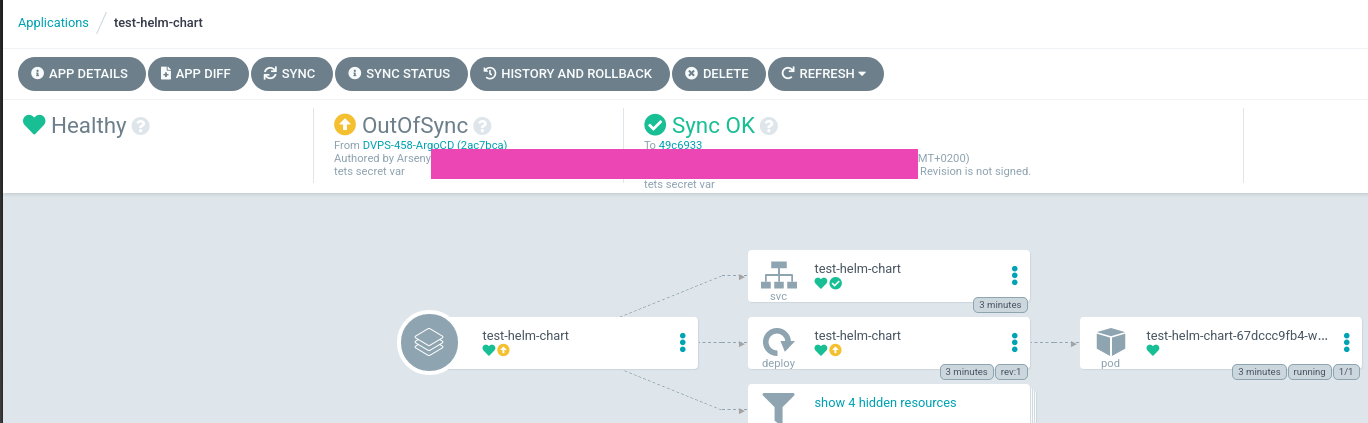

ArgoCD now sees that the Application is out of sync with the repository:

Sync it:

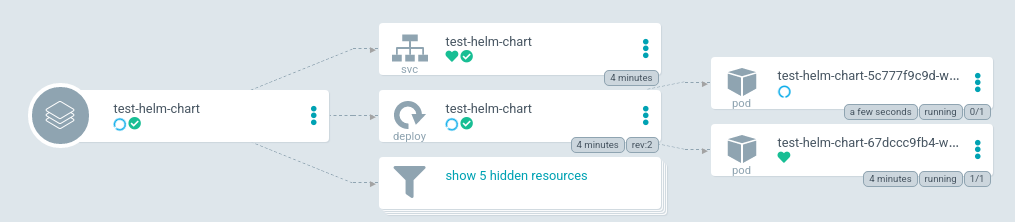

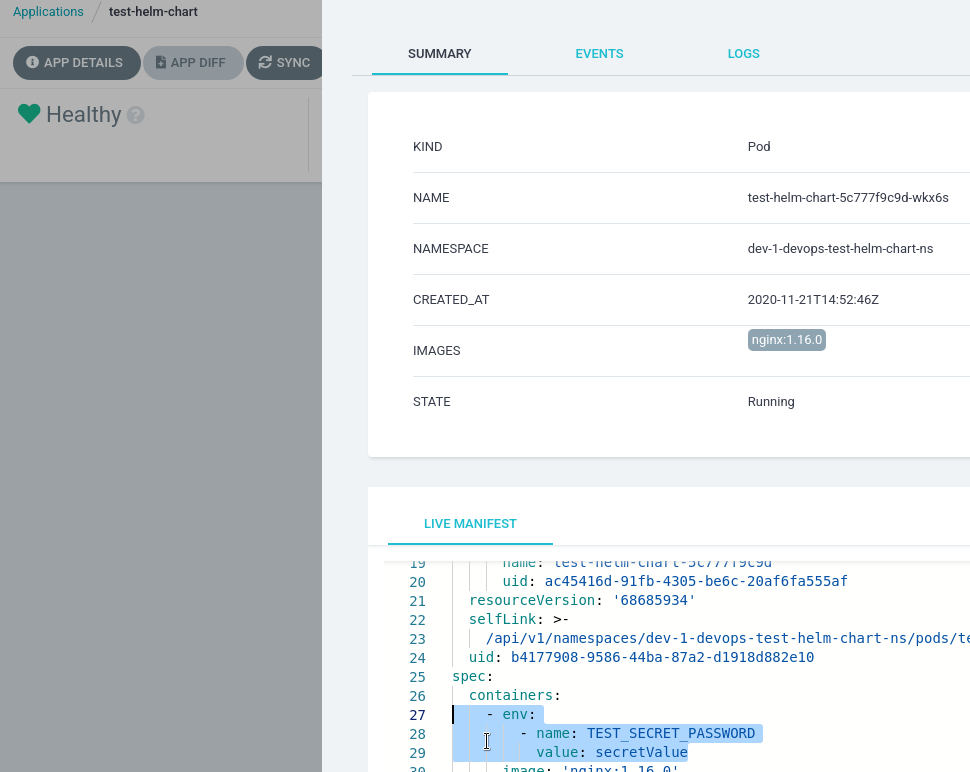

Check the new pod:

And the data directly in the pod:

[simterm]

$ kubectl -n dev-1-devops-test-helm-chart-ns exec -ti test-helm-chart-5c777f9c9d-wkx6s -- printenv | grep SECRET
TEST_SECRET_PASSWORD=secretValue

[/simterm]

All done – the secret is here.
