One of the goals of the ArgoCD implementation in our project is to use new Deployment Strategies for our applications.

In this post, we will look at the deployment types available in Kubernetes, how a Deployment works under the hood, and a quick example of Argo Rollouts.

Deployment Strategies and Kubernetes

Let’s start with a short overview of the deployment strategies that can be used in Kubernetes.

Out of the box, Kubernetes supports two values for .spec.strategy.type: Recreate and RollingUpdate, which is the default.

You can also implement a kind of Canary and Blue-Green deployment, although with some limitations.

See the Kubernetes documentation on Deployment strategies for details.

Recreate

The simplest and most straightforward type: during such a deployment, Kubernetes stops all existing pods and then spins up a new set.

Obviously, you’ll have some downtime here: first the old pods need to be stopped (see the Pod Lifecycle – Termination of Pods post for details), only then are the new ones created, and they still need to pass their Readiness checks. During this whole process, your application is unavailable to users.

It makes sense to use it if your application cannot run different versions at the same time, for example due to limitations of its database.

An example of such a deployment:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-deploy
spec:
  replicas: 2
  selector:
    matchLabels:
      app: hello-pod
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: hello-pod
        # the version label belongs to the pod template: the selector is immutable
        # and must match the template labels
        version: "1.0"
    spec:
      containers:
      - name: hello-pod
        image: nginxdemos/hello
        ports:
        - containerPort: 80

Deploy it with version: "1.0":

[simterm]

$ kubectl apply -f deployment.yaml
deployment.apps/hello-deploy created

[/simterm]

Check pods:

[simterm]

$ kubectl get pod -l app=hello-pod
NAME                            READY   STATUS    RESTARTS   AGE
hello-deploy-77bcf495b7-b2s2x   1/1     Running   0          9s
hello-deploy-77bcf495b7-rb8cb   1/1     Running   0          9s

[/simterm]

Update the label to version: "2.0", redeploy, and check again:

[simterm]

$ kubectl get pod -l app=hello-pod
NAME                           READY   STATUS        RESTARTS   AGE
hello-deploy-dd584d88d-vv5bb   0/1     Terminating   0          51s
hello-deploy-dd584d88d-ws2xp   0/1     Terminating   0          51s

[/simterm]

Both pods are killed, and only after that are the new pods created:

[simterm]

$ kubectl get pod -l app=hello-pod
NAME                           READY   STATUS    RESTARTS   AGE
hello-deploy-d6c989569-c67vt   1/1     Running   0          27s
hello-deploy-d6c989569-n7ktz   1/1     Running   0          27s

[/simterm]

Rolling Update

RollingUpdate is a bit more interesting: here, Kubernetes starts new pods in parallel with the old ones, then kills the old version and leaves only the new one. As a result, both the old and the new versions of an application are running for some time during the deploy. This is the default strategy for Deployments.

With this approach, we get zero downtime, because both versions are serving during an update.

Still, there are situations where this approach cannot be applied. For example, if pods run MySQL migrations at startup that change the database schema in a way the old application version cannot work with.

An example of such a deployment:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-deploy
spec:
  replicas: 2
  selector:
    matchLabels:
      app: hello-pod
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0
      maxSurge: 1
  template:
    metadata:
      labels:
        app: hello-pod
        version: "1.0"
    spec:
      containers:
      - name: hello-pod
        image: nginxdemos/hello
        ports:
        - containerPort: 80

Here, we’ve changed strategy.type: Recreate to type: RollingUpdate and added two optional fields that define the Deployment’s behavior during an update:

maxUnavailable: how many pods out of replicas can be killed to make room for new ones. Can be set as a number or a percentage.

maxSurge: how many pods can be created above the replicas value. Can be set as a number or a percentage.

In the sample above, maxUnavailable is set to zero, i.e. we don’t want to stop any existing pods until new ones have started, and maxSurge is set to 1, so during an update Kubernetes creates one additional pod and, once it becomes Ready, drops one old pod.
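To make the effect of these two fields concrete, here is a minimal Python sketch that computes how many pods may exist during an update. The helper names are hypothetical; the rounding rules (percentages of maxUnavailable round down, percentages of maxSurge round up) and the 25% defaults follow the Kubernetes documentation.

```python
import math


def resolve(value, replicas, round_up):
    """Turn an absolute number or a percent string such as "25%" into a pod count."""
    if isinstance(value, str) and value.endswith("%"):
        exact = replicas * int(value[:-1]) / 100
        return math.ceil(exact) if round_up else math.floor(exact)
    return int(value)


def rolling_update_bounds(replicas, max_unavailable, max_surge):
    """Return (minimum available pods, maximum total pods) during an update."""
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    surge = resolve(max_surge, replicas, round_up=True)
    return replicas - unavailable, replicas + surge


# Values from the manifest above: never fewer than 2 pods, never more than 3.
print(rolling_update_bounds(2, 0, 1))            # (2, 3)
# The defaults (25% / 25%) applied to 10 replicas.
print(rolling_update_bounds(10, "25%", "25%"))   # (8, 13)
```

With maxUnavailable: 0 the lower bound equals replicas, which is why no old pod is removed before a new one is ready.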

Deploy with the 1.0 version:

[simterm]

$ kubectl apply -f deployment.yaml
deployment.apps/hello-deploy created

[/simterm]

Two pods are starting:

[simterm]

$ kubectl get pod -l app=hello-pod
NAME                           READY   STATUS              RESTARTS   AGE
hello-deploy-dd584d88d-84qk4   0/1     ContainerCreating   0          3s
hello-deploy-dd584d88d-cgc5v   1/1     Running             0          3s

[/simterm]

Update the version to 2.0, deploy it again, and check pods:

[simterm]

$ kubectl get pod -l app=hello-pod
NAME                           READY   STATUS              RESTARTS   AGE
hello-deploy-d6c989569-dkz7d   0/1     ContainerCreating   0          3s
hello-deploy-dd584d88d-84qk4   1/1     Running             0          55s
hello-deploy-dd584d88d-cgc5v   1/1     Running             0          55s

[/simterm]

We got one additional pod on top of the replicas value, as allowed by maxSurge: 1.

Kubernetes Canary Deployment

The Canary type means creating new pods in parallel with the old ones, in the same way as the Rolling Update does, but it gives a bit more control over the update process.

After a new version of an application is started, part of the new requests is routed to it, while the rest keep going to the old version.

If the new version works well, the rest of the users are switched to it and the old pods are deleted.

The Canary type isn’t one of the .spec.strategy.type values, but it can be implemented with plain Kubernetes, without any additional controllers.

Such a solution will be rudimentary and cumbersome to manage, though.

Still, we can do it by creating two Deployments with different versions of an application, both served by one Service whose selector matches the labels of both.

So, let’s create such Deployments and a Service of the LoadBalancer type.

In Deployment-1 set replicas: 2, and in Deployment-2 set replicas: 0:

apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-deploy-1
spec:
  replicas: 2
  selector:
    matchLabels:
      app: hello-pod
  template:
    metadata:
      labels:
        app: hello-pod
        version: "1.0"
    spec:
      containers:
      - name: hello-pod
        image: nginxdemos/hello
        ports:
        - containerPort: 80
        lifecycle:
          postStart:
            exec:
              command: ["/bin/sh", "-c", "echo 1 > /usr/share/nginx/html/index.html"]
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hello-deploy-2
spec:
  replicas: 0
  selector:
    matchLabels:
      app: hello-pod
  template:
    metadata:
      labels:
        app: hello-pod
        version: "2.0"
    spec:
      containers:
      - name: hello-pod
        image: nginxdemos/hello
        ports:
        - containerPort: 80
        lifecycle:
          postStart:
            exec:
              command: ["/bin/sh", "-c", "echo 2 > /usr/share/nginx/html/index.html"]
---
apiVersion: v1
kind: Service
metadata:
  name: hello-svc
spec:
  type: LoadBalancer
  ports:
  - port: 80
    targetPort: 80
    protocol: TCP
  selector:
    app: hello-pod

With the postStart hook, we overwrite NGINX’s index file so we can see which pod served a request.

Deploy it:

[simterm]

$ kubectl apply -f deployment.yaml
deployment.apps/hello-deploy-1 created
deployment.apps/hello-deploy-2 created
service/hello-svc created

[/simterm]

Check pods:

[simterm]

$ kubectl get pod -l app=hello-pod
NAME                             READY   STATUS    RESTARTS   AGE
hello-deploy-1-dd584d88d-25rbx   1/1     Running   0          71s
hello-deploy-1-dd584d88d-9xsng   1/1     Running   0          71s

[/simterm]

Currently, the Service will route all the traffic to pods from the Deployment-1:

[simterm]

$ curl adb469658008c41cd92a93a7adddd235-1170089858.us-east-2.elb.amazonaws.com
1
$ curl adb469658008c41cd92a93a7adddd235-1170089858.us-east-2.elb.amazonaws.com
1

[/simterm]

Now, we can update the Deployment-2 and set replicas: 1:

[simterm]

$ kubectl patch deployment.v1.apps/hello-deploy-2 -p '{"spec":{"replicas": 1}}'

deployment.apps/hello-deploy-2 patched

[/simterm]

We’ve got the pods running with the same label app=hello-pod:

[simterm]

$ kubectl get pod -l app=hello-pod
NAME                             READY   STATUS    RESTARTS   AGE
hello-deploy-1-dd584d88d-25rbx   1/1     Running   0          3m2s
hello-deploy-1-dd584d88d-9xsng   1/1     Running   0          3m2s
hello-deploy-2-d6c989569-x2lsb   1/1     Running   0          6s

[/simterm]

Now our Service routes roughly two thirds of the traffic (two of the three pods behind it) to the pods from Deployment-1, and the rest to the pod from Deployment-2:

[simterm]

$ curl adb***858.us-east-2.elb.amazonaws.com
1
$ curl adb***858.us-east-2.elb.amazonaws.com
1
$ curl adb***858.us-east-2.elb.amazonaws.com
1
$ curl adb***858.us-east-2.elb.amazonaws.com
1
$ curl adb***858.us-east-2.elb.amazonaws.com
1
$ curl adb***858.us-east-2.elb.amazonaws.com
1
$ curl adb***858.us-east-2.elb.amazonaws.com
2
$ curl adb***858.us-east-2.elb.amazonaws.com
1
$ curl adb***858.us-east-2.elb.amazonaws.com
2

[/simterm]
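The split follows from the pod counts. Assuming requests are spread evenly over all ready pods behind the Service (an assumption; real load balancers and long-lived connections can skew it), each version receives a share proportional to its number of pods. A quick Python sketch, where expected_share is a hypothetical helper:

```python
def expected_share(pods_per_version):
    """Expected fraction of requests per version, assuming even spreading over pods."""
    total = sum(pods_per_version.values())
    return {version: count / total for version, count in pods_per_version.items()}


# Two pods from Deployment-1 and one pod from Deployment-2, as in the example above.
shares = expected_share({"1.0": 2, "2.0": 1})
for version, share in shares.items():
    print(f"version {version}: {share:.0%}")
```

This also shows the main limitation of the approach: the traffic ratio can only be changed by changing the number of pods.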

Once we have checked that version 2.0 works and produces no errors, we can delete the old version and scale the new one up to 2 pods.

Kubernetes Blue/Green Deployment

In the same way, we can build a kind of Blue/Green deployment: an old (“green”) version and a new (“blue”) version run side by side, but all traffic is sent to only one of them. If we get errors on the new version, we can easily switch traffic back to the previous one.

To do so, let’s update the .spec.selector field of the Service to choose pods only from the first, “green”, Deployment by using the version label:

...
  selector:
    app: hello-pod
    version: "1.0"

Redeploy and check:

[simterm]

$ curl adb***858.us-east-2.elb.amazonaws.com
1
$ curl adb***858.us-east-2.elb.amazonaws.com
1

[/simterm]

Now, change the selector to version: "2.0" to switch traffic to the “blue” version:

[simterm]

$ kubectl patch services/hello-svc -p '{"spec":{"selector":{"version": "2.0"}}}'

service/hello-svc patched

[/simterm]

Check it:

[simterm]

$ curl adb***858.us-east-2.elb.amazonaws.com
2
$ curl adb***858.us-east-2.elb.amazonaws.com
2

[/simterm]
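The switch works because a Service sends traffic only to pods whose labels contain every key/value pair of its selector. Below is a minimal Python sketch of that matching rule; the pod names are hypothetical and the data is a plain dictionary rather than a real cluster query.

```python
# Hypothetical pods with the labels used in the manifests above.
pods = {
    "hello-deploy-1-a": {"app": "hello-pod", "version": "1.0"},
    "hello-deploy-1-b": {"app": "hello-pod", "version": "1.0"},
    "hello-deploy-2-a": {"app": "hello-pod", "version": "2.0"},
}


def matches(selector, labels):
    """A selector matches when each of its key/value pairs is present in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def endpoints(selector):
    """Names of the pods that a Service with this selector would route to."""
    return sorted(name for name, labels in pods.items() if matches(selector, labels))


print(endpoints({"app": "hello-pod"}))                     # canary: all three pods
print(endpoints({"app": "hello-pod", "version": "1.0"}))   # only the old version
print(endpoints({"app": "hello-pod", "version": "2.0"}))   # only the new version
```

Adding the version key to the selector is what turns the shared canary Service into an all-or-nothing switch.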

Once everything is working, we can drop the old version, and the “blue” one becomes “green”.

When implementing Canary and Blue-Green deployments in the ways described above, we run into a bunch of issues: we need to manage several Deployments, track their versions and statuses, check for errors, and so on.

Instead, we can do the same with Istio or ArgoCD.

Istio will be discussed later. For now, let’s take a look at how this can be done with ArgoCD.

Deployment and ReplicaSet

Before going forward, let’s see how Deployments and their updates work in Kubernetes.

A Deployment is a Kubernetes resource type in which we describe a template for creating new pods.

When such a Deployment is created, it creates a ReplicaSet object under the hood, which manages the pods of this Deployment.

During a Deployment update, it creates another ReplicaSet with the new configuration, and this ReplicaSet in turn creates the new pods.

Each pod created by a Deployment has a link to its ReplicaSet, and this ReplicaSet has a link to the corresponding Deployment.

Check the pod:

[simterm]

$ kubectl describe pod hello-deploy-d6c989569-96gqc
Name:           hello-deploy-d6c989569-96gqc
...
Labels:         app=hello-pod
...
Controlled By:  ReplicaSet/hello-deploy-d6c989569
...

[/simterm]

This pod is Controlled By: ReplicaSet/hello-deploy-d6c989569. Check this ReplicaSet:

[simterm]

$ kubectl describe replicaset hello-deploy-d6c989569
...
Controlled By:  Deployment/hello-deploy
...

[/simterm]

Here is our Deployment – Controlled By: Deployment/hello-deploy.
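The two describe calls above walk the ownership chain by hand. As an illustration only, the same walk can be sketched in Python over a hard-coded map of "Controlled By" links taken from the output above; owner_chain is a hypothetical helper and does not talk to a cluster.

```python
# "Controlled By" links copied from the kubectl describe output above.
controlled_by = {
    ("Pod", "hello-deploy-d6c989569-96gqc"): ("ReplicaSet", "hello-deploy-d6c989569"),
    ("ReplicaSet", "hello-deploy-d6c989569"): ("Deployment", "hello-deploy"),
    ("Deployment", "hello-deploy"): None,  # a Deployment has no controller above it here
}


def owner_chain(kind, name):
    """Follow the links from an object up to its top-level owner."""
    chain = [(kind, name)]
    while controlled_by.get(chain[-1]) is not None:
        chain.append(controlled_by[chain[-1]])
    return chain


chain = owner_chain("Pod", "hello-deploy-d6c989569-96gqc")
print(" -> ".join(f"{kind}/{name}" for kind, name in chain))
```

The chain always has the same shape for pods created by a Deployment: Pod, then ReplicaSet, then Deployment.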

And the ReplicaSet template for pods is just the content of the spec.template of its Deployment:

[simterm]

$ kubectl describe replicaset hello-deploy-2-8878b4b
...
Pod Template:
  Labels:  app=hello-pod
           pod-template-hash=8878b4b
           version=2.0
  Containers:
   hello-pod:
    Image:        nginxdemos/hello
    Port:         80/TCP
    Host Port:    0/TCP
    Environment:  <none>
    Mounts:       <none>
  Volumes:        <none>
Events:           <none>

[/simterm]

Now, let’s go to the Argo Rollouts.

Argo Rollouts

Documentation – https://argoproj.github.io/argo-rollouts.

Argo Rollouts is a Kubernetes controller plus a set of Custom Resource Definitions that together enable more advanced deployment strategies in Kubernetes.

It can be used on its own or integrated with Ingress Controllers such as NGINX and the AWS ALB Controller, or with Service Mesh solutions like Istio.

During a deployment, Argo Rollouts can run checks against the new version of an application and roll back in case of issues.

To use Argo Rollouts instead of a Deployment, we create a resource of a new kind, Rollout, where spec.strategy defines the deployment type and its parameters, for example:

...
spec:
  replicas: 5
  strategy:
    canary:
      steps:
      - setWeight: 20
...

The rest of its fields are the same as in a regular Kubernetes Deployment.

In the same way as a Deployment, a Rollout uses ReplicaSets to spin up new pods.

After installing Argo Rollouts, you can still use standard Deployments with their spec.strategy alongside the new Rollouts. You can also easily migrate existing Deployments to Rollouts, see Convert Deployment to Rollout.

See also Architecture, Rollout Specification, and Updating a Rollout.

Install Argo Rollouts

Create a dedicated Namespace:

[simterm]

$ kubectl create namespace argo-rollouts
namespace/argo-rollouts created

[/simterm]

Deploy the necessary Kubernetes CRDs, ServiceAccount, ClusterRoles, and Deployment from the manifest file:

[simterm]

$ kubectl apply -n argo-rollouts -f https://raw.githubusercontent.com/argoproj/argo-rollouts/stable/manifests/install.yaml

[/simterm]

Later, when we install it in Production, the Argo Rollouts Helm chart can be used instead.

Check a pod – it’s the Argo Rollouts controller:

[simterm]

$ kubectl -n argo-rollouts get pod
NAME                             READY   STATUS    RESTARTS   AGE
argo-rollouts-6ffd56b9d6-7h65n   1/1     Running   0          30s

[/simterm]

kubectl plugin

Install the Argo Rollouts plugin for kubectl:

[simterm]

$ curl -LO https://github.com/argoproj/argo-rollouts/releases/latest/download/kubectl-argo-rollouts-linux-amd64
$ chmod +x ./kubectl-argo-rollouts-linux-amd64
$ sudo mv ./kubectl-argo-rollouts-linux-amd64 /usr/local/bin/kubectl-argo-rollouts

[/simterm]

Check it:

[simterm]

$ kubectl argo rollouts version
kubectl-argo-rollouts: v1.0.0+912d3ac
  BuildDate: 2021-05-19T23:56:53Z
  GitCommit: 912d3ac0097a5fc24932ceee532aa18bcc79944d
  GitTreeState: clean
  GoVersion: go1.16.3
  Compiler: gc
  Platform: linux/amd64

[/simterm]

Deploying an application

Deploy a test application:

[simterm]

$ kubectl apply -f https://raw.githubusercontent.com/argoproj/argo-rollouts/master/docs/getting-started/basic/rollout.yaml
rollout.argoproj.io/rollouts-demo created

[/simterm]

Check it:

[simterm]

$ kubectl get rollouts rollouts-demo -o yaml
apiVersion: argoproj.io/v1alpha1
kind: Rollout
...
spec:
  replicas: 5
  revisionHistoryLimit: 2
  selector:
    matchLabels:
      app: rollouts-demo
  strategy:
    canary:
      steps:
      - setWeight: 20
      - pause: {}
      - setWeight: 40
      - pause:
          duration: 10
      - setWeight: 60
      - pause:
          duration: 10
      - setWeight: 80
      - pause:
          duration: 10
  template:
    metadata:
      creationTimestamp: null
      labels:
        app: rollouts-demo
    spec:
      containers:
      - image: argoproj/rollouts-demo:blue
        name: rollouts-demo
        ports:
        - containerPort: 8080
          name: http
          protocol: TCP
...

[/simterm]

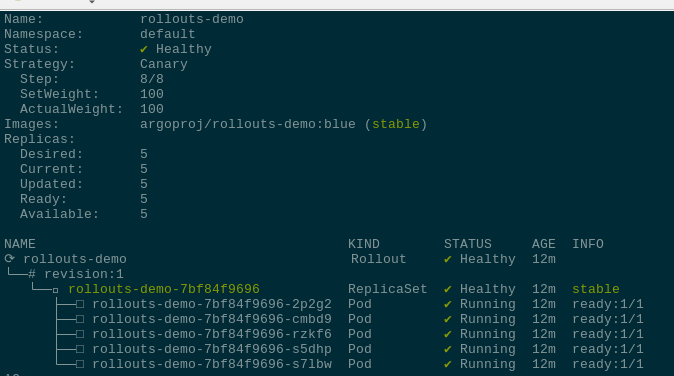

Here, spec.strategy uses the Canary deployment type, which upgrades the pods in a series of steps: first, 20% of the existing pods are replaced with the new version, then the rollout pauses so we can check that they are working, then 40% are updated, followed by another pause, and so on until all pods are upgraded. The first pause: {} has no duration, so the rollout waits there until it is promoted manually, while the later pauses last 10 seconds each.
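To make the steps concrete, here is a small Python sketch of how many of the 5 replicas run the new version after each setWeight step. The canary_pods helper is hypothetical, and rounding up is an assumption for weights that do not divide evenly; for the weights in this manifest every result is exact, so the rounding does not matter here.

```python
def canary_pods(replicas, weight):
    """Number of new-version pods for a weight in percent, rounded up (assumption)."""
    return -(-replicas * weight // 100)  # integer ceiling division


replicas = 5
steps = [20, 40, 60, 80]  # the setWeight values from the Rollout above

for weight in steps:
    new = canary_pods(replicas, weight)
    print(f"setWeight {weight}: {new} new, {replicas - new} old")
```

So each step of this Rollout moves exactly one more pod to the new version, and the final promotion replaces the last old pod.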

By using the plugin installed above, we can add the --watch argument to see the upgrade process in real-time:

[simterm]

$ kubectl argo rollouts get rollout rollouts-demo --watch

[/simterm]

Install a test Service:

[simterm]

$ kubectl apply -f https://raw.githubusercontent.com/argoproj/argo-rollouts/master/docs/getting-started/basic/service.yaml
service/rollouts-demo created

[/simterm]

And make an update by setting a new image version:

[simterm]

$ kubectl argo rollouts set image rollouts-demo rollouts-demo=argoproj/rollouts-demo:yellow

[/simterm]

Check the progress in the terminal where kubectl argo rollouts get rollout rollouts-demo --watch is running.