We are using AWS VPC DNS and sometimes facing with errors like “php_network_getaddresses: getaddrinfo failed: Temporary failure in name resolution“.

We are using AWS VPC DNS and sometimes facing with errors like “php_network_getaddresses: getaddrinfo failed: Temporary failure in name resolution“.

The only advice from AWS tech. support was to configure a local dnsmasq service to act as a local DNS cache, but I did this already year ago and this issue happens once in 1-2-3 months.

Although this post is more about dnsmasq configuration – a couple of possible causes at this moment:

- a local

dnsmasqfails in some reasons and goes down, then all requests are sent to an AWS VPC DNS service and fill the hard-limit in 1024 requests per second, it fails too and we have our error - maybe reached an EC2’s network interface packages per second limit, see the following posts: Packets-per-second limits in EC2, EC2 Packets per Second: Guaranteed Throughput vs Best Effort

The second one seems not too real for me at this moment, and to debug the dnsmasq – let’s take a look at its logging and debugging options to see if we have any issues there, for example with cache size.

Contents

Prometheus dnsmasq metrics

The very first thing we did after agreed with the assumption that the issue can be caused by the failure of the dnsmasq – added it under our Prometheus monitoring with en dnsmasq_exporter service.

The exporter collects next metrics:

...

Question: []dns.Question{

question("cachesize.bind."),

question("insertions.bind."),

question("evictions.bind."),

question("misses.bind."),

question("hits.bind."),

question("auth.bind."),

question("servers.bind."),

},

...

You can grab them yourself using Chaos DNS request type (see DNS classes) with the dig utility.

For example, to get DNS upstream servers list which will be used by our dnsmasq to forward requests too if a domain wasn’t found in the local dnsmasq‘s cache:

[simterm]

root@bttrm-production-console:/home/admin# dig @localhost +short chaos txt servers.bind "10.0.3.2#53 9301 0" "1.1.1.1#53 402 0"

[/simterm]

And check local resolv.conf:

[simterm]

root@bttrm-production-console:/home/admin# cat /etc/resolv.conf domain us-east-2.compute.internal search us-east-2.compute.internal nameserver 127.0.0.1 nameserver 10.0.3.2 nameserver 1.1.1.1

[/simterm]

Well, that’s it – dnsmasq uses them: 10.0.3.2 – is a VPC DNS, 1.1.1.1 – CloudFlare DNS for fallback in case if VPC DNS did not respond (although this didn’t help too much as we can see).

The data here are:

cachesize.bind: cache size (how many domains will be kept in the cache)insertions.bind: number of domains added to the cacheevictions.bind: number of domains removed from the cache (because of a domain’s TTL or if the cache is exhausted)misses.bind: number of requests which wasn’t found in the cache and were forwarded to upstream servershits.bind: number of requests served by thednsmasqfrom its cacheauth.bind: number of requests to authority zones (dnsmasqcan be used as an SOA host)servers.bind: already reviewed above – servers to forward unknown domains to

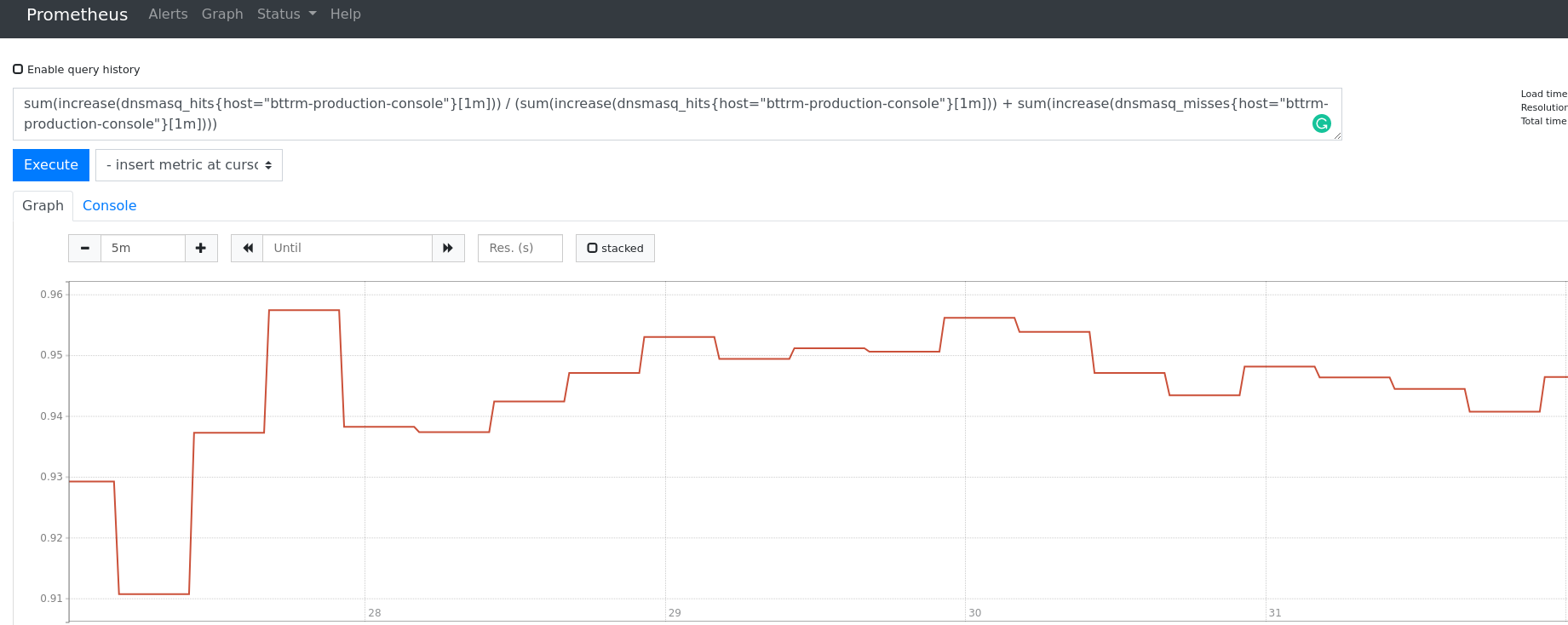

Using those metrics we can build a graph, for example – hit ratio between overall requests and requests, served by the dnsmasq‘s cache:

sum(increase(dnsmasq_hits[1m])) / (sum(increase(dnsmasq_hits[1m])) + sum(increase(dnsmasq_misses[1m])))

Or by one host:

sum(increase(dnsmasq_hits{host="bttrm-production-console"}[1m])) / (sum(increase(dnsmasq_hits{host="bttrm-production-console"}[1m])) + sum(increase(dnsmasq_misses{host="bttrm-production-console"}[1m])))

Here we respond to 98 from 100 requests by using cache – not bad.

dnsmasq debugging

The dnsmasq can be started in debugging with -d (–no-daemon) and -q (–log-queries) options:

[simterm]

$ sudo dnsmasq -q -d dnsmasq: started, version 2.80 cachesize 150 dnsmasq: compile time options: IPv6 GNU-getopt DBus i18n IDN2 DHCP DHCPv6 no-Lua TFTP conntrack ipset auth DNSSEC loop-detect inotify dumpfile dnsmasq: reading /etc/resolv.conf dnsmasq: using nameserver 188.190.254.254#53 dnsmasq: using nameserver 31.43.120.254#53 dnsmasq: using nameserver 1.1.1.1#53 dnsmasq: read /etc/hosts - 2 addresses

[/simterm]

Check it:

[simterm]

$ dig @localhost ya.ru +short 87.250.250.242

[/simterm]

Check its output:

[simterm]

... dnsmasq: query[A] ya.ru from ::1 dnsmasq: forwarded ya.ru to 188.190.254.254 dnsmasq: forwarded ya.ru to 31.43.120.254 dnsmasq: forwarded ya.ru to 1.1.1.1 dnsmasq: reply ya.ru is 87.250.250.242 ...

[/simterm]

Repeat the same request again – and again check STDOUT:

[simterm]

... dnsmasq: query[A] ya.ru from ::1 dnsmasq: cached ya.ru is 87.250.250.242 ...

[/simterm]

On the first attempt, the ya.ru domain wasn’t found in the cache and dnsmasq forwarded it to its upstream servers.

On the second request – value was returned from the cache.

You can also add extra value to the log-queries option to add a serial number (actually – it will be an internal port) to each request – can be useful when you have a lot of records:

[simterm]

$ sudo dnsmasq --log-queries=extra -d dnsmasq: started, version 2.80 cachesize 150 dnsmasq: compile time options: IPv6 GNU-getopt DBus i18n IDN2 DHCP DHCPv6 no-Lua TFTP conntrack ipset auth DNSSEC loop-detect inotify dumpfile dnsmasq: reading /etc/resolv.conf dnsmasq: using nameserver 188.190.254.254#53 dnsmasq: using nameserver 31.43.120.254#53 dnsmasq: using nameserver 1.1.1.1#53 dnsmasq: read /etc/hosts - 2 addresses dnsmasq: 1 ::1/49217 query[A] ya.ru from ::1 dnsmasq: 1 ::1/49217 forwarded ya.ru to 188.190.254.254 dnsmasq: 1 ::1/49217 forwarded ya.ru to 31.43.120.254 dnsmasq: 1 ::1/49217 forwarded ya.ru to 1.1.1.1 dnsmasq: 1 ::1/49217 reply ya.ru is 87.250.250.242 dnsmasq: 2 ::1/42356 query[A] ya.ru from ::1 dnsmasq: 2 ::1/42356 cached ya.ru is 87.250.250.242

[/simterm]

dnsmasq logging

Besides the monitoring – we also configured our Loki to collect dnsmasq‘s (see the Grafana Labs: Loki – logs collecting and monitoring system post).

The logging option is log-facility where you can set a syslog‘s channel: by default DAEMON and LOCAL0 if using -d (–no-daemon), see the syslog facility.

Also, instead of using syslog you can just set a log-file to be used:

log-facility="/var/log/dnsmasq.log"

And add log-queries if you want to see all the requests served.

Check the log-async if you have a lot of logs passed via the syslog.

resolv.conf and resolv.dnsmasq

Maybe a good idea to move out upstream-servers from a hosts’ resolv.conf and leave only dnsmasq‘s address there to make it the only one DNS for a system.

Also, in this way you can have a dynamic resolv.conf, for example, if it is periodically updated by the NetworkManager.

So, update you resolv.conf:

[simterm]

root@bttrm-production-console:/home/admin# cat /etc/resolv.conf domain us-east-2.compute.internal search us-east-2.compute.internal nameserver 127.0.0.1

[/simterm]

Now, create a new file called resolv.dnsmasq and set upstreams here:

[simterm]

root@bttrm-production-console:/home/admin# cat /etc/resolv.dnsmasq nameserver 10.0.3.2 nameserver 1.1.1.1

[/simterm]

Next, update the /etc/dnsmasq.conf config and add the --resolv-file="/etc/resolv.dnsmasq" line:

... resolv-file="/etc/resolv.dnsmasq" ...

Check the config’s syntax:

[simterm]

root@bttrm-production-console:/home/admin# dnsmasq --test dnsmasq: syntax check OK.

[/simterm]

--servers

Another solution could be to set upstream servers directly in the dnsmasq‘s config instead of using /etc/resolv.dnsmasq by adding the server option:

server=10.0.3.2 server=1.1.1.1

Also, in this way you can specify a dedicated DNS server for a specific domain.

For example, to make a resolution for the backend-db2-slave.example.com domain exclusively via the VPC DNS 10.0.3.2 – set it as the following:

... server=/backend-db2-slave.example.com/10.0.3.2 ...

Still, dnsmasq will check for the resolv.conf updates. To disable it – add the no-resolv option.

--strict-order

By default, dnsmasq forwards requests to all upstream-servers, and the first one who will reply – will become a primary – dnsmasq will try to use it at first for next forwards.

This can be any server from the resolv.conf, resolv.dnsmasq or from the server in the dnsmasq‘s config file.

To make dnsmasq use servers exactly as they are added in configs – add the strict-order option.

dnsmasq cache size recommended

And the most interesting question: what is the best cache size for te dnsmasq?

By default, it has 150 records limit, maximum – 10000.

Can be adjusted with the cachesize option:

cachesize=1000

But still – do we need to increase it?

SIGUSR1

To check if you have enough cache size now you can dump dnsmasq‘s data from the moment of its start by using the SIGUSR1 signal:

[simterm]

root@bttrm-production-console:/home/admin# pkill -USR1 dnsmasq && cat /var/log/syslog | grep dns | tail -6 Oct 26 10:53:45 localhost dnsmasq[30433]: time 1572076425 Oct 26 10:53:45 localhost dnsmasq[30433]: cache size 150, 0/45516 cache insertions re-used unexpired cache entries. Oct 26 10:53:45 localhost dnsmasq[30433]: queries forwarded 22241, queries answered locally 633009 Oct 26 10:53:45 localhost dnsmasq[30433]: queries for authoritative zones 0 Oct 26 10:53:45 localhost dnsmasq[30433]: server 10.0.3.2#53: queries sent 11352, retried or failed 0 Oct 26 10:53:45 localhost dnsmasq[30433]: server 1.1.1.1#53: queries sent 46, retried or failed 0

[/simterm]

And here you can seeВ котором мы видимо невероятно много полезной информации:

- time 1572076425 – time since the service started in UNIX epoch format

- cache size 150, 0/45516 cache insertions re-used unexpired cache entries – is exactly what we are looking for: if new records added will push out records already present in the cache and which still have no TTL expired – this is the signal, that cache size is now enough

- queries forwarded 22241, queries answered locally 633009 – a number of the requests which wasn’t found in the cache and forwarded to an upstream server (the value is taken from the

misses.bind), and number of requests which was served by thednsmasq‘s cache (thehits.bind‘s value) - queries for authoritative zones – if using

dnsmasqas SOA – number of requests to those zones - server 10.0.3.2#53: queries sent 11352, retried or failed 0 – is the most interesting line here – in theory, this will help us to see if we have issues on the AWS VPC DNS side when the another “php_network_getaddresses: getaddrinfo failed: Temporary failure in name resolution” error will happen next time

AWS “Temporary failure in name resolution”

By the time of this post writing – we got our lovely errors again.

It looks like the next (dnsmasq‘s metrics):

But still, in its statistic – everything is just perfect:

[simterm]

root@bttrm-production-console:/home/admin# pkill -USR1 dnsmasq && cat /var/log/syslog | grep dns | tail -6 Oct 26 11:12:36 localhost dnsmasq[30433]: time 1572077556 Oct 26 11:12:36 localhost dnsmasq[30433]: cache size 150, 0/49227 cache insertions re-used unexpired cache entries. Oct 26 11:12:36 localhost dnsmasq[30433]: queries forwarded 24327, queries answered locally 724600 Oct 26 11:12:36 localhost dnsmasq[30433]: queries for authoritative zones 0 Oct 26 11:12:36 localhost dnsmasq[30433]: server 10.0.3.2#53: queries sent 12949, retried or failed 0 Oct 26 11:12:36 localhost dnsmasq[30433]: server 1.1.1.1#53: queries sent 590, retried or failed 0

[/simterm]

No VPC DNS failures, no cache issues.

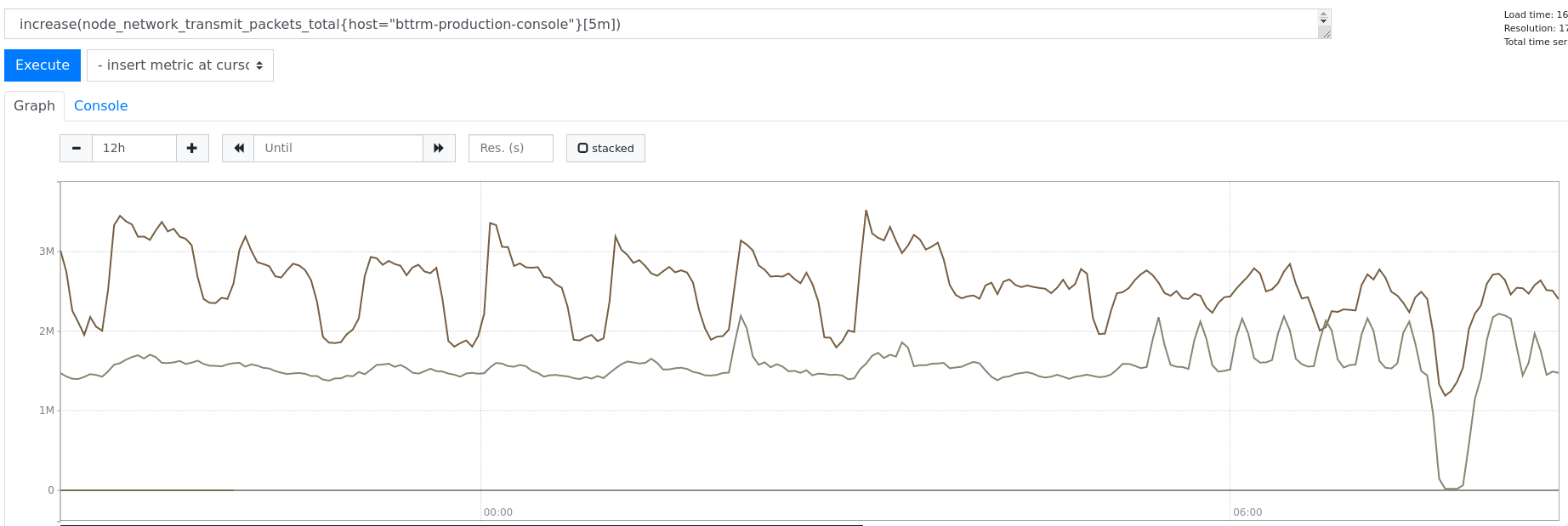

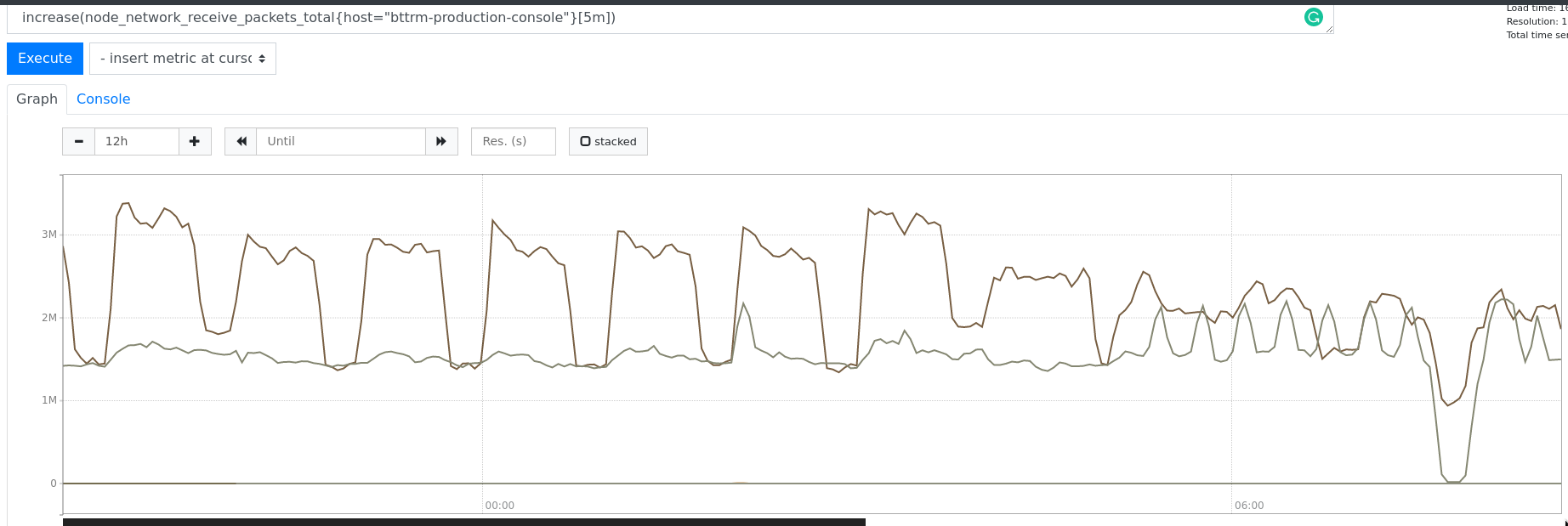

But I found, that number of packets sent dropped almost to zero (24 hours graph here):

And received:

Not sure yet what this means.

And why are all services operating normally? We saw the only DNS errors…

Well… no idea for now, but now it looks more like the network problem then DNS in general or dnsmasq in particular.

Useful links

![]()