Istio is a Service Mesh solution that provides service discovery, load balancing, traffic control, canary rollouts and blue-green deployments, and traffic monitoring between microservices.

We will use Istio in our AWS Elastic Kubernetes Service for traffic monitoring, as an API Gateway service, for traffic policies, and for various deployment strategies.

In this post, we will discuss the Service Mesh concept in general, then go over the Istio architecture and components, its installation process, and how to run a test application.

Service Mesh

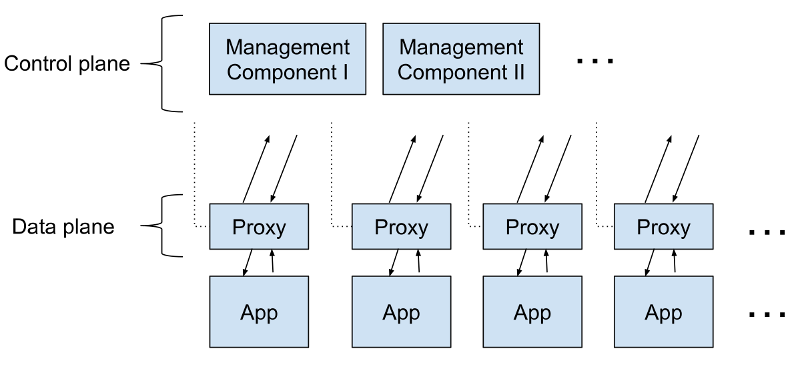

Essentially, a service mesh is a manager for proxy services. The proxy can be a system like NGINX, HAProxy, or Envoy that works on OSI Layer 7 and allows dynamic control of traffic and of communication between applications.

A service mesh handles discovery of new applications and services, load balancing, authentication, and traffic encryption.

To control traffic, a service mesh starts a proxy called a sidecar alongside each application or, in the case of Kubernetes, in each Pod.

Together, those sidecar containers are known as the Data Plane.

Another group of processes, called the Control Plane, configures and manages them. It is used for discovery of new applications, encryption key management, metrics collection and aggregation, and so on.

A service mesh can be represented with the following scheme:

Among the many service mesh solutions, I’d mention the following:

Read more (all links are in Russian):

- What is a Service Mesh?

- Service Mesh: what every Software Engineer needs to know about the most hyped technology

- What is a service mesh and why do I need one [for a cloud application with microservices]?

- Service mesh is still hard

Istio architecture

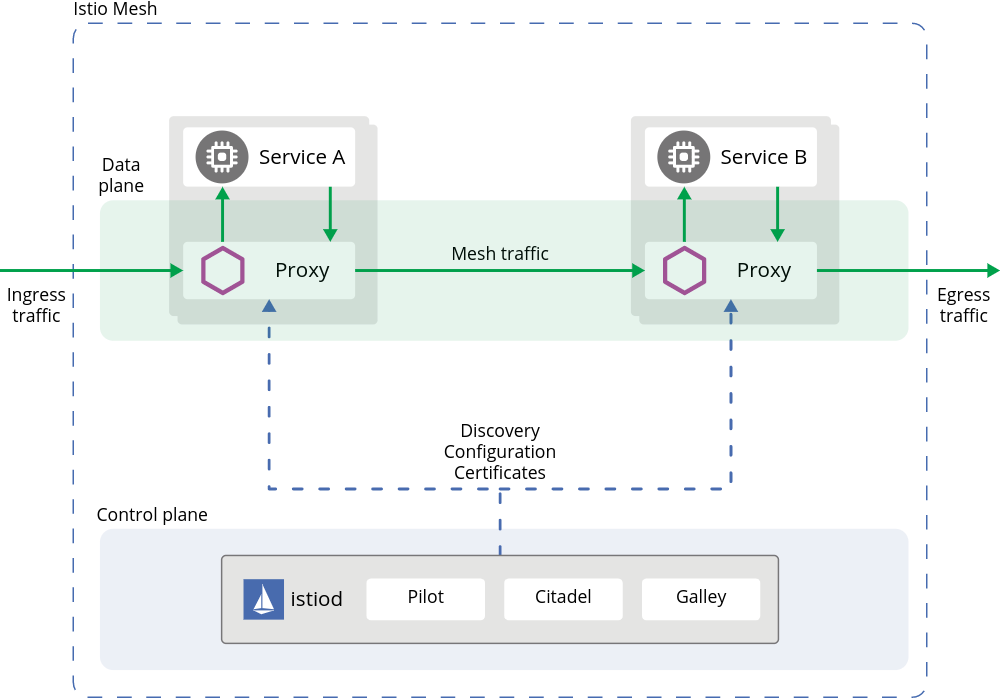

So, Istio as a service mesh consists of two main parts, the Data plane and the Control plane:

- Data plane (“data layer”): a collection of proxy services running as sidecar containers in each Kubernetes Pod, based on an extended Envoy proxy server. These sidecars connect and control traffic between applications, and collect and send metrics

- Control plane (“control layer”): manages and configures the sidecars, aggregates monitoring metrics, and manages TLS certificates

The Istio architecture can be represented with the following diagram:

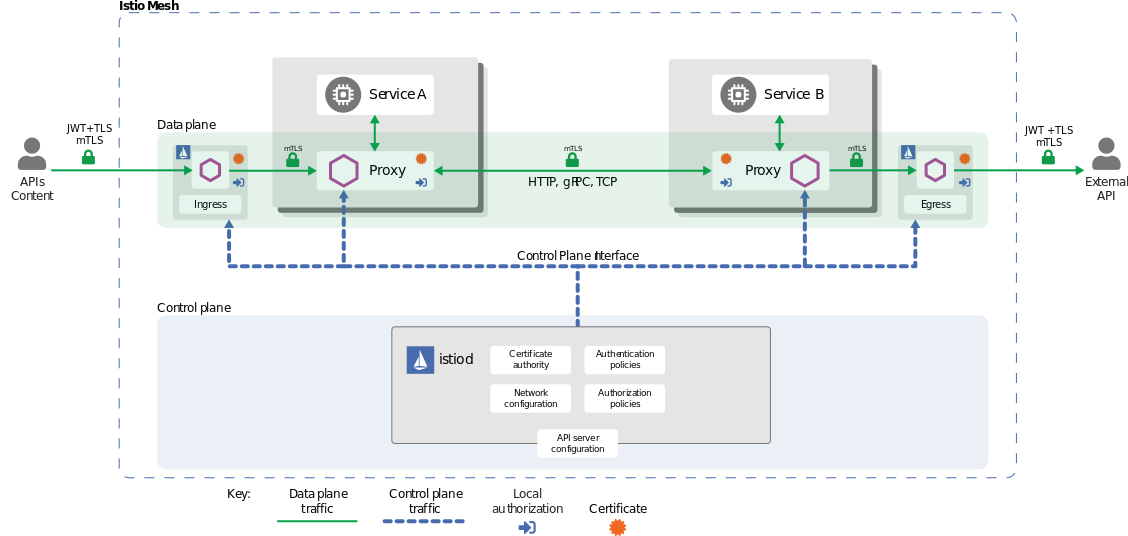

Or another one:

Control Plane

The Istio Control Plane includes four main components:

- Pilot: the central controller, responsible for communication with sidecars via the Envoy API. It reads the rules described in Istio manifests and sends them to the Envoy proxies to configure them. It is also used for service discovery, traffic control, routing, and network resiliency with timeouts and circuit breaking.

- Citadel: Identity and Access management, that is traffic encryption, authentication of users and services, and TLS key management. See Istio Security.

- Galley: configuration management; validates new configs and distributes them across the mesh

- Mixer: monitoring, metrics, logs, traffic control

Since Istio 1.5, Pilot, Citadel, and Galley are built into a single binary called istiod, while Mixer was deprecated and later removed. In addition, there are the Ingress and Egress Gateways.

Data Plane

Consists of sidecar containers that run alongside the application containers in Kubernetes Pods and are added either automatically or with istioctl kube-inject, see Installing the Sidecar.

These containers with Envoy proxy instances provide:

- Dynamic service discovery

- Load balancing

- TLS termination

- HTTP/2 and gRPC proxies

- Circuit breakers

- Health checks

- Deployment staged rollouts

- Rich metrics

Istio network model

Before running Istio, let’s take a brief look at the resources used to manage traffic.

During installation, Istio creates an Ingress Gateway (and an Egress Gateway, if this was enabled during the installation) and registers its own resource types in Kubernetes as Custom Resource Definitions (CRDs).

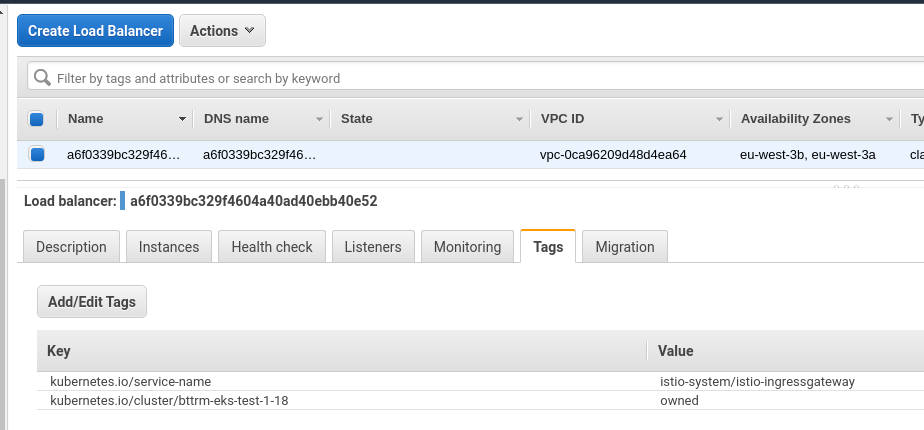

In AWS with default settings, the Ingress Gateway will create an AWS Classic LoadBalancer, because the Ingress Gateway is exposed with a Kubernetes Service of the LoadBalancer type.

“Behind” the Ingress Gateway, another resource is created: the Gateway, which is defined by a Kubernetes CRD added during installation and describes the hosts and ports that accept traffic through this Gateway.

Then another resource comes on the scene: the VirtualService, which describes how traffic coming through a Gateway is routed to Kubernetes Services, applying the Destination rules if they are defined.

The Ingress Gateway consists of two parts: a Kubernetes Pod with an Envoy instance that controls traffic, and a Kubernetes Service that accepts new connections.

In turn, the Gateway and VirtualService configure the Envoy proxy instance used as the Ingress Gateway controller.

So, in general, the traffic flow is the following:

- a packet arrives at an external Load Balancer and is sent to a TCP port on a Kubernetes WorkerNode

- there, the packet is sent to the Istio IngressGateway Service

- and is redirected to the Istio IngressGateway Pod

- the Envoy instance in this Pod is configured with a Gateway and a VirtualService

- the Gateway describes ports, protocols, and SSL certificates

- the VirtualService describes traffic routing to the Kubernetes Service of our application

- the Istio IngressGateway Pod sends the packet to the application’s Service

- and the Service routes the packet to an application’s Pod
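To make the two decisions in the middle of this flow more concrete, here is a minimal Python sketch of what the Envoy instance in the Ingress Gateway Pod does conceptually: first the Gateway decides whether the listener accepts the request, then the VirtualService picks a destination Service. This is only a simplified model under my own assumptions, not Istio code: the `GatewayServer`, `HttpRoute`, and `resolve` names are hypothetical, and real matching supports much more (wildcard domains, headers, weights, and so on).

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class GatewayServer:
    """Hypothetical model of one `servers` entry of a Gateway."""
    port: int
    hosts: List[str]  # "*" accepts any host


@dataclass
class HttpRoute:
    """Hypothetical model of one prefix-based HTTP route of a VirtualService."""
    prefix: str
    service: str
    service_port: int


def resolve(server: GatewayServer, routes: List[HttpRoute],
            host: str, port: int, path: str) -> Optional[Tuple[str, int]]:
    """Return (service, port) for a request, or None if nothing matches."""
    # Step 1: the Gateway decides whether this listener accepts the request.
    if port != server.port:
        return None
    if "*" not in server.hosts and host not in server.hosts:
        return None
    # Step 2: the VirtualService picks a destination; the first match wins.
    for route in routes:
        if path.startswith(route.prefix):
            return route.service, route.service_port
    return None


if __name__ == "__main__":
    server = GatewayServer(port=80, hosts=["*"])
    routes = [HttpRoute(prefix="/", service="test-svc", service_port=80)]
    print(resolve(server, routes, "example.com", 80, "/index.html"))
    print(resolve(server, routes, "example.com", 443, "/index.html"))
```

The last hop, from the Service to a Pod, is ordinary Kubernetes load balancing and is not modeled here.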

Running Istio in Kubernetes

Istio supports different Deployment models. In our case, we are using a single AWS Elastic Kubernetes Service cluster, and all pods are running in the same VPC network.

Also, there are different Config Profiles, each with a pre-configured set of components to install. Here, the interesting ones are default, which installs istiod and istio-ingressgateway; demo, which is similar but also installs the istio-egressgateway service; and preview, which lets you try new features that are not yet included in the main Istio release.

You can install Istio in various ways: with the istioctl utility, from manifest files, with Helm, or with Ansible.

It’s worth paying attention to Mutual TLS (mTLS), see the Permissive mode. In short: by default, Istio is installed in the Permissive mTLS mode, which allows existing applications to keep communicating over plaintext. Connections between Envoy proxies, however, will be made with TLS encryption.
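The difference between the modes can be sketched in a few lines of Python. This is only an illustration of the idea, not how Envoy implements it: the `accepts` function is hypothetical, and STRICT is the other mode name from Istio’s PeerAuthentication API, which is not covered in this post.

```python
from enum import Enum


class MtlsMode(Enum):
    PERMISSIVE = "PERMISSIVE"  # the default mode described above
    STRICT = "STRICT"          # assumption: the stricter alternative, not used in this post


def accepts(mode: MtlsMode, is_mtls: bool) -> bool:
    """Whether a sidecar in the given mode accepts an inbound connection."""
    if mode is MtlsMode.PERMISSIVE:
        return True   # both plaintext and mutual TLS connections are accepted
    return is_mtls    # STRICT: plaintext connections are rejected


if __name__ == "__main__":
    for mode in MtlsMode:
        for is_mtls in (False, True):
            print(mode.value, "mTLS" if is_mtls else "plaintext", accepts(mode, is_mtls))
```

This is why existing applications without sidecars keep working after the installation: their plaintext connections are still accepted.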

For now, let’s install Istio manually with istioctl; later, on the Dev and Production clusters, we will install it with Ansible and its helm module.

Download Istio:

[simterm]

$ curl -L https://istio.io/downloadIstio | sh -
$ cd istio-1.9.1/

[/simterm]

istioctl uses the ~/.kube/config file by default; you can specify your own with the --kubeconfig option, or select another context with --context.

The istioctl binary is located in the bin directory. Add it to the $PATH variable (Linux/macOS):

[simterm]

$ export PATH=$PWD/bin:$PATH

[/simterm]

Check if it’s working:

[simterm]

$ istioctl version
no running Istio pods in "istio-system"
1.9.1

[/simterm]

Generate a kubeconfig for the testing cluster, if needed (if you are using Minikube, it will be generated automatically):

[simterm]

$ aws eks update-kubeconfig --name bttrm-eks-test-1-18 --alias iam-bttrm-eks-root-role-kubectl@bttrm-eks-test-1-18
Added new context iam-bttrm-eks-root-role-kubectl@bttrm-eks-test-1-18 to /home/setevoy/.kube/config

[/simterm]

And install Istio with the default profile:

[simterm]

$ istioctl install --set profile=default -y
✔ Istio core installed
✔ Istiod installed
✔ Ingress gateways installed
✔ Installation complete

[/simterm]

Check versions again:

[simterm]

$ istioctl version
client version: 1.9.1
control plane version: 1.9.1
data plane version: 1.9.1 (1 proxies)

[/simterm]

And Istio pods:

[simterm]

$ kubectl -n istio-system get pod
NAME                                   READY   STATUS    RESTARTS   AGE
istio-ingressgateway-d45fb4b48-jsz9z   1/1     Running   0          64s
istiod-7475457497-6xskm                1/1     Running   0          77s

[/simterm]

Now, let’s deploy a test application and configure routing via the Istio Ingress Gateway.

Running test application

We will not use the default Bookinfo from the Istio Getting Started guide. Instead, let’s define our own Namespace, a Deployment with one NGINX pod, and a Service: I’d like to emulate already existing applications that need to be migrated under Istio control.

Also, for now we will not configure automated sidecar injection; we will come back to this later.

A manifest looks like the following:

    ---
    apiVersion: v1
    kind: Namespace
    metadata:
      name: test-namespace
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: test-deployment
      namespace: test-namespace
      labels:
        app: test
        version: v1
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: test
      template:
        metadata:
          labels:
            app: test
            version: v1
        spec:
          containers:
          - name: web
            image: nginx
            ports:
            - containerPort: 80
            resources:
              requests:
                cpu: 100m
                memory: 100Mi
            readinessProbe:
              httpGet:
                path: /
                port: 80
          nodeSelector:
            role: common-workers
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: test-svc
      namespace: test-namespace
    spec:
      selector:
        app: test
      ports:
      - name: http
        protocol: TCP
        port: 80
        targetPort: 80

It’s recommended to use the version label in applications, as later this will allow implementing canary and blue-green deployments, see How To Do Canary Deployments With Istio and Kubernetes and Traffic Management.
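To show why the version label matters, here is a small Python sketch of the idea behind a weighted canary split: each request is sent to one of the versions according to percentage weights. It is an illustration only, not Istio’s implementation. The `pick_version` function and the `v2` version are hypothetical, and the sketch assumes weights that sum to 100.

```python
import random
from typing import Dict, Optional


def pick_version(weights: Dict[str, int],
                 rng: Optional[random.Random] = None) -> str:
    """Pick a version label according to percentage weights that sum to 100."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    rng = rng or random.Random()
    roll = rng.randrange(100)  # integer in the range 0..99
    upper = 0
    for version, weight in weights.items():
        upper += weight
        if roll < upper:
            return version
    raise AssertionError("unreachable when weights sum to 100")


if __name__ == "__main__":
    rng = random.Random(42)
    counts = {"v1": 0, "v2": 0}
    for _ in range(1000):
        counts[pick_version({"v1": 90, "v2": 10}, rng)] += 1
    print(counts)  # roughly a 90/10 split
```

With a 90/10 split, about one request in ten reaches the Pods labeled with the new version, and the weights can then be shifted step by step.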

Deploy it:

[simterm]

$ kubectl apply -f test-istio.yaml
namespace/test-namespace created
deployment.apps/test-deployment created
service/test-svc created

[/simterm]

Check containers in the pod that we created:

[simterm]

$ kubectl -n test-namespace get pod -o jsonpath={.items[*].spec.containers[*].name}

web

[/simterm]

Okay, one container, as described in the Deployment above.

Using kubectl port-forward, connect to the Service:

[simterm]

$ kubectl -n test-namespace port-forward services/test-svc 8080:80
Forwarding from 127.0.0.1:8080 -> 80
Forwarding from [::1]:8080 -> 80

[/simterm]

And check if our application is working:

[simterm]

$ curl localhost:8080
<!DOCTYPE html>
<html>
<head>
<title>Welcome to nginx!</title>
...

[/simterm]

Nice, everything is working here.

Istio Ingress Gateway

So, at this moment we have an Istio Ingress Gateway created during Istio installation, which is represented by an AWS Classic LoadBalancer:

But if we try to access it right now, we will get an error, as it has no idea where to route traffic:

[simterm]

$ curl a6f***037.eu-west-3.elb.amazonaws.com
curl: (52) Empty reply from server

[/simterm]

To configure it, we need to add an Istio Gateway.

Gateway

The Gateway describes Istio Ingress Gateway config – which ports to use and which traffic to accept. Also, here you can perform SSL termination (but in this case, this is done by an AWS LoadBalancer).

Add a new resource to our test-istio.yaml – the Gateway:

    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: Gateway
    metadata:
      name: test-gateway
      namespace: test-namespace
    spec:
      selector:
        istio: ingressgateway
      servers:
      - port:
          number: 80
          name: http
          protocol: HTTP
        hosts:
        - "*"

In spec.selector.istio we specify the Istio Ingress Gateway to which this manifest will be applied.
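The selector works like any other Kubernetes label selector: the Gateway applies to the Pods whose labels contain every key/value pair from the selector. A minimal Python sketch of this matching is below. The `selector_matches` function is hypothetical, and the Ingress Gateway Pod labels are an assumption for illustration; check the real ones with kubectl -n istio-system get pod --show-labels.

```python
from typing import Dict


def selector_matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """True if every key/value pair of the selector is present in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


# Assumed labels, for illustration only.
ingress_pod_labels = {"app": "istio-ingressgateway", "istio": "ingressgateway"}
# Labels of our application Pod, taken from the Deployment manifest above.
app_pod_labels = {"app": "test", "version": "v1"}

if __name__ == "__main__":
    print(selector_matches({"istio": "ingressgateway"}, ingress_pod_labels))  # True
    print(selector_matches({"istio": "ingressgateway"}, app_pod_labels))      # False
```

The same rule explains how the test-svc Service finds our Pod: its selector app: test is a subset of the Pod’s labels.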

Note that our application lives in a dedicated namespace, so here the Gateway and the VirtualService (see below) are created in the same Namespace.

Create the Gateway:

[simterm]

$ kubectl apply -f test-istio.yaml
namespace/test-namespace unchanged
deployment.apps/test-deployment unchanged
service/test-svc unchanged
gateway.networking.istio.io/test-gateway created

[/simterm]

Check it:

[simterm]

$ kubectl -n test-namespace get gateways
NAME           AGE
test-gateway   17s

[/simterm]

VirtualService

Next, we are going to add a VirtualService that describes the “backend” where traffic will be sent.

The “backend” here is just an ordinary Kubernetes Service of our application, test-svc:

[simterm]

$ kubectl -n test-namespace get svc
NAME       TYPE       CLUSTER-IP       EXTERNAL-IP   PORT(S)        AGE
test-svc   NodePort   172.20.195.107   <none>        80:31581/TCP   15h

[/simterm]

Describe a VirtualService in the same namespace as our application and the Gateway created above:

    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: test-virtualservice
      namespace: test-namespace
    spec:
      hosts:
      - "*"
      gateways:
      - test-gateway
      http:
      - match:
        - uri:
            prefix: /
        route:
        - destination:
            host: test-svc
            port:
              number: 80
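A detail worth knowing here: the destination host test-svc is a short name, and Istio resolves short names relative to the namespace of the VirtualService itself, not the namespace of the Service. A minimal Python sketch of this expansion, as I understand it, is below. The `expand_host` function is hypothetical, `cluster.local` is the usual default cluster domain, and edge cases such as IP addresses are ignored.

```python
def expand_host(host: str, namespace: str, domain: str = "cluster.local") -> str:
    """Expand a short Service name as it is resolved for a rule in `namespace`."""
    if host == "*" or "." in host:
        return host  # a wildcard or an already qualified name is left untouched
    return f"{host}.{namespace}.svc.{domain}"


if __name__ == "__main__":
    print(expand_host("test-svc", "test-namespace"))
    # test-svc.test-namespace.svc.cluster.local
```

So if the VirtualService lived in another namespace, the short name test-svc would point to a different (probably non-existent) Service, and the full name would be the safer choice.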

Create it:

[simterm]

$ kubectl apply -f test-istio.yaml
namespace/test-namespace unchanged
deployment.apps/test-deployment unchanged
service/test-svc unchanged
gateway.networking.istio.io/test-gateway unchanged
virtualservice.networking.istio.io/test-virtualservice created

[/simterm]

Check:

[simterm]

$ kubectl -n test-namespace get virtualservice
NAME                  GATEWAYS         HOSTS   AGE
test-virtualservice   [test-gateway]   [*]     42s

[/simterm]

And check again the URL of the LoadBalancer of the Istio Ingress Gateway:

[simterm]

$ curl a6f***037.eu-west-3.elb.amazonaws.com
<!DOCTYPE html>
<html>
<head>
<title>Welcome to nginx!</title>
...

[/simterm]

And still, we are working without Envoy proxy aka sidecar in our application’s Pod:

[simterm]

$ kubectl -n test-namespace get pod -o jsonpath={.items[*].spec.containers[*].name}

web

[/simterm]

We will check a bit later why this works.

Kiali – traffic observation

Istio has a lot of addons: Prometheus for metrics collection and alerting, Grafana for metrics visualization, Jaeger for requests tracing, and Kiali to build a map of network and services. Read more at Integrations.

Install all addons:

[simterm]

$ kubectl apply -f samples/addons

[/simterm]

And execute istioctl dashboard kiali – Kiali will be opened in a default browser:

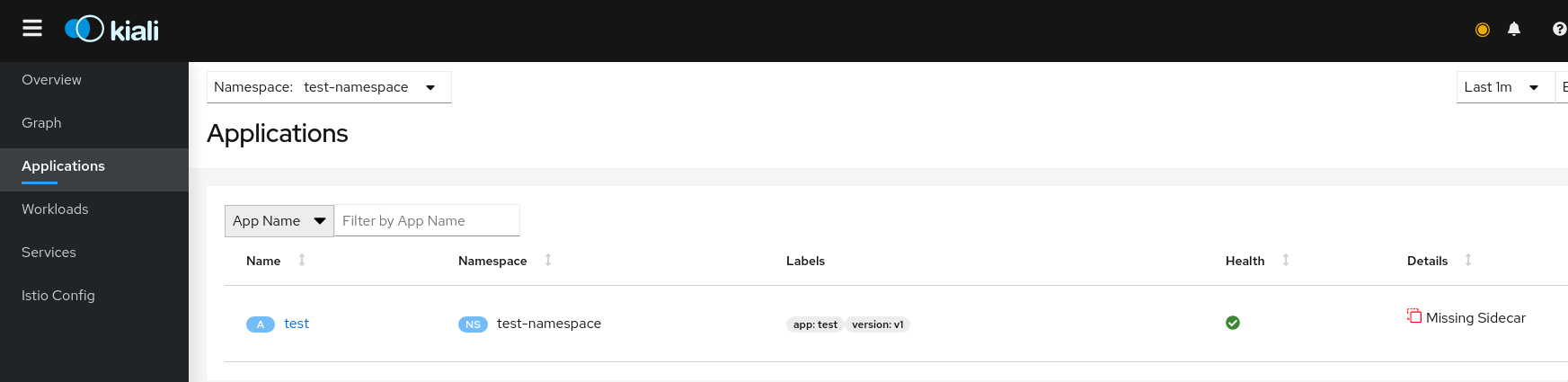

But if we navigate to Applications, we will see that our application is marked as “Missing Sidecar”:

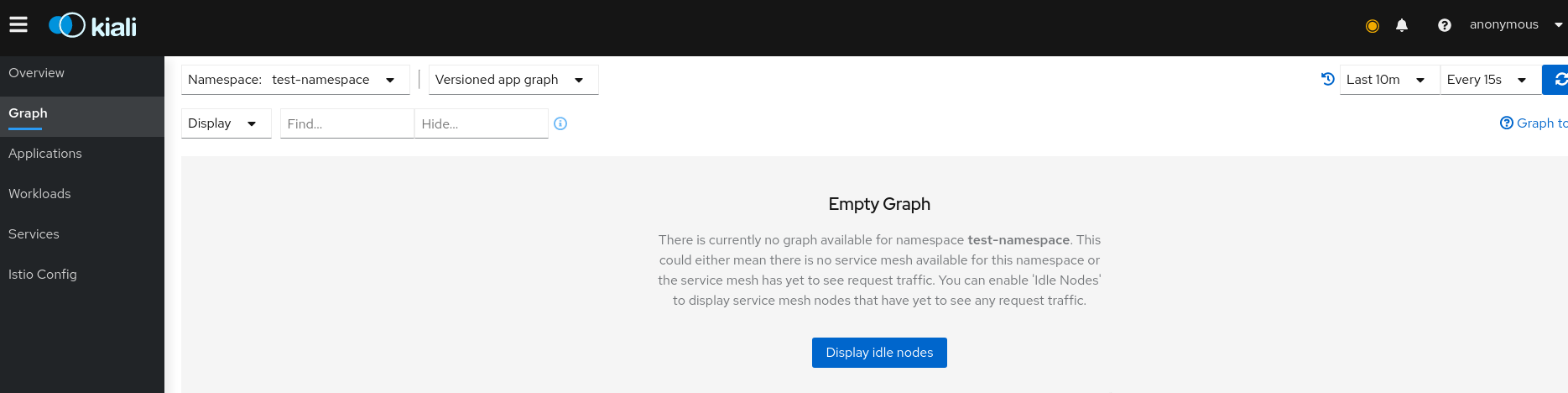

And there is no services map in the Graph:

Sidecar – Envoy proxy

As we remember, our pod has no container with an Envoy instance, yet the network is working: at this moment, iptables rules send traffic directly to the container with NGINX. Read more at Traffic flow from application container to sidecar proxy.

Iptables rules for Istio are configured by an additional InitContainer, istio-init, when a pod is started. At this moment, though, the rules are the default ones, configured by kube-proxy during our application deployment. Check the Kubernetes: Service, load balancing, kube-proxy, and iptables post for more details.

In the following post we will dive deeper into Istio networking. For now, let’s just add sidecar injection to our Namespace’s Pods and compare the iptables rules before and after.

Istio and iptables

Let’s check the iptables rules before we add a sidecar and istio-init.

Connect via SSH to a Kubernetes WorkerNode where your Pod is living and find NGINX Docker container:

[simterm]

[root@ip-10-22-35-66 ec2-user]# docker ps | grep nginx
22d64b132490 nginx "/docker-entrypoint.…" 3 minutes ago Up 3 minutes k8s_web_test-deployment-6864c5bf84-mk98r_test-namespace_8b88caf0-237a-4c94-ac71-186f8e701a7c_0

[/simterm]

Find a PID of the process in this container:

[simterm]

[root@ip-10-22-35-66 ec2-user]# docker top k8s_web_test-deployment-6864c5bf84-mk98r_test-namespace_8b88caf0-237a-4c94-ac71-186f8e701a7c_0
UID    PID     PPID    C   STIME   TTY   TIME       CMD
root   31548   31517   0   10:36   ?     00:00:00   nginx: master process nginx -g daemon off;
101    31591   31548   0   10:36   ?     00:00:00   nginx: worker process

[/simterm]

Using the nsenter utility, check the iptables rules in the network namespace of the process with PID 31548. Nothing unusual here for now: all traffic is just sent directly to our container:

[simterm]

[root@ip-10-22-35-66 ec2-user]# nsenter -t 31548 -n iptables -t nat -L
Chain PREROUTING (policy ACCEPT)
target     prot opt source               destination

Chain INPUT (policy ACCEPT)
target     prot opt source               destination

Chain OUTPUT (policy ACCEPT)
target     prot opt source               destination

Chain POSTROUTING (policy ACCEPT)
target     prot opt source               destination

[/simterm]

Running Sidecar

Documentation – Installing the Sidecar.

To automatically inject Envoy proxy instances to pods in the test-namespace namespace run the following:

[simterm]

$ kubectl label namespace test-namespace istio-injection=enabled
namespace/test-namespace labeled

[/simterm]

Check the labels:

[simterm]

$ kubectl get namespace test-namespace --show-labels
NAME             STATUS   AGE   LABELS
test-namespace   Active   11m   istio-injection=enabled

[/simterm]

But sidecars will be added only for new pods in this namespace.
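The decision is made once, when a Pod is created, which is why existing Pods stay untouched. A heavily simplified Python sketch of the logic is below. The `should_inject` function is hypothetical, and the real injection webhook has more rules than this; the only parts taken from Istio are the istio-injection namespace label used above and the sidecar.istio.io/inject Pod annotation, which allows a Pod to opt out.

```python
from typing import Dict, Optional


def should_inject(namespace_labels: Dict[str, str],
                  pod_annotations: Optional[Dict[str, str]] = None) -> bool:
    """Simplified decision: is a sidecar added to a newly created Pod?"""
    pod_annotations = pod_annotations or {}
    # A Pod can opt out explicitly, even in a namespace with injection enabled.
    if pod_annotations.get("sidecar.istio.io/inject") == "false":
        return False
    return namespace_labels.get("istio-injection") == "enabled"


if __name__ == "__main__":
    print(should_inject({"istio-injection": "enabled"}))  # True
    print(should_inject({}))                              # False
    print(should_inject({"istio-injection": "enabled"},
                        {"sidecar.istio.io/inject": "false"}))  # False
```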

We can add them manually with istioctl kube-inject, or just by recreating the pod:

[simterm]

$ kubectl -n test-namespace scale deployment test-deployment --replicas=0
deployment.apps/test-deployment scaled
$ kubectl -n test-namespace scale deployment test-deployment --replicas=1
deployment.apps/test-deployment scaled

[/simterm]

Check containers in the Pod again:

[simterm]

$ kubectl -n test-namespace get pod -o jsonpath={.items[*].spec.containers[*].name}

web istio-proxy

[/simterm]

Now we can see the istio-proxy container; this is our sidecar container with Envoy.

Also, check initContainers of the Pod:

[simterm]

$ kubectl -n test-namespace get pod -o jsonpath={.items[*].spec.initContainers[*].name}

istio-init

[/simterm]

And check Iptables rules again – find the container, its PID, and check the rules:

[simterm]

[root@ip-10-22-35-66 ec2-user]# nsenter -t 4194 -n iptables -t nat -L
Chain PREROUTING (policy ACCEPT)
target     prot opt source               destination
ISTIO_INBOUND  tcp  --  anywhere             anywhere

Chain INPUT (policy ACCEPT)
target     prot opt source               destination

Chain OUTPUT (policy ACCEPT)
target     prot opt source               destination
ISTIO_OUTPUT  tcp  --  anywhere             anywhere

Chain POSTROUTING (policy ACCEPT)
target     prot opt source               destination

Chain ISTIO_INBOUND (1 references)
target     prot opt source               destination
RETURN     tcp  --  anywhere             anywhere             tcp dpt:15008
RETURN     tcp  --  anywhere             anywhere             tcp dpt:ssh
RETURN     tcp  --  anywhere             anywhere             tcp dpt:15090
RETURN     tcp  --  anywhere             anywhere             tcp dpt:15021
RETURN     tcp  --  anywhere             anywhere             tcp dpt:15020
ISTIO_IN_REDIRECT  tcp  --  anywhere             anywhere

Chain ISTIO_IN_REDIRECT (3 references)
target     prot opt source               destination
REDIRECT   tcp  --  anywhere             anywhere             redir ports 15006

Chain ISTIO_OUTPUT (1 references)
target     prot opt source               destination
RETURN     all  --  ip-127-0-0-6.eu-west-3.compute.internal  anywhere
ISTIO_IN_REDIRECT  all  --  anywhere            !localhost            owner UID match 1337
RETURN     all  --  anywhere             anywhere             ! owner UID match 1337
RETURN     all  --  anywhere             anywhere             owner UID match 1337
ISTIO_IN_REDIRECT  all  --  anywhere            !localhost            owner GID match 1337
RETURN     all  --  anywhere             anywhere             ! owner GID match 1337
RETURN     all  --  anywhere             anywhere             owner GID match 1337
RETURN     all  --  anywhere             localhost
ISTIO_REDIRECT  all  --  anywhere             anywhere

Chain ISTIO_REDIRECT (1 references)
target     prot opt source               destination
REDIRECT   tcp  --  anywhere             anywhere             redir ports 15001

[/simterm]

Now we can see the additional iptables chains and rules created by Istio, which send traffic to the Envoy sidecar container, then to the application’s container, and back.
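The inbound part of these rules is easy to read as code. Below is a minimal Python sketch of what the ISTIO_INBOUND chain from the output above does with an incoming TCP connection. Port numbers are taken from that output; the `inbound_target` function and constant names are mine, and the ISTIO_OUTPUT chain, with its UID/GID 1337 checks, is intentionally left out.

```python
# Ports that the ISTIO_INBOUND chain skips with RETURN ("ssh" is port 22).
INBOUND_EXCLUDED_PORTS = {15008, 22, 15090, 15021, 15020}
ENVOY_INBOUND_PORT = 15006   # target of the ISTIO_IN_REDIRECT chain
ENVOY_OUTBOUND_PORT = 15001  # target of the ISTIO_REDIRECT chain (not modeled below)


def inbound_target(dest_port: int) -> int:
    """Port that actually receives an inbound TCP connection to `dest_port`."""
    if dest_port in INBOUND_EXCLUDED_PORTS:
        return dest_port       # RETURN: delivered without redirection
    return ENVOY_INBOUND_PORT  # REDIRECT: handed to the Envoy inbound listener


if __name__ == "__main__":
    print(inbound_target(80))     # 15006: NGINX traffic goes through Envoy first
    print(inbound_target(15021))  # 15021: excluded port, no redirection
```

So a request to NGINX on port 80 first lands on the Envoy listener on port 15006, and only then does Envoy pass it to the application container.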

Go back to the Kiali dashboard and check the map:

And requests tracing:

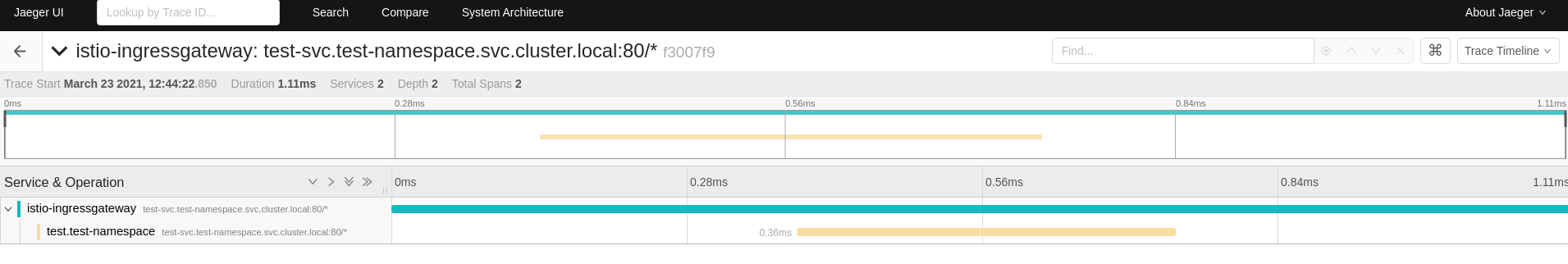

Traces are also available via the Jaeger dashboard which can be accessed with istioctl dashboard jaeger:

Also, with istioctl dashboard prometheus you can open Prometheus and check available metrics, see more at Querying Metrics from Prometheus:

Actually, that’s all for now.

Later, we will integrate Istio with an AWS Application LoadBalancer, deploy and configure an Istio instance on AWS Elastic Kubernetes Service with Ansible and Helm, and cover Gateway and VirtualService configuration and debugging.

Useful links

- Learn Istio using Interactive Browser-Based Scenarios – basic course on Katacoda

- Istio Service Mesh Workshop – Istio overview

- Service Mesh with Istio – one more workshop, from AWS EKS

- How Istio Works Behind the Scenes on Kubernetes – architecture, components

- Debugging Envoy and Istiod – debugging Istio with istioctl

- Starting with Istio, see also Istio, Part II and Istio, Part III, why use it? – overview, architecture, network in Istio

- North-South Traffic Management of Istio Gateways (with Answers from Service Mesh Experts) – network in Istio

- A Crash Course For Running Istio – Istio, Envoy, iptables, components in Istio, very nice post

- How to Make Istio Work with Your Apps – troubleshooting and proxy-status examples

- Reducing Istio proxy resource consumption with outbound traffic restrictions – resources in Istio and sidecars tuning

- Life of a Packet through Istio – seems to be not a bad networking overview in Istio, but I didn’t watch the video

- Sidecar injection and transparent traffic hijacking process in Istio explained in detail – sidecars, iptables, and routing

- An in-depth intro to Istio Ingress – Ingress, Gateway, and VirtualService, examples

- Understanding Istio Ingress Gateway in Kubernetes – the same as above

- Istio Gateway – the same as above

- Getting started with Istio and next parts – Istio in Practice – Ingress Gateway, Istio in Practice – Routing with VirtualService

- 4 Istio Gateway: getting traffic into your cluster – again about Gateway and VirtualService
